고민점: 스윙 결과 별로 landmark의 좌표를 통해 각도와 위치로 일치율을 판단해야 한다.

landmark의 좌표는 x, y로 2차원으로 나타나지만 이미지의 전체 크기에 따라 상대적으로 값이 변한다.

landmark의 좌표가 x, y, z로 3차원으로 나타난다. 이미지 크기와 상관없이 skeleton의 크기에 의해 자동으로 resize된다.

| Dimension |

장점 |

단점 |

| 2차원 |

좌표값을 바로 응용할 수 있음 |

이미지 크기에 따라 상대적으로 좌표값이 바뀌는 것을 계산해야 한다. |

| 3차원 |

100% 정확한 값을 얻을 수 있다. |

3차원의 값을 가지고 2차원 이미지에 표시해줘야 한다. |

방안

- 입력 이미지의 사람 크기 통일 (bounding box 이용)

- 3차원 방법

코드 (Colab)

from google.colab import files

uploaded = files.upload()

import cv2

from google.colab.patches import cv2_imshow

import math

import numpy as np

DESIRED_HEIGHT = 480

DESIRED_WIDTH = 480

def resize_and_show(image):

h, w = image.shape[:2]

if h < w:

img = cv2.resize(image, (DESIRED_WIDTH, math.floor(h/(w/DESIRED_WIDTH))))

else:

img = cv2.resize(image, (math.floor(w/(h/DESIRED_HEIGHT)), DESIRED_HEIGHT))

cv2_imshow(img)

# Read images with OpenCV.

images = {name: cv2.imread(name) for name in uploaded.keys()}

# Preview the images.

for name, image in images.items():

print(name)

resize_and_show(image)import mediapipe as mp

mp_pose = mp.solutions.pose

mp_drawing = mp.solutions.drawing_utils

mp_drawing_styles = mp.solutions.drawing_styles

help(mp_pose.Pose)

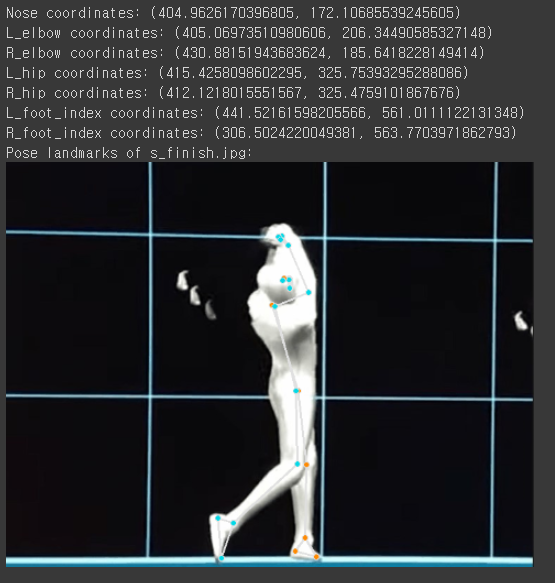

- landmark 2차원 좌표값 계산 후 시각화

# Run MediaPipe Pose and draw pose landmarks.

with mp_pose.Pose(

static_image_mode=True, min_detection_confidence=0.5, model_complexity=2) as pose:

for name, image in images.items():

# Convert the BGR image to RGB and process it with MediaPipe Pose.

results = pose.process(cv2.cvtColor(image, cv2.COLOR_BGR2RGB))

# Print nose landmark.

image_hight, image_width, _ = image.shape

if not results.pose_landmarks:

continue

print(

f'Nose coordinates: ('

f'{results.pose_landmarks.landmark[mp_pose.PoseLandmark.NOSE].x * image_width}, '

f'{results.pose_landmarks.landmark[mp_pose.PoseLandmark.NOSE].y * image_hight})'

)

print(

f'L_elbow coordinates: ('

f'{results.pose_landmarks.landmark[mp_pose.PoseLandmark.LEFT_ELBOW].x * image_width}, '

f'{results.pose_landmarks.landmark[mp_pose.PoseLandmark.LEFT_ELBOW].y * image_hight})'

)

print(

f'R_elbow coordinates: ('

f'{results.pose_landmarks.landmark[mp_pose.PoseLandmark.RIGHT_ELBOW].x * image_width}, '

f'{results.pose_landmarks.landmark[mp_pose.PoseLandmark.RIGHT_ELBOW].y * image_hight})'

)

print(

f'L_hip coordinates: ('

f'{results.pose_landmarks.landmark[mp_pose.PoseLandmark.LEFT_HIP].x * image_width}, '

f'{results.pose_landmarks.landmark[mp_pose.PoseLandmark.LEFT_HIP].y * image_hight})'

)

print(

f'R_hip coordinates: ('

f'{results.pose_landmarks.landmark[mp_pose.PoseLandmark.RIGHT_HIP].x * image_width}, '

f'{results.pose_landmarks.landmark[mp_pose.PoseLandmark.RIGHT_HIP].y * image_hight})'

)

print(

f'L_foot_index coordinates: ('

f'{results.pose_landmarks.landmark[mp_pose.PoseLandmark.LEFT_FOOT_INDEX].x * image_width}, '

f'{results.pose_landmarks.landmark[mp_pose.PoseLandmark.LEFT_FOOT_INDEX].y * image_hight})'

)

print(

f'R_foot_index coordinates: ('

f'{results.pose_landmarks.landmark[mp_pose.PoseLandmark.RIGHT_FOOT_INDEX].x * image_width}, '

f'{results.pose_landmarks.landmark[mp_pose.PoseLandmark.RIGHT_FOOT_INDEX].y * image_hight})'

)

# Draw pose landmarks.

print(f'Pose landmarks of {name}:')

annotated_image = image.copy()

mp_drawing.draw_landmarks(

annotated_image,

results.pose_landmarks,

mp_pose.POSE_CONNECTIONS,

landmark_drawing_spec=mp_drawing_styles.get_default_pose_landmarks_style())

resize_and_show(annotated_image)import math

def get_num():

x1 = results.pose_landmarks.landmark[mp_pose.PoseLandmark.RIGHT_HIP].x * image_width

y1 = results.pose_landmarks.landmark[mp_pose.PoseLandmark.RIGHT_HIP].y * image_hight

x2 = results.pose_landmarks.landmark[mp_pose.PoseLandmark.LEFT_FOOT_INDEX].x * image_width

y2 = results.pose_landmarks.landmark[mp_pose.PoseLandmark.LEFT_FOOT_INDEX].y * image_hight

return [x1, y1, x2, y2]

def cal_rad(arr):

rad = math.atan2(arr[3]-arr[1],arr[2]-arr[0])

return rad

def radTodeg(rad):

PI = math.pi

deg = (rad*180)/PI

print("{0:0.2f}".format(deg))

arr = get_num()

rad = cal_rad(arr)

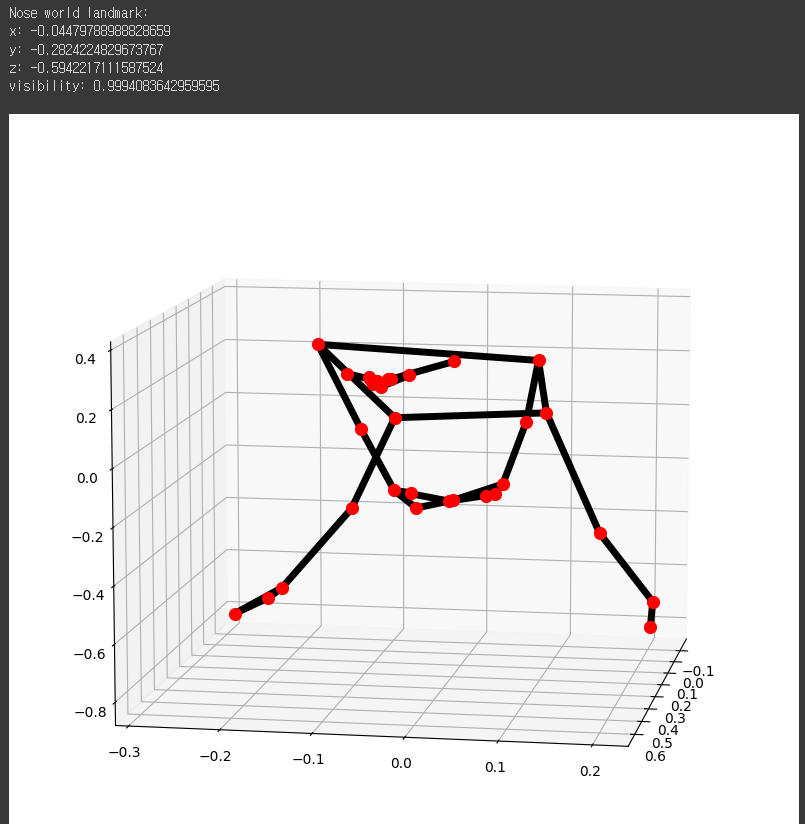

radTodeg(rad)# Run MediaPipe Pose and plot 3d pose world landmarks.

with mp_pose.Pose(

static_image_mode=True, min_detection_confidence=0.5, model_complexity=2) as pose:

for name, image in images.items():

results = pose.process(cv2.cvtColor(image, cv2.COLOR_BGR2RGB))

# Print the real-world 3D coordinates of nose in meters with the origin at

# the center between hips.

print('Nose world landmark:'),

print(results.pose_world_landmarks.landmark[mp_pose.PoseLandmark.NOSE])

# Plot pose world landmarks.

mp_drawing.plot_landmarks(

results.pose_world_landmarks, mp_pose.POSE_CONNECTIONS)

고민점: 스윙 결과 별로 landmark의 좌표를 통해 각도와 위치로 일치율을 판단해야 한다.

landmark의 좌표는 x, y로 2차원으로 나타나지만 이미지의 전체 크기에 따라 상대적으로 값이 변한다.

landmark의 좌표가 x, y, z로 3차원으로 나타난다. 이미지 크기와 상관없이 skeleton의 크기에 의해 자동으로 resize된다.

방안

코드 (Colab)