Abstract

- Proposes a multi-agent dual learning framework to boost the performance of neural machine translation (NMT)

- Dual learning leverages the duality between the primal task (X->Y) and the dual task (Y->X)

- State-of-the-art BLEU on WMT 2014 En-De: 30.67 (+2.2 over Transformer big)

Details

Introduction

- Dual Learning

- Formulated as a two-agent system in which the primal model learns the mapping f : X -> Y and the dual model learns the mapping g : Y -> X.

- Given x in X, the reconstruction loss delta(x, g(f(x))) is used as the training signal.

- In principle, monolingual corpora alone are sufficient to train an NMT model in the dual learning framework.

- Refer to the original dual learning paper (Xia et al. 2016), published at NIPS 2016, for more details.

- Multi-agent Dual Learning

- Instead of a single f and g, the multi-agent system uses N - 1 additional agents on each side, each pre-trained on the parallel corpus with a different random seed. The ensemble effect improves the quality of the feedback signal.

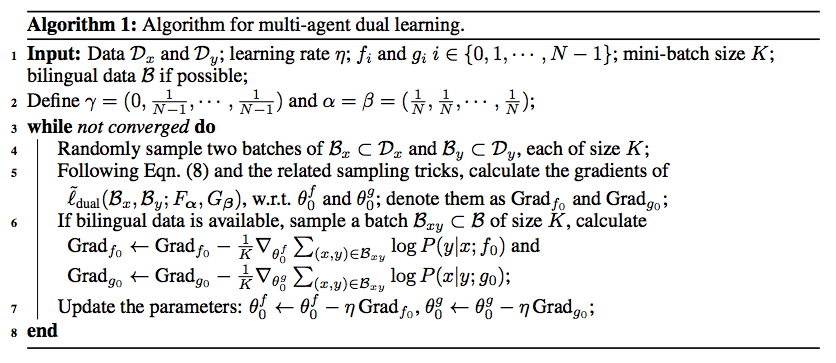
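The reconstruction loss and the ensemble idea above can be illustrated with a toy sketch. It rests on assumptions that are not taken from the paper:

- Each agent is a small table of conditional probabilities over a finite vocabulary.
- delta is the negative log-likelihood of recovering x.
- The multi-agent ensemble is a weighted average of the agents' output distributions.

The names `ensemble` and `reconstruction_loss` and all probabilities are hypothetical.

```python
import math
from typing import Dict, List

# Hypothetical representation: a model is a table P(target | source)
# stored as {source: {target: probability}}.
Model = Dict[str, Dict[str, float]]


def ensemble(models: List[Model], weights: List[float]) -> Model:
    """Mix several agents into one model by weighting their output distributions.

    Assumption for illustration: the multi-agent ensemble is a weighted
    average of the agents' distributions, with weights summing to 1.
    """
    if abs(sum(weights) - 1.0) > 1e-9:
        raise ValueError("ensemble weights must sum to 1")
    mixed: Model = {}
    for model, w in zip(models, weights):
        for src, dist in model.items():
            row = mixed.setdefault(src, {})
            for tgt, p in dist.items():
                row[tgt] = row.get(tgt, 0.0) + w * p
    return mixed


def reconstruction_loss(x: str, f: Model, g: Model) -> float:
    """Expected delta(x, g(f(x))) with delta taken as negative log-likelihood.

    Computes E_{y ~ f(.|x)}[-log g(x | y)] by summing over every candidate y.
    """
    loss = 0.0
    for y, p_y in f[x].items():
        p_back = g.get(y, {}).get(x, 0.0)
        loss += p_y * -math.log(max(p_back, 1e-12))  # clamp to avoid log(0)
    return loss


# Toy English -> German agents (hypothetical numbers).
f0 = {"cat": {"Katze": 0.6, "Hund": 0.4}}  # primal agent
f1 = {"cat": {"Katze": 1.0}}  # extra pre-trained primal agent
g0 = {"Katze": {"cat": 0.9, "dog": 0.1}, "Hund": {"cat": 0.2, "dog": 0.8}}  # dual agent

two_agent = reconstruction_loss("cat", f0, g0)
multi_agent = reconstruction_loss("cat", ensemble([f0, f1], [0.5, 0.5]), g0)
print(round(two_agent, 4), round(multi_agent, 4))
```

In this toy case, mixing in the more confident agent f1 lowers the expected reconstruction loss from about 0.707 to about 0.406. This only illustrates the arithmetic and says nothing about the gains reported in the paper.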

Algorithm
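This summary does not reproduce the paper's algorithm, so what follows is only a toy sketch of the training idea described in the Introduction, not the paper's procedure. The setup works as follows:

- The trainable primal agent f0 is ensembled with a frozen pre-trained agent f1.
- A frozen dual model scores how well each candidate translation reconstructs x.
- Plain gradient descent updates only f0 to lower the expected reconstruction loss.

The two-candidate setup, all probabilities, the ensemble weights, and the names `loss_and_grad` and `train` are hypothetical. Computing the exact expectation over candidates is only tractable because the toy has two of them.

```python
import math
from typing import List, Tuple

# Toy setting (all numbers hypothetical): one source sentence x = "cat" with
# two candidate translations. Only the primal agent f0 is trained; it is
# parameterised by one logit per candidate.
CANDIDATES = ["Katze", "Hund"]
F1 = [0.9, 0.1]  # frozen extra primal agent f1: P(y | x)
G_BACK = [0.9, 0.2]  # frozen dual side: P(x | y) for each candidate y
ALPHA = (0.5, 0.5)  # assumed ensemble weights for f0 and f1

# Reconstruction cost of each candidate: -log P(x | y) under the dual model.
COSTS = [-math.log(p) for p in G_BACK]


def softmax(logits: List[float]) -> List[float]:
    m = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def loss_and_grad(logits: List[float]) -> Tuple[float, List[float]]:
    """Expected reconstruction loss of the ensemble and its gradient w.r.t. f0's logits."""
    p = softmax(logits)
    mixed = [ALPHA[0] * p_y + ALPHA[1] * q_y for p_y, q_y in zip(p, F1)]
    loss = sum(m * c for m, c in zip(mixed, COSTS))
    # Only f0 depends on the logits; d p_y / d z_k = p_y * (1[y == k] - p_k).
    mean_cost = sum(p_y * c for p_y, c in zip(p, COSTS))
    grad = [ALPHA[0] * p_k * (c_k - mean_cost) for p_k, c_k in zip(p, COSTS)]
    return loss, grad


def train(steps: int = 100, lr: float = 1.0) -> Tuple[List[float], List[float]]:
    """Plain gradient descent on f0; f1 and the dual side stay frozen."""
    logits = [0.0] * len(CANDIDATES)
    history = []
    for _ in range(steps):
        loss, grad = loss_and_grad(logits)
        history.append(loss)
        logits = [z - lr * g for z, g in zip(logits, grad)]
    return softmax(logits), history


probs, history = train()
print(dict(zip(CANDIDATES, probs)), history[0], history[-1])
```

In this sketch, f0 moves toward the candidate the frozen dual side reconstructs best, while the frozen extra agent keeps contributing to the ensembled output. The dual agent could be updated symmetrically on monolingual Y data.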

Results

- Experimental Settings

- Model : Transformer Big

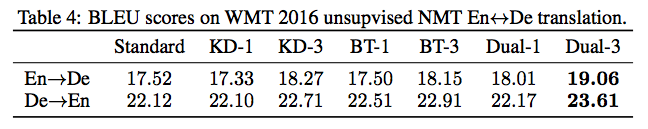

- Compared against knowledge distillation (KD), back-translation (BT), and two-agent dual learning (Dual), each in single-agent and multi-agent settings

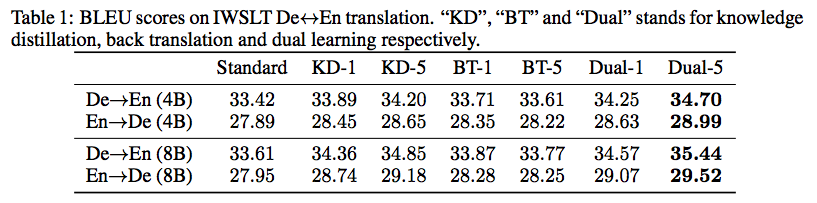

- IWSLT En <-> De

- KD improves BLEU slightly, BT has no effect, and Dual-5 gives the best BLEU improvement

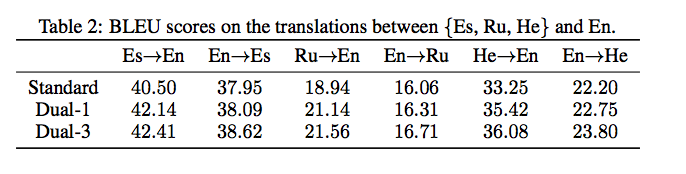

- IWSLT Es, Ru, He -> En

- Results are consistent across the various IWSLT language pairs

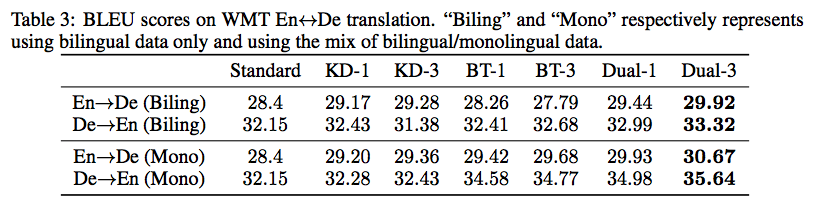

- WMT 2014 En <-> De Bilingual

- KD improves BLEU slightly, BT has no effect, and Dual-5 gives the best BLEU improvement (SOTA)

- WMT 2014 En <-> De Monolingual

- Also performs best in unsupervised NMT (SOTA)

Image Translation

- Compares multi-agent dual learning (MADL) with CycleGAN on image translation; MADL produces more robust and cleaner translated images

Personal Thoughts

- The multi-agent pre-trained models provide a good initialization point and improve the quality of the feedback signal

- Earlier dual learning work seemed to have mostly theoretical merit and was not practical enough; this paper uncovers its practical merit as well.

- Seems to work across various languages

Link : https://openreview.net/pdf?id=HyGhN2A5tm

Authors : Anonymous
