diff --git a/CHANGELOG.md b/CHANGELOG.md

index b654c49..24d9439 100644

--- a/CHANGELOG.md

+++ b/CHANGELOG.md

@@ -2,6 +2,76 @@

All notable changes to this project will be documented in this file.

+## [0.6.0] - 2026-02-26

+

+### Breaking changes

+- **Removed `set_backend()`, `get_backend_info()`, `reset_backend()`** — only one backend (C++ native) exists since v0.5.0, so the multi-backend API was dead code. Use `from mcpower.backends import get_backend` if you need the backend instance directly

+- **Removed `set_heterogeneity()` and `set_heteroskedasticity()`** — heterogeneity and heteroskedasticity are now controlled exclusively through scenario configurations (`set_scenario_configs()`). The optimistic scenario uses zero perturbation; realistic/doomer scenarios apply these automatically

+- **Removed dead scipy fallback code** from `distributions.py` — scipy was never a runtime dependency since v0.5.0, so the fallback paths were unreachable dead code. The module now cleanly fails with an `ImportError` if the C++ native backend is missing

+- **`_create_power_plot()` returns `fig`** — the function now accepts a `show=True` parameter and always returns the matplotlib figure object. Set `show=False` to suppress `plt.show()` for programmatic use

+- **`apply()` made private (`_apply()`)** — the method is now `_apply()` and called automatically by `find_power()` / `find_sample_size()`. Direct calls should use `model._apply()` instead

+- **`[all]` extra no longer includes `statsmodels`** — use `pip install mcpower[lme]` to get statsmodels for mixed-effects models

+

+### Added

+- **`test_formula` parameter** on `find_power()` and `find_sample_size()` — test a reduced model against data generated from the full model to evaluate power under model misspecification. For example, generate data with `y = x1 + x2 + x3` but test with `test_formula="y ~ x1 + x2"` to see power when `x3` is omitted. Supports interactions, factors, and mixed models.

+- **C++ non-normal residual generation** — scenario perturbations now generate heavy-tailed (Student-t) and skewed (chi-squared) residuals directly in C++ via `residual_dist`/`residual_df` parameters in `generate_y()`, replacing the Python-side post-hoc perturbation approach. Applies to all model types (OLS and LME)

+- **`optimistic` scenario** is now a first-class entry in `DEFAULT_SCENARIO_CONFIG` with all-zero perturbation values, eliminating the special `scenario_config=None` code path. Custom scenarios inherit from the optimistic baseline, ensuring all required keys exist

+

+### Fixed

+- **`set_variable_type()` docstring listed wrong distribution types** — documented non-existent `"skewed"` type; now lists all supported types: `right_skewed`, `left_skewed`, `high_kurtosis`, `uniform`

+- **`set_scenario_configs()` docstring referenced non-existent keys** — `"effect_size_jitter"` and `"distribution_jitter"` replaced with actual keys (`correlation_noise_sd`, `distribution_change_prob`, etc.)

+- **String factor levels crash in LME variance computation** — `proportions[level - 1]` crashed when factor levels were strings (e.g. `"Japan"`). Now looks up level position in the label list

+- **Division by zero on constant-variance columns** — `upload_data()` normalization produced `inf`/`NaN` when a column had zero variance. Now raises `ValueError` with the column name

+- **Pending state not cleared after `_apply()`** — calling `_apply()` twice could re-apply the same effects. Pending fields are now reset after each `_apply()` call

+- **Parser crash on unbalanced parentheses** — unmatched `)` caused `paren_count` to go negative, producing silent misparses. Now raises `ValueError`

+- **Update checker wrote cache inside installed package** — moved cache file to `~/.cache/mcpower/update_cache.json`

+- **Update checker unbounded response read** — `response.read()` now limited to 1 MB

+- **`scenario_config` dict access on `None`** — added `None` guards for optional scenario configuration lookups

+- **NaN values in uploaded data** — `upload_data()` now rejects data containing NaN values with a clear error message listing affected columns

+- **Formula minus-sign silently dropped terms** — `y = x1 - x2` silently ignored `x2`. Now raises `ValueError` explaining that term removal with `-` is not supported

+- **`_create_table` crash on empty rows** — formatter now handles empty row lists by computing column widths from headers only

+- **`_create_power_plot` crash when `first_achieved` not in sample sizes** — added bounds check before `.index()` call

+- **Redundant `_validate_cluster_sample_size` call** — removed duplicate validation in `find_power()` (already called per-sample-size in `find_sample_size()`)

+

+### Changed

+- **`upload_data()` returns `self`** for method chaining consistency

+- **Assert statements replaced with `RuntimeError`** — internal assertions now raise proper exceptions instead of using `assert`

+- **Removed "(not yet implemented)" from mixed-model docstrings** — mixed model testing has been implemented since v0.4.2

+- **Thread-safe RNG in data generation** — replaced global `np.random.seed()` with local `np.random.RandomState()` for thread safety

+- **Update checker runs in a background thread** — no longer blocks `import mcpower` on slow networks

+- **Module-level deduplication for update checker** — prevents redundant version checks within the same Python session

+- **Removed unused `cluster_column_indices` parameter** from `_lme_analysis_wrapper()` and `_lme_analysis_statsmodels()` — was explicitly marked unused and kept only for API compatibility

+- **Scenario formatters iterate dynamically** — no longer hardcode scenario names, enabling custom scenario display

+

+### Packaging

+- **`tqdm` added as core dependency** (`>=4.60.0`) — used for progress bars

+- **Removed stale pytest warning filter** for `"Mixed-effects models are experimental"` (warning was removed in v0.5.4)

+- **NumPy minimum version relaxed** to `>=1.26.0` (was `>=2.0.0`) in both build-requires and runtime dependencies

+- **`scikit-build-core` bumped** to `>=0.10` (was `>=0.5`)

+- **`statsmodels` added to `[dev]` extras** for test/development convenience

+- **Documentation URL** now points to the GitHub wiki

+- **Changelog URL** added to project URLs

+- **Removed unused pytest markers** (`unit`, `integration`) — only `lme` marker remains

+- **Per-module mypy overrides** replace blanket `ignore_missing_imports`

+

+### Documentation

+- Updated README requirements section: added `tqdm`, specified `NumPy (>=1.26.0)`

+- Changed `pip install mcpower[all]` → `pip install mcpower[lme]` for statsmodels installation

+- Wiki documentation review and cleanup: fixed broken links, corrected API signatures (`set_scenario_configs` parameter name), removed stale `apply()` and `set_heterogeneity()` wiki pages, fixed formula redundancy in Model Specification, corrected Tukey return value docs, added mixed-model caveats

+

+### Technical

+- Removed ~150 lines of dead scipy fallback shims from `distributions.py`

+- Removed `_BACKEND` sentinel variable (only one backend exists)

+- C++ `generate_y()` now accepts `residual_dist` and `residual_df` parameters for non-normal error generation

+- `suppress_output` test fixture now actually suppresses stdout (was a no-op)

+- Removed unused `correlation_matrix_3x3` test fixture

+- Removed empty `tests/mcpower/` artifact directory

+- Added unit tests for `ResultsProcessor` (`test_results.py`)

+- Added unit tests for `normalize_upload_input` (`test_upload_data_utils.py`)

+- Added integration tests for `test_formula` feature (`test_test_formula.py`)

+- Added unit tests for `test_formula_utils` (`test_test_formula_utils.py`)

+- Rewrote optimizer tests to test native backend directly (removed dead scipy fallback tests)

+

## [0.5.4] - 2026-02-22

### Changed

diff --git a/README.md b/README.md

index a230021..2bd003a 100644

--- a/README.md

+++ b/README.md

@@ -21,6 +21,10 @@

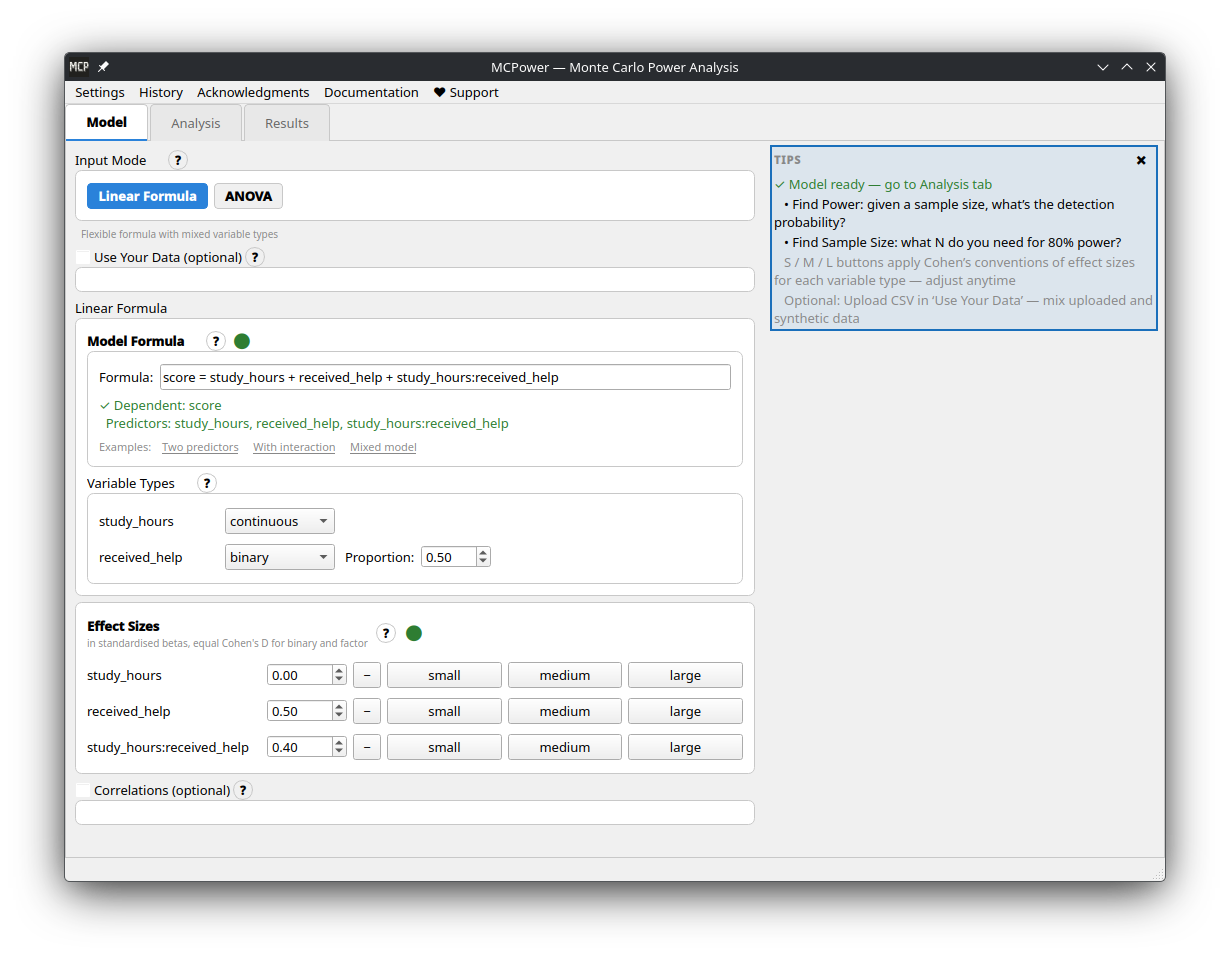

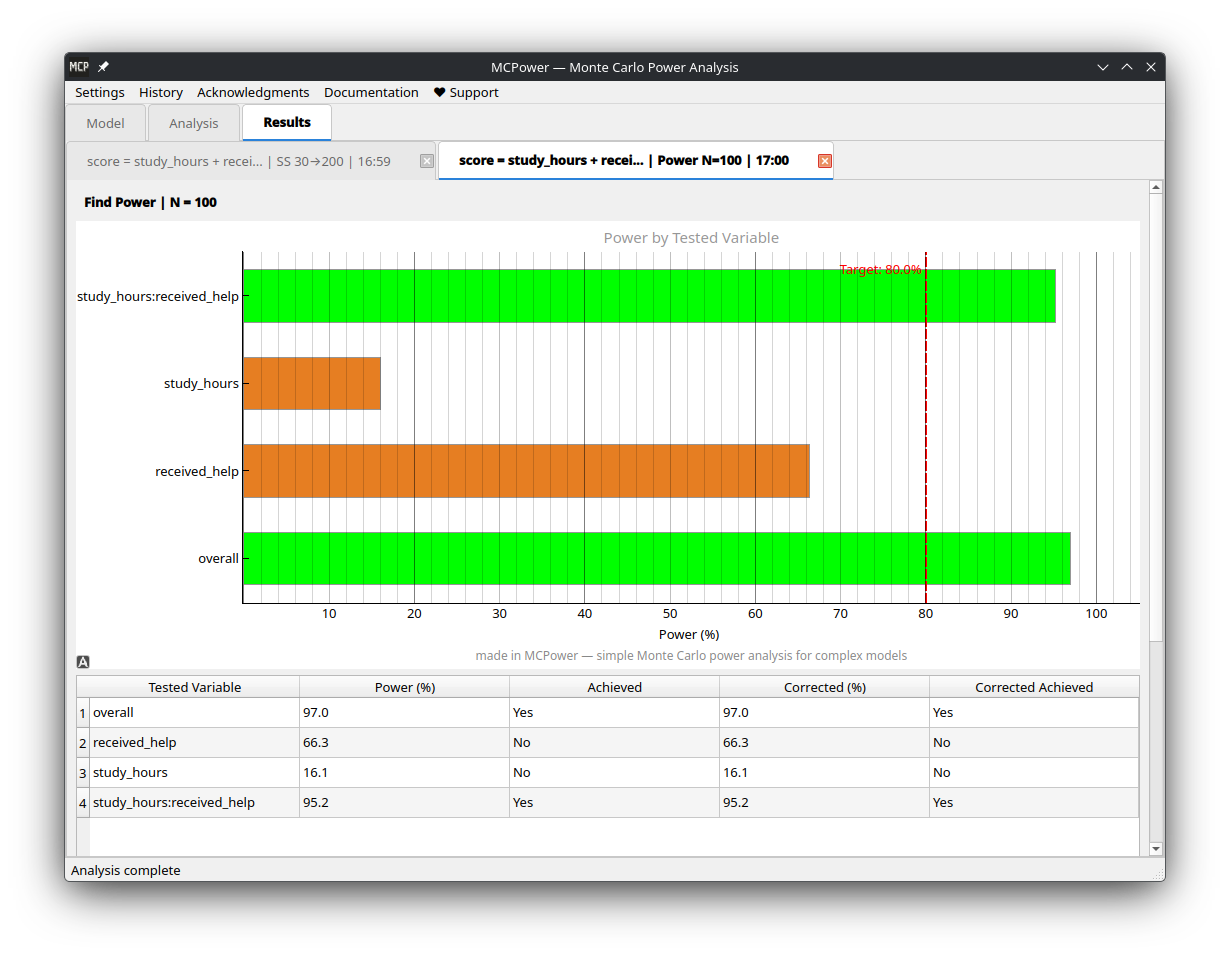

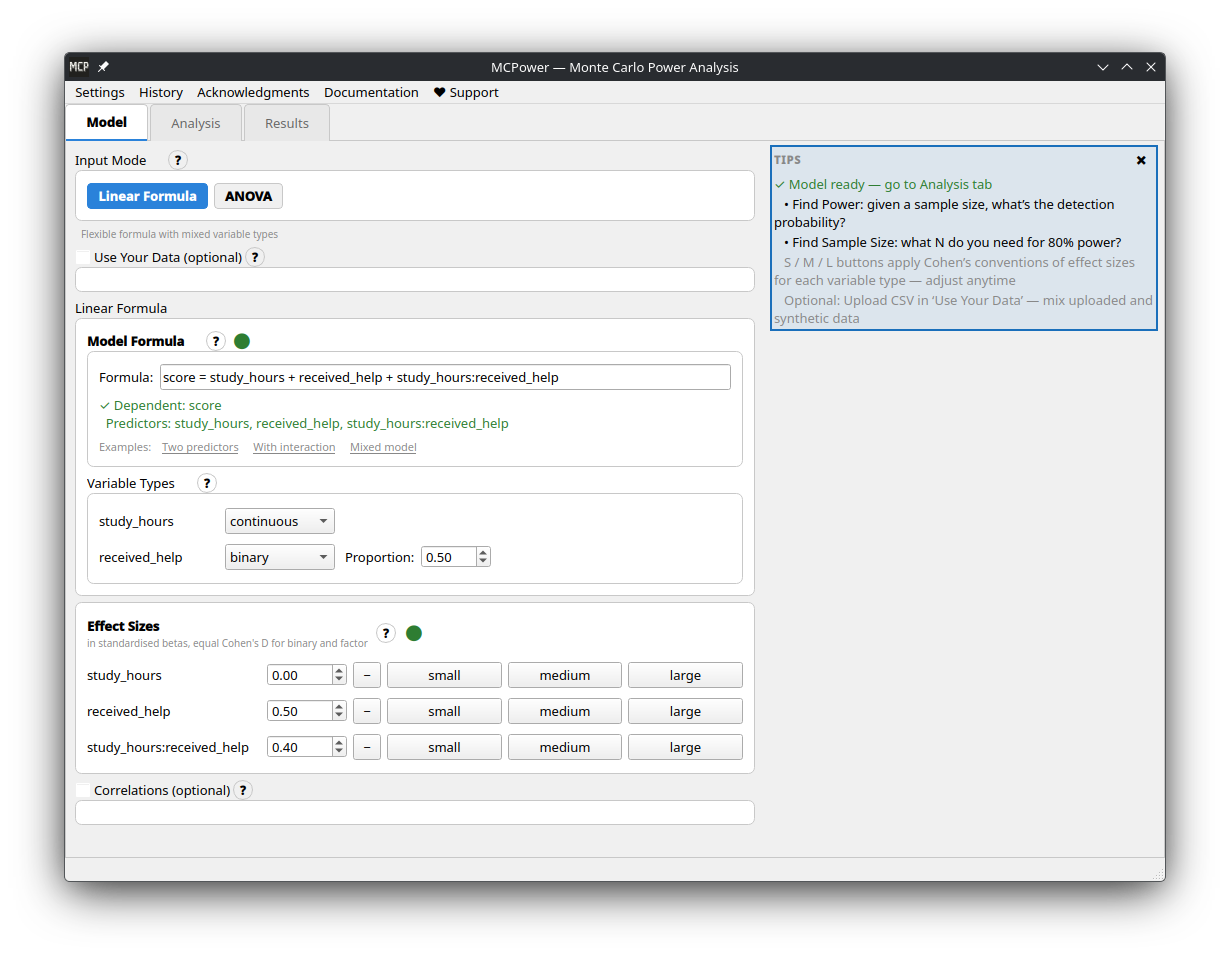

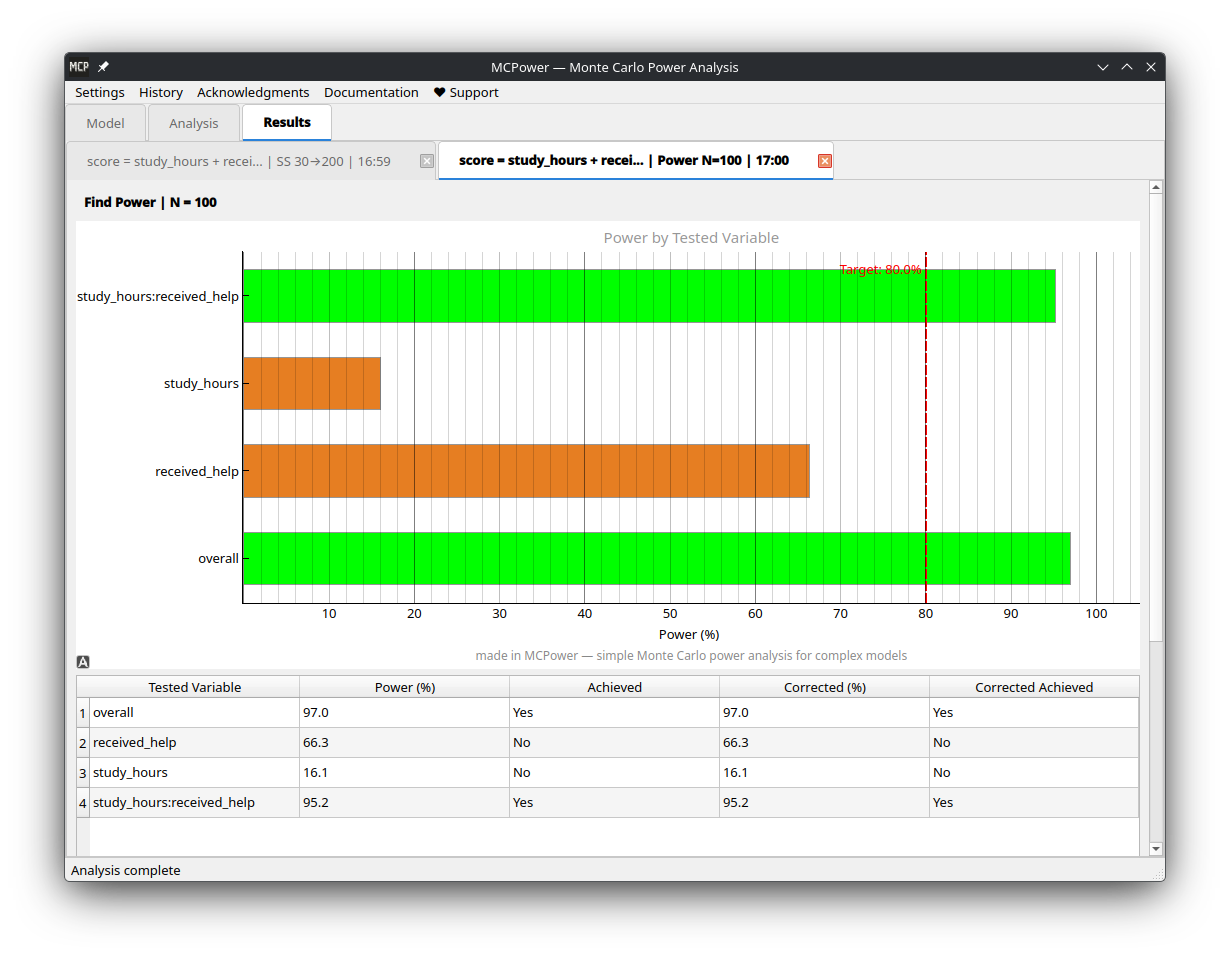

It's a Python package, but prefer a graphical interface? **[MCPower GUI](https://github.com/pawlenartowicz/mcpower-gui)** is a standalone desktop app — no Python installation required. Download ready-to-run executables for Windows, Linux, and macOS from the [releases page](https://github.com/pawlenartowicz/mcpower-gui/releases/latest).

+| Model setup | Results |

+|:---:|:---:|

+|  |

|  |

+

## Why MCPower?

Traditional power formulas break down with interactions, correlated predictors, categorical variables, or non-normal data. MCPower simulates instead — generates thousands of datasets like yours, fits your model, and counts how often the effects are detected.

@@ -297,19 +301,20 @@ model.set_effects("group[2]=0.4, group[3]=0.6, covariate=0.3")

# Use "vs" syntax for pairwise comparisons + correction="tukey"

model.find_power(

sample_size=150,

- target_test="group[0] vs group[1], group[0] vs group[2]",

+ target_test="group[1] vs group[2], group[1] vs group[3]",

correction="tukey"

)

```

### Test Individual Assumption Violations

```python

-# Manually add specific violations (without full scenario analysis)

-model.set_heterogeneity(0.2) # Effect sizes vary between people

-model.set_heteroskedasticity(0.15) # Violation of equal variance assumption

+# Add specific violations via custom scenario configs

+model.set_scenario_configs({

+ "my_test": {"heterogeneity": 0.2, "heteroskedasticity": 0.15}

+})

-# Run with your manual settings (no automatic scenario variations)

-model.find_sample_size(target_test="treatment")

+# Run with scenario variations

+model.find_sample_size(target_test="treatment", scenarios=True)

```

### Mixed-Effects Models

@@ -392,7 +397,7 @@ model.find_power(sample_size=200, progress_callback=False)

| **Factor effects** | **`model.set_effects("var[2]=0.5, var[3]=0.7")`** |

| Correlated predictors | `model.set_correlations("corr(var1, var2)=0.4")` |

| Multiple testing correction | Add `correction="FDR"`, `"Holm"`, `"Bonferroni"`, or `"Tukey"`|

-| Post-hoc pairwise comparison | `target_test="group[0] vs group[1]"` with `correction="tukey"` |

+| Post-hoc pairwise comparison | `target_test="group[1] vs group[2]"` with `correction="tukey"` |

| Mixed model (random intercept) | `MCPower("y ~ x + (1\|group)")` + `model.set_cluster(...)` |

| Random slopes | `MCPower("y ~ x + (1+x\|group)")` + `set_cluster(..., random_slopes=["x"], slope_variance=0.1)` |

| Nested random effects | `MCPower("y ~ x + (1\|A/B)")` + two `set_cluster()` calls |

@@ -424,7 +429,7 @@ model.find_power(sample_size=200, progress_callback=False)

- For simple models where all assumptions are clearly met.

- For large analyses with tens of thousands of observations, tiny effects, or very low alpha levels.

-## What Makes Scenarios Different? (Be careful, unvalidated, preliminary scenarios)

+## What Makes Scenarios Different? (Rule-of-thumb scenarios)

**Traditional power analysis assumes perfect conditions.** MCPower's scenarios add realistic "messiness":

@@ -478,8 +483,8 @@ model.set_variable_type("treatment=(factor,3), education=(factor,4)")

# Set effects for specific levels

model.set_effects("treatment[2]=0.5, treatment[3]=0.7, education[2]=0.3")

-# Or set same effect for all levels of a factor

-model.set_effects("treatment=0.5") # Applies to treatment[2] and treatment[3]

+# Each non-reference level needs its own effect

+model.set_effects("treatment[2]=0.5, treatment[3]=0.7")

# Important: Factors cannot be used in correlations

# This will error: model.set_correlations("corr(treatment, education)=0.3")

@@ -508,12 +513,31 @@ model.set_alpha(0.01) # Stricter significance (p < 0.01)

model.set_simulations(10000) # High precision (slower)

```

+### Model Misspecification Testing

+

+Use `test_formula` to generate data with one model but test with a simpler one -- useful for evaluating the power impact of omitting variables:

+

+```python

+# Generate with 3 predictors, test with 2 (omitting x3)

+model = MCPower("y = x1 + x2 + x3")

+model.set_effects("x1=0.5, x2=0.3, x3=0.2")

+model.find_power(100, test_formula="y = x1 + x2")

+

+# Generate with clusters, test without (ignoring clustering)

+model = MCPower("y ~ treatment + (1|school)")

+model.set_cluster("school", ICC=0.2, n_clusters=20)

+model.set_effects("treatment=0.5")

+model.find_power(1000, test_formula="y ~ treatment")

+```

+

+See the [Test Formula Tutorial](https://github.com/pawlenartowicz/MCPower/wiki/Tutorial-Test-Formula) for details.

+

### Formula Syntax

```python

# These are equivalent:

-"y = x1 + x2 + x1*x2" # Assignment style

-"y ~ x1 + x2 + x1*x2" # R-style formula

-"x1 + x2 + x1*x2" # Predictors only

+"y = x1 + x2 + x1:x2" # Assignment style

+"y ~ x1 + x2 + x1:x2" # R-style formula

+"x1 + x2 + x1:x2" # Predictors only

# Interactions:

"x1*x2" # Main effects + interaction (x1 + x2 + x1:x2)

@@ -538,9 +562,8 @@ model.set_correlations("(x1, x2)=0.3, (x1, x3)=-0.2")

## Requirements

- Python ≥ 3.10

-- NumPy, matplotlib, joblib

+- NumPy (≥1.26.0), matplotlib, joblib, tqdm

- pandas (optional, for DataFrame input — install with `pip install mcpower[pandas]`)

-- statsmodels (optional, for mixed-effects models — install with `pip install mcpower[all]`)

## Documentation

@@ -549,11 +572,11 @@ Full documentation is available on the **[MCPower Wiki](https://github.com/pawle

- [Quick Start](https://github.com/pawlenartowicz/MCPower/wiki/Quick-Start)

- [Model Specification](https://github.com/pawlenartowicz/MCPower/wiki/Model-Specification)

-- [Variable Types](https://github.com/pawlenartowicz/MCPower/wiki/Variable-Types)

-- [Effect Sizes](https://github.com/pawlenartowicz/MCPower/wiki/Effect-Sizes)

-- [Mixed-Effects Models](https://github.com/pawlenartowicz/MCPower/wiki/Mixed-Effects-Models) (random intercepts, slopes, nested effects)

-- [ANOVA & Post-Hoc Tests](https://github.com/pawlenartowicz/MCPower/wiki/ANOVA-and-Post-Hoc-Tests)

-- [Scenario Analysis](https://github.com/pawlenartowicz/MCPower/wiki/Scenario-Analysis)

+- [Variable Types](https://github.com/pawlenartowicz/MCPower/wiki/Concept-Variable-Types)

+- [Effect Sizes](https://github.com/pawlenartowicz/MCPower/wiki/Concept-Effect-Sizes)

+- [Mixed-Effects Models](https://github.com/pawlenartowicz/MCPower/wiki/Concept-Mixed-Effects) (random intercepts, slopes, nested effects)

+- [ANOVA & Post-Hoc Tests](https://github.com/pawlenartowicz/MCPower/wiki/Tutorial-ANOVA-PostHoc)

+- [Scenario Analysis](https://github.com/pawlenartowicz/MCPower/wiki/Concept-Scenario-Analysis)

- [API Reference](https://github.com/pawlenartowicz/MCPower/wiki/API-Reference)

## Need Help?

@@ -568,8 +591,8 @@ Full documentation is available on the **[MCPower Wiki](https://github.com/pawle

- ✅ C++ native backend (pybind11 + Eigen, 3x speedup)

- ✅ Mixed Effects Models (random intercepts, random slopes, nested effects) — [validated against lme4](https://github.com/pawlenartowicz/MCPower/wiki/Concept-LME-Validation)

- 🚧 Logistic Regression (coming soon)

-- 🚧 ANOVA (coming soon)

-- 🚧 Guide about methods, corrections (coming soon)

+- ✅ ANOVA (factor variables as ANOVA, post-hoc pairwise comparisons)

+- ✅ Guide about methods, corrections

- 📋 2 groups comparison with alternative tests

- 📋 Robust regression methods

@@ -578,16 +601,18 @@ Full documentation is available on the **[MCPower Wiki](https://github.com/pawle

GPL v3. If you use MCPower in research, please cite:

-Lenartowicz, P. (2025). MCPower: Monte Carlo Power Analysis for Statistical Models. Zenodo. DOI: 10.5281/zenodo.16502734

+Lenartowicz, P. (2025). MCPower: Monte Carlo Power Analysis for Complex Statistical Models (Version ) [Computer software]. Zenodo. https://doi.org/10.5281/zenodo.16502734

+

+*Replace `` with the version you used — check with `import mcpower; print(mcpower.__version__)`.*

```bibtex

@software{mcpower2025,

- author = {Pawel Lenartowicz},

- title = {MCPower: Monte Carlo Power Analysis for Statistical Models},

- year = {2025},

+ author = {Lenartowicz, Pawe{\l}},

+ title = {{MCPower}: Monte Carlo Power Analysis for Complex Statistical Models},

+ year = {2025},

publisher = {Zenodo},

- doi = {10.5281/zenodo.16502734},

- url = {https://doi.org/10.5281/zenodo.16502734}

+ doi = {10.5281/zenodo.16502734},

+ url = {https://doi.org/10.5281/zenodo.16502734}

}

```

diff --git a/cpp/src/bindings.cpp b/cpp/src/bindings.cpp

index 26fee22..8c02998 100644

--- a/cpp/src/bindings.cpp

+++ b/cpp/src/bindings.cpp

@@ -110,7 +110,9 @@ py::array_t generate_y_wrapper(

py::array_t effects,

double heterogeneity,

double heteroskedasticity,

- int seed

+ int seed,

+ int residual_dist,

+ double residual_df

) {

auto X_buf = X.request();

auto effects_buf = effects.request();

@@ -129,7 +131,8 @@ py::array_t generate_y_wrapper(

);

Eigen::VectorXd y = generate_y(

- X_map, effects_map, heterogeneity, heteroskedasticity, seed

+ X_map, effects_map, heterogeneity, heteroskedasticity, seed,

+ residual_dist, residual_df

);

py::array_t result(n);

@@ -447,7 +450,9 @@ PYBIND11_MODULE(mcpower_native, m) {

py::arg("heterogeneity") = 0.0,

py::arg("heteroskedasticity") = 0.0,

py::arg("seed") = -1,

- "Generate dependent variable with heterogeneity and heteroskedasticity"

+ py::arg("residual_dist") = 0,

+ py::arg("residual_df") = 10.0,

+ "Generate dependent variable with heterogeneity, heteroskedasticity, and non-normal residuals"

);

// LME analysis (q=1 random intercept)

diff --git a/cpp/src/ols.cpp b/cpp/src/ols.cpp

index 7d04ec2..11bd62f 100644

--- a/cpp/src/ols.cpp

+++ b/cpp/src/ols.cpp

@@ -151,19 +151,14 @@ Eigen::VectorXd generate_y(

const Eigen::Ref& effects,

double heterogeneity,

double heteroskedasticity,

- int seed

+ int seed,

+ int residual_dist,

+ double residual_df

) {

const int n = static_cast(X.rows());

const int p = static_cast(X.cols());

- // Set up random generator

std::mt19937 gen;

- if (seed >= 0) {

- gen.seed(static_cast(seed));

- } else {

- std::random_device rd;

- gen.seed(rd());

- }

std::normal_distribution normal(0.0, 1.0);

// Linear predictor with heterogeneity

@@ -176,9 +171,12 @@ Eigen::VectorXd generate_y(

// Heterogeneity: vary effect sizes per observation

linear_pred.setZero();

- // Change seed for heterogeneity noise

+ // Seed at offset +1 for heterogeneity noise

if (seed >= 0) {

gen.seed(static_cast(seed + 1));

+ } else {

+ std::random_device rd;

+ gen.seed(rd());

}

for (int j = 0; j < p; ++j) {

@@ -192,14 +190,43 @@ Eigen::VectorXd generate_y(

}

}

- // Generate errors

+ // Generate errors — seed at offset +2

if (seed >= 0) {

gen.seed(static_cast(seed + 2));

+ } else {

+ std::random_device rd;

+ gen.seed(rd());

}

Eigen::VectorXd error(n);

- for (int i = 0; i < n; ++i) {

- error(i) = normal(gen);

+

+ if (residual_dist == 1) {

+ // Heavy-tailed: Student's t distribution

+ double df = std::max(residual_df, 3.0);

+ std::student_t_distribution t_dist(df);

+ double theoretical_scale = 1.0 / std::sqrt(df / (df - 2.0));

+ for (int i = 0; i < n; ++i) {

+ error(i) = t_dist(gen) * theoretical_scale;

+ }

+ } else if (residual_dist == 2) {

+ // Skewed: chi-squared, centered and scaled

+ double df = std::max(residual_df, 3.0);

+ std::chi_squared_distribution chi2_dist(df);

+ double scale = 1.0 / std::sqrt(2.0 * df);

+ for (int i = 0; i < n; ++i) {

+ error(i) = (chi2_dist(gen) - df) * scale;

+ }

+ } else {

+ // Normal (default)

+ for (int i = 0; i < n; ++i) {

+ error(i) = normal(gen);

+ }

+ }

+

+ // Empirical re-standardization to SD = 1

+ double empirical_sd = std::sqrt(error.array().square().mean());

+ if (empirical_sd > FLOAT_NEAR_ZERO) {

+ error /= empirical_sd;

}

// Apply heteroskedasticity

diff --git a/cpp/src/ols.hpp b/cpp/src/ols.hpp

index ad1f9b6..9e046eb 100644

--- a/cpp/src/ols.hpp

+++ b/cpp/src/ols.hpp

@@ -65,6 +65,8 @@ class OLSAnalyzer {

* @param heterogeneity SD of effect size variation

* @param heteroskedasticity Correlation between predictor and error variance

* @param seed Random seed (-1 for random)

+ * @param residual_dist Error distribution: 0=normal, 1=heavy_tailed (t), 2=skewed (chi2)

+ * @param residual_df Degrees of freedom for non-normal residuals (min clamped to 3)

* @return Response vector (n_samples,)

*/

Eigen::VectorXd generate_y(

@@ -72,7 +74,9 @@ Eigen::VectorXd generate_y(

const Eigen::Ref& effects,

double heterogeneity,

double heteroskedasticity,

- int seed

+ int seed,

+ int residual_dist = 0,

+ double residual_df = 10.0

);

} // namespace mcpower

diff --git a/docs/screenshots/gui-model-setup.png b/docs/screenshots/gui-model-setup.png

new file mode 100644

index 0000000..7f87a53

Binary files /dev/null and b/docs/screenshots/gui-model-setup.png differ

diff --git a/docs/screenshots/gui-results.png b/docs/screenshots/gui-results.png

new file mode 100644

index 0000000..f84152d

Binary files /dev/null and b/docs/screenshots/gui-results.png differ

diff --git a/mcpower/__init__.py b/mcpower/__init__.py

index a52560c..675a4f3 100644

--- a/mcpower/__init__.py

+++ b/mcpower/__init__.py

@@ -16,7 +16,6 @@

from importlib.metadata import version as _get_version

-from .backends import get_backend_info, set_backend

from .model import MCPower

from .progress import PrintReporter, ProgressReporter, SimulationCancelled, TqdmReporter

@@ -27,14 +26,14 @@

__all__ = [

"MCPower",

"SimulationCancelled",

- "set_backend",

- "get_backend_info",

"ProgressReporter",

"PrintReporter",

"TqdmReporter",

]

+import threading as _threading

+

from .utils.updates import _check_for_updates

-_check_for_updates(__version__)

+_threading.Thread(target=_check_for_updates, args=(__version__,), daemon=True).start()

diff --git a/mcpower/backends/__init__.py b/mcpower/backends/__init__.py

index 7bb03f8..8b24a73 100644

--- a/mcpower/backends/__init__.py

+++ b/mcpower/backends/__init__.py

@@ -3,11 +3,9 @@

This module provides a unified interface for compute backends.

The only supported backend is native C++ (compiled via pybind11).

-

-Users can override via set_backend('c++' | 'default') or pass a ComputeBackend instance.

"""

-from typing import Optional, Protocol, Union, runtime_checkable

+from typing import Optional, Protocol, runtime_checkable

import numpy as np

@@ -24,6 +22,7 @@ def ols_analysis(

f_crit: float,

t_crit: float,

correction_t_crits: np.ndarray,

+ # correction_method encoding: 0=none, 1=Bonferroni, 2=FDR (BH), 3=Holm

correction_method: int,

) -> np.ndarray:

"""Run OLS regression and return significance flags.

@@ -40,9 +39,15 @@ def generate_y(

heterogeneity: float,

heteroskedasticity: float,

seed: int,

+ residual_dist: int = 0,

+ residual_df: float = 10.0,

) -> np.ndarray:

"""Generate the dependent variable ``y = X @ effects + error``.

+ Args:

+ residual_dist: Error distribution (0=normal, 1=heavy_tailed, 2=skewed).

+ residual_df: Degrees of freedom for non-normal residuals.

+

Returns:

1-D array of length ``n_samples``.

"""

@@ -88,12 +93,8 @@ def lme_analysis(

...

-# Valid backend names for set_backend()

-_BACKEND_NAMES = {"default", "c++"}

-

# Global backend instance

_backend_instance: Optional[ComputeBackend] = None

-_backend_forced = False

def get_backend() -> ComputeBackend:

@@ -101,7 +102,7 @@ def get_backend() -> ComputeBackend:

Get the active compute backend.

On first call, instantiates the C++ native backend.

- Subsequent calls return the cached instance unless reset_backend() is called.

+ Subsequent calls return the cached instance.

Raises:

ImportError: If the C++ extension is not compiled/installed.

@@ -117,64 +118,7 @@ def get_backend() -> ComputeBackend:

return _backend_instance

-def set_backend(backend: Union[str, ComputeBackend]) -> None:

- """

- Set the compute backend.

-

- Args:

- backend: One of:

- - 'default' -- use native C++ backend

- - 'c++' -- force native C++ backend

- - A ComputeBackend instance

-

- Raises:

- ImportError: If the C++ backend is not available.

- ValueError: If the string is not recognized.

- """

- global _backend_instance, _backend_forced

-

- if isinstance(backend, str):

- name = backend.lower().strip()

- if name not in _BACKEND_NAMES:

- raise ValueError(f"Unknown backend {backend!r}. Choose from: {', '.join(sorted(_BACKEND_NAMES))}")

-

- from .native import NativeBackend

-

- _backend_instance = NativeBackend()

- _backend_forced = name != "default"

- else:

- _backend_instance = backend

- _backend_forced = True

-

-

-def reset_backend() -> None:

- """Reset backend to automatic selection."""

- global _backend_instance, _backend_forced

- _backend_instance = None

- _backend_forced = False

-

-

-def get_backend_info() -> dict:

- """

- Get information about the current backend.

-

- Returns:

- Dictionary with backend name, type, and whether it was forced.

- """

- backend = get_backend()

- name = type(backend).__name__

- return {

- "name": name,

- "is_native": name == "NativeBackend",

- "module": type(backend).__module__,

- "forced": _backend_forced,

- }

-

-

__all__ = [

"ComputeBackend",

"get_backend",

- "set_backend",

- "reset_backend",

- "get_backend_info",

]

diff --git a/mcpower/backends/native.py b/mcpower/backends/native.py

index acd7633..2338cfe 100644

--- a/mcpower/backends/native.py

+++ b/mcpower/backends/native.py

@@ -17,6 +17,11 @@

mcpower_native = None

+def _prep(arr: np.ndarray, dtype=np.float64) -> np.ndarray:

+ """Ensure array is contiguous with the expected dtype for C++ interop."""

+ return np.ascontiguousarray(arr, dtype=dtype)

+

+

class NativeBackend:

"""

C++ compute backend using pybind11 bindings.

@@ -46,8 +51,8 @@ def _initialize_tables(self) -> None:

t3_ppf = manager.load_t3_ppf_table()

# Ensure correct dtypes

- norm_cdf = np.ascontiguousarray(norm_cdf.astype(np.float64))

- t3_ppf = np.ascontiguousarray(t3_ppf.astype(np.float64))

+ norm_cdf = _prep(norm_cdf)

+ t3_ppf = _prep(t3_ppf)

# Initialize C++ tables (generation tables only)

mcpower_native.init_tables(norm_cdf, t3_ppf)

@@ -77,10 +82,10 @@ def ols_analysis(

Returns:

Array: [f_sig, uncorrected..., corrected...]

"""

- X = np.ascontiguousarray(X, dtype=np.float64)

- y = np.ascontiguousarray(y, dtype=np.float64)

- target_indices = np.ascontiguousarray(target_indices, dtype=np.int32)

- correction_t_crits = np.ascontiguousarray(correction_t_crits, dtype=np.float64)

+ X = _prep(X)

+ y = _prep(y)

+ target_indices = _prep(target_indices, np.int32)

+ correction_t_crits = _prep(correction_t_crits)

return mcpower_native.ols_analysis(X, y, target_indices, f_crit, t_crit, correction_t_crits, correction_method) # type: ignore[no-any-return]

@@ -91,6 +96,8 @@ def generate_y(

heterogeneity: float,

heteroskedasticity: float,

seed: int,

+ residual_dist: int = 0,

+ residual_df: float = 10.0,

) -> np.ndarray:

"""

Generate dependent variable.

@@ -101,14 +108,16 @@ def generate_y(

heterogeneity: Effect size variation SD

heteroskedasticity: Error-predictor correlation

seed: Random seed (-1 for random)

+ residual_dist: Error distribution (0=normal, 1=heavy_tailed, 2=skewed)

+ residual_df: Degrees of freedom for non-normal residuals

Returns:

Response vector (n_samples,)

"""

- X = np.ascontiguousarray(X, dtype=np.float64)

- effects = np.ascontiguousarray(effects, dtype=np.float64)

+ X = _prep(X)

+ effects = _prep(effects)

- return mcpower_native.generate_y(X, effects, heterogeneity, heteroskedasticity, seed) # type: ignore[no-any-return]

+ return mcpower_native.generate_y(X, effects, heterogeneity, heteroskedasticity, seed, residual_dist, residual_df) # type: ignore[no-any-return]

def generate_X(

self,

@@ -137,11 +146,11 @@ def generate_X(

Returns:

Design matrix (n_samples, n_vars)

"""

- correlation_matrix = np.ascontiguousarray(correlation_matrix, dtype=np.float64)

- var_types = np.ascontiguousarray(var_types, dtype=np.int32)

- var_params = np.ascontiguousarray(var_params, dtype=np.float64)

- upload_normal = np.ascontiguousarray(upload_normal, dtype=np.float64)

- upload_data = np.ascontiguousarray(upload_data, dtype=np.float64)

+ correlation_matrix = _prep(correlation_matrix)

+ var_types = _prep(var_types, np.int32)

+ var_params = _prep(var_params)

+ upload_normal = _prep(upload_normal)

+ upload_data = _prep(upload_data)

return mcpower_native.generate_X( # type: ignore[no-any-return]

n_samples,

@@ -185,12 +194,15 @@ def lme_analysis(

Returns:

Array: [f_sig, uncorrected..., corrected..., wald_flag]

or empty array on failure

+

+ wald_flag: 1.0 if the Wald test was used as fallback for the overall

+ significance test (instead of the likelihood ratio test), 0.0 otherwise.

"""

- X = np.ascontiguousarray(X, dtype=np.float64)

- y = np.ascontiguousarray(y, dtype=np.float64)

- cluster_ids = np.ascontiguousarray(cluster_ids, dtype=np.int32)

- target_indices = np.ascontiguousarray(target_indices, dtype=np.int32)

- correction_z_crits = np.ascontiguousarray(correction_z_crits, dtype=np.float64)

+ X = _prep(X)

+ y = _prep(y)

+ cluster_ids = _prep(cluster_ids, np.int32)

+ target_indices = _prep(target_indices, np.int32)

+ correction_z_crits = _prep(correction_z_crits)

return mcpower_native.lme_analysis( # type: ignore[no-any-return]

X,

@@ -240,14 +252,17 @@ def lme_analysis_general(

Returns:

Array: [f_sig, uncorrected..., corrected..., wald_flag]

or empty array on failure

+

+ wald_flag: 1.0 if the Wald test was used as fallback for the overall

+ significance test (instead of the likelihood ratio test), 0.0 otherwise.

"""

- X = np.ascontiguousarray(X, dtype=np.float64)

- y = np.ascontiguousarray(y, dtype=np.float64)

- Z = np.ascontiguousarray(Z, dtype=np.float64)

- cluster_ids = np.ascontiguousarray(cluster_ids, dtype=np.int32)

- target_indices = np.ascontiguousarray(target_indices, dtype=np.int32)

- correction_z_crits = np.ascontiguousarray(correction_z_crits, dtype=np.float64)

- warm_theta = np.ascontiguousarray(warm_theta, dtype=np.float64)

+ X = _prep(X)

+ y = _prep(y)

+ Z = _prep(Z)

+ cluster_ids = _prep(cluster_ids, np.int32)

+ target_indices = _prep(target_indices, np.int32)

+ correction_z_crits = _prep(correction_z_crits)

+ warm_theta = _prep(warm_theta)

return mcpower_native.lme_analysis_general( # type: ignore[no-any-return]

X,

@@ -301,15 +316,18 @@ def lme_analysis_nested(

Returns:

Array: [f_sig, uncorrected..., corrected..., wald_flag]

or empty array on failure

+

+ wald_flag: 1.0 if the Wald test was used as fallback for the overall

+ significance test (instead of the likelihood ratio test), 0.0 otherwise.

"""

- X = np.ascontiguousarray(X, dtype=np.float64)

- y = np.ascontiguousarray(y, dtype=np.float64)

- parent_ids = np.ascontiguousarray(parent_ids, dtype=np.int32)

- child_ids = np.ascontiguousarray(child_ids, dtype=np.int32)

- child_to_parent = np.ascontiguousarray(child_to_parent, dtype=np.int32)

- target_indices = np.ascontiguousarray(target_indices, dtype=np.int32)

- correction_z_crits = np.ascontiguousarray(correction_z_crits, dtype=np.float64)

- warm_theta = np.ascontiguousarray(warm_theta, dtype=np.float64)

+ X = _prep(X)

+ y = _prep(y)

+ parent_ids = _prep(parent_ids, np.int32)

+ child_ids = _prep(child_ids, np.int32)

+ child_to_parent = _prep(child_to_parent, np.int32)

+ target_indices = _prep(target_indices, np.int32)

+ correction_z_crits = _prep(correction_z_crits)

+ warm_theta = _prep(warm_theta)

return mcpower_native.lme_analysis_nested( # type: ignore[no-any-return]

X,

diff --git a/mcpower/core/results.py b/mcpower/core/results.py

index 45b178a..dfe6b77 100644

--- a/mcpower/core/results.py

+++ b/mcpower/core/results.py

@@ -54,6 +54,7 @@ def calculate_powers(

# Individual powers

individual_powers = {}

individual_powers_corrected = {}

+ non_overall_tests = [t for t in target_tests if t != "overall"]

for test in target_tests:

if test == "overall":

@@ -62,7 +63,6 @@ def calculate_powers(

individual_powers_corrected[test] = np.mean(results_corrected_array[:, 0]) * 100

else:

# Find position among non-'overall' tests and add 1 for F-test offset

- non_overall_tests = [t for t in target_tests if t != "overall"]

pos = non_overall_tests.index(test)

col_idx = pos + 1 # +1 because column 0 is F-test

individual_powers[test] = np.mean(results_array[:, col_idx]) * 100

diff --git a/mcpower/core/scenarios.py b/mcpower/core/scenarios.py

index 454f8e3..2d2dd01 100644

--- a/mcpower/core/scenarios.py

+++ b/mcpower/core/scenarios.py

@@ -13,35 +13,51 @@

from ..utils.visualization import _create_power_plot

# Default scenario configurations.

+# "optimistic" is the zero-perturbation baseline — also used as the default

+# scenario_config when scenarios=False and as a template for custom scenarios

+# (ensures all required keys exist).

# "realistic" introduces moderate assumption violations; "doomer" introduces

# severe violations. Each simulation iteration draws random perturbations

# from these parameters (correlation noise, distribution swaps, etc.).

DEFAULT_SCENARIO_CONFIG = {

+ "optimistic": {

+ "heterogeneity": 0.0,

+ "heteroskedasticity": 0.0,

+ "correlation_noise_sd": 0.0,

+ "distribution_change_prob": 0.0,

+ "new_distributions": ["right_skewed", "left_skewed", "uniform"],

+ # Mixed model perturbations (only consumed when cluster_specs present)

+ "random_effect_dist": "normal",

+ "random_effect_df": 5,

+ "icc_noise_sd": 0.0,

+ # Residual distribution perturbations (all model types)

+ "residual_dists": ["heavy_tailed", "skewed"],

+ "residual_change_prob": 0.0,

+ "residual_df": 10,

+ },

"realistic": {

"heterogeneity": 0.2,

- "heteroskedasticity": 0.1,

- "correlation_noise_sd": 0.2,

- "distribution_change_prob": 0.3,

+ "heteroskedasticity": 0.15,

+ "correlation_noise_sd": 0.15,

+ "distribution_change_prob": 0.5,

"new_distributions": ["right_skewed", "left_skewed", "uniform"],

- # LME-specific keys (only consumed when cluster_specs present)

"random_effect_dist": "heavy_tailed",

- "random_effect_df": 5,

+ "random_effect_df": 10,

"icc_noise_sd": 0.15,

- "residual_dist": "heavy_tailed",

- "residual_change_prob": 0.3,

- "residual_df": 10,

+ "residual_dists": ["heavy_tailed", "skewed"],

+ "residual_change_prob": 0.5,

+ "residual_df": 8,

},

"doomer": {

"heterogeneity": 0.4,

- "heteroskedasticity": 0.2,

- "correlation_noise_sd": 0.4,

- "distribution_change_prob": 0.6,

+ "heteroskedasticity": 0.35,

+ "correlation_noise_sd": 0.30,

+ "distribution_change_prob": 0.8,

"new_distributions": ["right_skewed", "left_skewed", "uniform"],

- # LME-specific keys (only consumed when cluster_specs present)

"random_effect_dist": "heavy_tailed",

- "random_effect_df": 3,

+ "random_effect_df": 5,

"icc_noise_sd": 0.30,

- "residual_dist": "heavy_tailed",

+ "residual_dists": ["heavy_tailed", "skewed"],

"residual_change_prob": 0.8,

"residual_df": 5,

},

@@ -111,15 +127,7 @@ def run_power_analysis(

if progress is not None:

progress.start()

- # Optimistic (user's original settings)

- results["optimistic"] = run_find_power_func(

- sample_size=sample_size,

- target_tests=target_tests,

- correction=correction,

- scenario_config=None,

- )

-

- # Realistic & Doomer scenarios

+ # Run all scenarios (optimistic is always present as zero-perturbation baseline)

for scenario_name, config in self.configs.items():

results[scenario_name] = run_find_power_func(

sample_size=sample_size,

@@ -175,15 +183,7 @@ def run_sample_size_analysis(

if progress is not None:

progress.start()

- # Optimistic

- results["optimistic"] = run_sample_size_func(

- sample_sizes=sample_sizes,

- target_tests=target_tests,

- correction=correction,

- scenario_config=None,

- )

-

- # Other scenarios

+ # Run all scenarios (optimistic is always present as zero-perturbation baseline)

for scenario_name, config in self.configs.items():

results[scenario_name] = run_sample_size_func(

sample_sizes=sample_sizes,

@@ -209,8 +209,9 @@ def run_sample_size_analysis(

def _create_scenario_plots(self, results: Dict) -> None:

"""Create visualizations for scenario analysis."""

scenarios = results["scenarios"]

- scenario_names = ["optimistic", "realistic", "doomer"]

- scenario_labels = ["Optimistic", "Realistic", "Doomer"]

+ # Derive scenario order from results: optimistic first, then config keys

+ scenario_names = ["optimistic"] + [k for k in scenarios if k != "optimistic"]

+ scenario_labels = [name.title() for name in scenario_names]

first_scenario = scenarios.get("optimistic", {})

if "results" not in first_scenario or "sample_sizes_tested" not in first_scenario["results"]:

@@ -286,7 +287,7 @@ def apply_lme_perturbations(

if icc_noise_sd == 0.0 and re_dist == "normal":

return None

- rng = np.random.RandomState(sim_seed + 5000 if sim_seed is not None else None)

+ rng = np.random.RandomState(sim_seed + 6 if sim_seed is not None else None)

# ICC jitter: multiplicative noise on tau_squared per grouping variable

tau_squared_multipliers: Dict[str, float] = {}

@@ -304,70 +305,6 @@ def apply_lme_perturbations(

}

-def apply_lme_residual_perturbations(

- y: np.ndarray,

- scenario_config: Dict,

- sim_seed: Optional[int],

-) -> np.ndarray:

- """Replace normal residuals with non-normal if coin flip succeeds.

-

- For each simulation, independently flips a coin (probability

- ``residual_change_prob``) to decide whether residuals are replaced.

- If activated, reproduces the original N(0,1) errors via the known

- seed, generates replacements from t(df) or shifted χ², and applies

- the correction ``y += (new_error - original_error)``.

-

- Args:

- y: Dependent variable array (modified in-place).

- scenario_config: Scenario parameters with residual keys.

- sim_seed: Random seed for reproducibility.

-

- Returns:

- The (possibly modified) dependent variable array.

- """

- residual_dist = scenario_config.get("residual_dist", "normal")

- residual_change_prob = scenario_config.get("residual_change_prob", 0.0)

- residual_df = scenario_config.get("residual_df", 10)

-

- if residual_dist == "normal" or residual_change_prob <= 0.0:

- return y

-

- rng = np.random.RandomState(sim_seed + 6000 if sim_seed is not None else None)

-

- # Coin flip: should this simulation have non-normal residuals?

- if rng.random() > residual_change_prob:

- return y

-

- n = len(y)

-

- # Reproduce the original N(0,1) errors using the same seed as generate_y

- # generate_y uses sim_seed + 2 for error generation

- original_rng = np.random.RandomState(sim_seed + 2 if sim_seed is not None else None)

- original_errors = original_rng.standard_normal(n)

-

- # Generate replacement errors

- replacement_rng = np.random.RandomState(sim_seed + 6001 if sim_seed is not None else None)

-

- if residual_dist == "heavy_tailed":

- # t(df) scaled to have variance 1

- df = max(residual_df, 3)

- raw = replacement_rng.standard_t(df, size=n)

- # t(df) has variance df/(df-2), scale to unit variance

- scale = 1.0 / np.sqrt(df / (df - 2))

- new_errors = raw * scale

- elif residual_dist == "skewed":

- # Shifted chi-squared: mean=0, variance=1

- df = max(residual_df, 3)

- raw = replacement_rng.chisquare(df, size=n)

- new_errors = (raw - df) / np.sqrt(2 * df)

- else:

- return y

-

- # Apply correction: swap out original errors for new ones

- y = y + (new_errors - original_errors)

- return y

-

-

def apply_per_simulation_perturbations(

correlation_matrix: np.ndarray,

var_types: np.ndarray,

@@ -393,19 +330,22 @@ def apply_per_simulation_perturbations(

if scenario_config is None:

return correlation_matrix, var_types

- rng = np.random.RandomState(sim_seed)

+ rng = np.random.RandomState(sim_seed + 5 if sim_seed is not None else None)

# Perturb correlation matrix

perturbed_corr = correlation_matrix

- if correlation_matrix is not None and scenario_config["correlation_noise_sd"] > 0:

+ if correlation_matrix is not None and scenario_config.get("correlation_noise_sd", 0) > 0:

perturbed_corr = correlation_matrix.copy()

noise = rng.normal(0, scenario_config["correlation_noise_sd"], correlation_matrix.shape)

noise = (noise + noise.T) / 2 # Keep symmetric

perturbed_corr += noise

+ # Clip off-diagonal correlations to [-0.8, 0.8] to prevent near-singular

+ # matrices that cause Cholesky decomposition failures in data generation.

perturbed_corr = np.clip(perturbed_corr, -0.8, 0.8)

np.fill_diagonal(perturbed_corr, 1.0)

- # Ensure positive semi-definiteness via eigenvalue clipping

+ # Nearest correlation matrix repair via spectral clipping: set negative

+ # eigenvalues to zero and reconstruct, then re-normalize to unit diagonal.

eigvals, eigvecs = np.linalg.eigh(perturbed_corr)

if np.any(eigvals < 0):

eigvals = np.maximum(eigvals, 0.0)

@@ -417,7 +357,7 @@ def apply_per_simulation_perturbations(

# Perturb variable types

perturbed_var_types = var_types.copy()

- if scenario_config["distribution_change_prob"] > 0:

+ if scenario_config.get("distribution_change_prob", 0) > 0:

type_mapping = {"right_skewed": 2, "left_skewed": 3, "uniform": 5}

new_type_codes = [type_mapping[distribution] for distribution in scenario_config["new_distributions"]]

diff --git a/mcpower/core/simulation.py b/mcpower/core/simulation.py

index 266a39e..2223324 100644

--- a/mcpower/core/simulation.py

+++ b/mcpower/core/simulation.py

@@ -61,7 +61,7 @@ def __init__(

Args:

n_simulations: Number of Monte Carlo iterations.

seed: Base random seed. Each iteration uses

- ``seed + 4 * sim_id``.

+ ``seed + 12 * sim_id``.

alpha: Significance level for hypothesis tests.

parallel: Parallel processing mode (unused inside the

runner itself; parallelism is handled at the

@@ -143,12 +143,19 @@ def run_power_simulations(

if metadata.cluster_specs:

from ..stats.lme_solver import compute_lme_critical_values

- n_fixed = len(metadata.target_indices)

- # n_fixed_effects = number of columns in X_expanded (excluding intercept)

- # This equals the total effect count minus cluster effects

- n_fixed_total = len(metadata.effect_sizes)

- if metadata.cluster_effect_indices:

- n_fixed_total -= len(metadata.cluster_effect_indices)

+ # Use test formula dimensions when subsetting with random effects

+ if metadata.test_column_indices is not None and metadata.test_has_random_effects:

+ if metadata.test_target_indices is None:

+ raise RuntimeError("test_target_indices must be set when test_column_indices is present")

+ n_fixed = len(metadata.test_target_indices)

+ n_fixed_total = metadata.test_effect_count

+ else:

+ n_fixed = len(metadata.target_indices)

+ # n_fixed_effects = number of columns in X_expanded (excluding intercept)

+ # This equals the total effect count minus cluster effects

+ n_fixed_total = len(metadata.effect_sizes)

+ if metadata.cluster_effect_indices:

+ n_fixed_total -= len(metadata.cluster_effect_indices)

chi2_crit, z_crit, correction_z_crits = compute_lme_critical_values(

self.alpha, n_fixed_total, n_fixed, metadata.correction_method

)

@@ -162,19 +169,18 @@ def run_power_simulations(

raise SimulationCancelled("Simulation cancelled by user")

- sim_seed = self.seed + 4 * sim_id if self.seed is not None else None

-

- # Apply perturbations if in scenario mode

- if scenario_config is not None and apply_perturbations_func is not None:

- perturbed_corr, perturbed_types = apply_perturbations_func(

- metadata.correlation_matrix,

- metadata.var_types,

- scenario_config,

- sim_seed,

- )

- else:

- perturbed_corr = metadata.correlation_matrix

- perturbed_types = metadata.var_types

+ sim_seed = self.seed + 12 * sim_id if self.seed is not None else None

+

+ # Apply per-simulation perturbations (correlation noise, distribution swaps)

+ # Zero-valued params in optimistic scenario are no-ops

+ if apply_perturbations_func is None:

+ raise RuntimeError("apply_perturbations_func must be provided")

+ perturbed_corr, perturbed_types = apply_perturbations_func(

+ metadata.correlation_matrix,

+ metadata.var_types,

+ scenario_config,

+ sim_seed,

+ )

result = self._single_simulation(

sim_id=sim_id,

@@ -326,7 +332,9 @@ def _single_simulation(

first_spec = next(iter(metadata.cluster_specs.values()))

sample_size = first_spec["n_clusters"] * first_spec["cluster_size"]

- # Check if strict mode with uploaded data

+ # Strict-mode bootstrap: resample whole rows from uploaded data to

+ # preserve exact inter-variable relationships, then generate y from

+ # the bootstrapped X. This bypasses the normal X-generation pipeline.

if metadata.preserve_correlation == "strict" and metadata.uploaded_raw_data is not None:

# Strict mode: bootstrap uploaded data + generate created variables separately

from ..stats.data_generation import bootstrap_uploaded_data

@@ -336,7 +344,7 @@ def _single_simulation(

sample_size,

metadata.uploaded_raw_data,

metadata.uploaded_var_metadata,

- sim_seed,

+ sim_seed + 3 if sim_seed is not None else None,

)

# Merge uploaded and created non-factor variables

@@ -367,7 +375,7 @@ def _single_simulation(

X_factors = X_uploaded_factors

else:

# Mixed: generate all factors, replace uploaded factor columns

- X_factors = _generate_factors(sample_size, metadata.factor_specs, sim_seed)

+ X_factors = _generate_factors(sample_size, metadata.factor_specs, sim_seed + 3 if sim_seed is not None else None)

# Overwrite uploaded factor dummy columns with bootstrapped data

if X_uploaded_factors.shape[1] > 0:

col_offset = 0

@@ -400,14 +408,14 @@ def _single_simulation(

X_non_factors = np.empty((sample_size, 0), dtype=float)

# Generate factor variables (as dummy variables)

- X_factors = _generate_factors(sample_size, metadata.factor_specs, sim_seed)

+ X_factors = _generate_factors(sample_size, metadata.factor_specs, sim_seed + 3 if sim_seed is not None else None)

# Compute LME perturbations (ICC jitter, non-normal RE dist)

lme_perturbations = None

- if metadata.cluster_specs and scenario_config is not None:

+ if metadata.cluster_specs:

from ..core.scenarios import apply_lme_perturbations

- lme_perturbations = apply_lme_perturbations(metadata.cluster_specs, scenario_config, sim_seed)

+ lme_perturbations = apply_lme_perturbations(metadata.cluster_specs, scenario_config or {}, sim_seed)

# Generate cluster random effects (independent of upload mode)

re_result = None # Phase 2: random effects result for slopes/nesting

@@ -448,6 +456,16 @@ def _single_simulation(

# Create extended design matrix with interactions (excludes cluster effects)

X_expanded = create_X_extended_func(X)

+ # Test formula column subsetting: use reduced design matrix for analysis

+ if metadata.test_column_indices is not None:

+ X_test = X_expanded[:, metadata.test_column_indices]

+ if metadata.test_target_indices is None:

+ raise RuntimeError("test_target_indices must be set when test_column_indices is present")

+ test_target_indices = metadata.test_target_indices

+ else:

+ X_test = X_expanded

+ test_target_indices = metadata.target_indices

+

# Split effect sizes: fixed effects vs cluster effects

# Use precomputed values (Phase 2 optimization)

if metadata.cluster_effect_indices:

@@ -457,6 +475,21 @@ def _single_simulation(

fixed_effect_sizes = metadata.fixed_effect_sizes_cached

cluster_effect_sizes = None

+ # Residual coin flip: decide whether this simulation uses non-normal errors

+ residual_dist = 0 # normal

+ residual_df = 10.0

+ residual_change_prob = scenario_config.get("residual_change_prob", 0.0) if scenario_config else 0.0

+ if residual_change_prob > 0:

+ if scenario_config is None:

+ raise RuntimeError("scenario_config must be provided when residual_change_prob > 0")

+ coin_rng = np.random.RandomState(sim_seed + 7 if sim_seed is not None else None)

+ if coin_rng.random() < residual_change_prob:

+ residual_dists = scenario_config.get("residual_dists", ["heavy_tailed", "skewed"])

+ picked = coin_rng.choice(residual_dists)

+ dist_map = {"heavy_tailed": 1, "skewed": 2}

+ residual_dist = dist_map.get(picked, 0)

+ residual_df = float(scenario_config.get("residual_df", 10))

+

# Generate dependent variable with fixed effects only

y = generate_y_func(

X_expanded=X_expanded,

@@ -464,6 +497,8 @@ def _single_simulation(

heterogeneity=metadata.heterogeneity,

heteroskedasticity=metadata.heteroskedasticity,

sim_seed=sim_seed,

+ residual_dist=residual_dist,

+ residual_df=residual_df,

)

# Add cluster random effects contribution

@@ -478,12 +513,6 @@ def _single_simulation(

if re_result is not None and not np.allclose(re_result.slope_contribution, 0):

y = y + re_result.slope_contribution

- # Apply LME residual perturbations (non-normal residuals)

- if metadata.cluster_specs and scenario_config is not None:

- from ..core.scenarios import apply_lme_residual_perturbations

-

- y = apply_lme_residual_perturbations(y, scenario_config, sim_seed)

-

# Determine cluster IDs for the solver

cluster_ids: Optional[np.ndarray]

if re_result is not None:

@@ -496,23 +525,25 @@ def _single_simulation(

cluster_ids = metadata.cluster_ids_template

# Route to correct analysis method

- if cluster_ids is not None:

+ # When test_formula specifies no random effects, use OLS even if generation has clusters

+ use_lme = cluster_ids is not None and not (metadata.test_column_indices is not None and not metadata.test_has_random_effects)

+ if use_lme:

# Mixed model path (LME)

from ..stats.mixed_models import _lme_analysis_wrapper

+ assert cluster_ids is not None # narrowed by use_lme guard above

lme_result = _lme_analysis_wrapper(

- X_expanded,

+ X_test,

y,

- metadata.target_indices,

+ test_target_indices,

cluster_ids,

- metadata.cluster_column_indices,

metadata.correction_method,

self.alpha,

backend="custom",

verbose=metadata.verbose,

- chi2_crit=getattr(metadata, "lme_chi2_crit", None),

- z_crit=getattr(metadata, "lme_z_crit", None),

- correction_z_crits=getattr(metadata, "lme_correction_z_crits", None),

+ chi2_crit=metadata.lme_chi2_crit,

+ z_crit=metadata.lme_z_crit,

+ correction_z_crits=metadata.lme_correction_z_crits,

re_result=re_result,

)

@@ -539,16 +570,20 @@ def _single_simulation(

else:

# Standard OLS path

results = analyze_func(

- X_expanded,

+ X_test,

y,

- metadata.target_indices,

+ test_target_indices,

self.alpha,

metadata.correction_method,

)

diagnostics = None

- # Extract results: [f_sig, uncorr..., corr..., (wald_flag)]

- n_targets = len(metadata.target_indices)

+ # Result array layout: [F_sig, uncorrected[n_targets], corrected[n_targets], wald_flag?]

+ # - F_sig (index 0): overall model F-test significance (1.0 or 0.0)

+ # - uncorrected[1..n]: per-target t-test significance without correction

+ # - corrected[n+1..2n]: per-target significance with multiple-comparison correction

+ # - wald_flag (optional, LME only): 1.0 if Wald test was used instead of LRT

+ n_targets = len(test_target_indices)

f_significant = bool(results[0])

uncorrected = results[1 : 1 + n_targets].astype(bool)

corrected = results[1 + n_targets : 1 + 2 * n_targets].astype(bool)

@@ -560,19 +595,19 @@ def _single_simulation(

wald_flag = bool(results[expected_len])

# Post-hoc pairwise contrasts (OLS path only)

- if metadata.posthoc_specs and cluster_ids is None:

+ if metadata.posthoc_specs and not use_lme:

from ..stats.ols import compute_posthoc_contrasts

ph_uncorr, ph_corr, regular_override = compute_posthoc_contrasts(

- X_expanded,

+ X_test,

y,

metadata.posthoc_specs,

metadata.posthoc_method,

metadata.posthoc_t_crit,

metadata.posthoc_tukey_crits,

- target_indices=metadata.target_indices,

+ target_indices=test_target_indices,

correction_method=metadata.correction_method,

- correction_t_crits_combined=getattr(metadata, "posthoc_correction_t_crits_combined", None),

+ correction_t_crits_combined=metadata.posthoc_correction_t_crits_combined,

)

# If FDR/Holm combined correction was applied, override regular corrected

@@ -645,7 +680,7 @@ class SimulationMetadata:

correction_method: Encoded multiple-comparison correction

(0=none, 1=Bonferroni, 2=BH, 3=Holm).

heterogeneity: SD of random effect-size multiplier.

- heteroskedasticity: Correlation between first predictor and error SD.

+ heteroskedasticity: Correlation between predicted values and error SD.

preserve_correlation: Upload correlation mode

(``"no"``/``"partial"``/``"strict"``).

uploaded_raw_data: Normalised raw data for strict-mode bootstrap.

@@ -728,6 +763,13 @@ def __init__(

self.posthoc_method: str = "t-test"

self.posthoc_tukey_crits: Dict[str, float] = {}

self.posthoc_t_crit: float = 0.0

+ self.posthoc_correction_t_crits_combined: Optional[np.ndarray] = None

+

+ # Test formula fields (for model misspecification testing)

+ self.test_column_indices: Optional[np.ndarray] = None

+ self.test_target_indices: Optional[np.ndarray] = None

+ self.test_effect_count: Optional[int] = None # p for critical value computation

+ self.test_has_random_effects: bool = False # Whether test formula has (1|group) etc.

def _compute_fixed_effect_variance(registry) -> float:

@@ -779,8 +821,13 @@ def _compute_fixed_effect_variance(registry) -> float:

factor_info = registry._factors[factor_name]

proportions = factor_info.get("proportions")

if proportions is not None:

- # level is 1-indexed; proportions list is 0-indexed

- p_k = proportions[level - 1]

+ level_labels = factor_info.get("level_labels")

+ if level_labels is not None:

+ # String level labels — look up position by label

+ p_k = proportions[level_labels.index(str(level))]

+ else:

+ # Integer levels are 1-indexed; proportions list is 0-indexed

+ p_k = proportions[level - 1]

else:

# Equal proportions (default)

n_levels = factor_info["n_levels"]

@@ -814,6 +861,7 @@ def prepare_metadata(

model,

target_tests: List[str],

correction: Optional[str] = None,

+ test_formula_effects: Optional[List[str]] = None,

) -> SimulationMetadata:

"""

Prepare simulation metadata from model state.

@@ -825,6 +873,9 @@ def prepare_metadata(

model: MCPowerModel instance

target_tests: List of effects to test

correction: Multiple comparison correction method

+ test_formula_effects: Optional list of effect names from a test

+ formula. When provided, the metadata will include column

+ indices for subsetting X_expanded to the test model.

Returns:

SimulationMetadata instance

@@ -960,8 +1011,6 @@ def prepare_metadata(

upload_data_values=model.upload_data_values if model.upload_data_values is not None else np.zeros((2, 2), dtype=np.float64),

effect_sizes=effect_sizes,

correction_method=correction_method,

- heterogeneity=model.heterogeneity,

- heteroskedasticity=model.heteroskedasticity,

preserve_correlation=model._preserve_correlation,

uploaded_raw_data=model._uploaded_raw_data,

uploaded_var_metadata=model._uploaded_var_metadata,

@@ -982,4 +1031,20 @@ def prepare_metadata(

metadata.posthoc_specs = model._posthoc_specs

metadata.posthoc_method = "tukey" if is_tukey_correction else "t-test"

+ # Test formula column subsetting

+ if test_formula_effects is not None:

+ from ..utils.test_formula_utils import _compute_test_column_indices, _remap_target_indices

+

+ # Get all non-cluster effect names in registry order

+ all_effect_names = [name for name in registry._effects if name not in registry.cluster_effect_names]

+

+ test_col_indices = _compute_test_column_indices(all_effect_names, test_formula_effects)

+ metadata.test_column_indices = test_col_indices

+ metadata.test_effect_count = len(test_col_indices)

+

+ # Remap target indices to X_test space

+ # Only remap targets that exist in the test formula

+ valid_targets = np.array([idx for idx in target_indices if idx in test_col_indices], dtype=np.int64)

+ metadata.test_target_indices = _remap_target_indices(valid_targets, test_col_indices)

+

return metadata

diff --git a/mcpower/core/variables.py b/mcpower/core/variables.py

index e311606..338d40b 100644

--- a/mcpower/core/variables.py

+++ b/mcpower/core/variables.py

@@ -367,76 +367,42 @@ def expand_factors(self) -> None:

level_labels = factor_info.get("level_labels")

reference_level = factor_info.get("reference_level", 1)

+ # Compute non-reference levels once

if level_labels is not None:

- # Named levels: skip the reference, create dummies for the rest

- non_ref_labels = [lb for lb in level_labels if lb != str(reference_level)]

- for label in non_ref_labels:

- dummy_name = f"{factor_name}[{label}]"

-

- # Create dummy predictor

- dummy_pred = PredictorVar(

- name=dummy_name,

- var_type="factor_dummy",

- is_dummy=True,

- factor_source=factor_name,

- factor_level=label,

- column_index=col_idx,

- level_labels=level_labels,

- )

- new_predictors[dummy_name] = dummy_pred

-

- # Create main effect for dummy

- dummy_eff = Effect(

- name=dummy_name,

- effect_type="main",

- var_names=[dummy_name],

- column_index=col_idx,

- factor_source=factor_name,

- factor_level=label,

- )

- new_effects[dummy_name] = dummy_eff

-

- # Store dummy mapping

- self._factor_dummies[dummy_name] = {

- "factor_name": factor_name,

- "level": label,

- }

-

- col_idx += 1

+ non_ref = [lb for lb in level_labels if lb != str(reference_level)]

else:

- # Original integer-indexed behavior

- for level in range(2, n_levels + 1):

- dummy_name = f"{factor_name}[{level}]"

-

- # Create dummy predictor

- dummy_pred = PredictorVar(

- name=dummy_name,

- var_type="factor_dummy",

- is_dummy=True,

- factor_source=factor_name,

- factor_level=level,

- column_index=col_idx,

- )

- new_predictors[dummy_name] = dummy_pred

-

- # Create main effect for dummy

- dummy_eff = Effect(

- name=dummy_name,

- effect_type="main",

- var_names=[dummy_name],

- column_index=col_idx,

- factor_source=factor_name,

- factor_level=level,

- )

- new_effects[dummy_name] = dummy_eff

+ non_ref = list(range(2, n_levels + 1))

+

+ for level in non_ref:

+ dummy_name = f"{factor_name}[{level}]"

+

+ dummy_pred = PredictorVar(

+ name=dummy_name,

+ var_type="factor_dummy",

+ is_dummy=True,

+ factor_source=factor_name,

+ factor_level=level,

+ column_index=col_idx,

+ level_labels=level_labels if level_labels is not None else None,

+ )

+ new_predictors[dummy_name] = dummy_pred

+

+ dummy_eff = Effect(

+ name=dummy_name,

+ effect_type="main",

+ var_names=[dummy_name],

+ column_index=col_idx,

+ factor_source=factor_name,

+ factor_level=level,

+ )

+ new_effects[dummy_name] = dummy_eff

- # Store dummy mapping

- self._factor_dummies[dummy_name] = {

- "factor_name": factor_name,

- "level": level,

- }

+ self._factor_dummies[dummy_name] = {

+ "factor_name": factor_name,

+ "level": level,

+ }

- col_idx += 1

+ col_idx += 1

# Handle interactions involving factors — Cartesian product of

# non-reference dummy levels across all factor components.

@@ -503,6 +469,13 @@ def get_effect_sizes(self) -> np.ndarray:

def get_var_types(self) -> np.ndarray:

"""Get variable types as numpy array (for data generation)."""

+ # Type codes: 0-5 are parametric distributions generated from scratch.

+ # 97/98/99 are sentinel codes for uploaded-data variables whose values

+ # come from bootstrapped/quantile-matched empirical data rather than

+ # parametric generation:

+ # 97 = uploaded_factor (factor from uploaded data)

+ # 98 = uploaded_binary (binary from uploaded data)

+ # 99 = uploaded_data (continuous from uploaded data)

type_mapping = {

"normal": 0,

"binary": 1,

@@ -717,28 +690,20 @@ def register_cluster(

def _reindex_predictors(self) -> None:

"""Reindex all predictors to maintain order: non_factor | cluster_effect | dummies."""

- col_idx = 0

+ non_factor = []

+ cluster = []

+ dummies = []

- # Non-factor predictors first

- for name in sorted(self._predictors.keys(), key=lambda x: self._predictors[x].column_index or 0):

- pred = self._predictors[name]

- if not pred.is_factor and not pred.is_dummy and pred.var_type != "cluster_effect":

- pred.column_index = col_idx

- col_idx += 1

-

- # Cluster effect predictors second

- for name in sorted(self._predictors.keys(), key=lambda x: self._predictors[x].column_index or 0):

- pred = self._predictors[name]

- if pred.var_type == "cluster_effect":

- pred.column_index = col_idx

- col_idx += 1

-

- # Factor dummies last

for name in sorted(self._predictors.keys(), key=lambda x: self._predictors[x].column_index or 0):

pred = self._predictors[name]

if pred.is_dummy:

- pred.column_index = col_idx

- col_idx += 1

+ dummies.append(pred)

+ elif pred.var_type == "cluster_effect":

+ cluster.append(pred)

+ elif not pred.is_factor:

+ non_factor.append(pred)

+

+ for col_idx, pred in enumerate(non_factor + cluster + dummies):

+ pred.column_index = col_idx

- # Update effect indices

self._update_effect_indices()

diff --git a/mcpower/model.py b/mcpower/model.py

index 4fa2c4b..9a5f813 100644

--- a/mcpower/model.py

+++ b/mcpower/model.py

@@ -123,10 +123,9 @@ def __init__(self, data_generation_formula: str):

self._pending_factor_levels: Optional[str] = None

self._pending_effects: Optional[str] = None

self._pending_correlations: Optional[Union[str, np.ndarray]] = None

- self._pending_heterogeneity: Optional[float] = None

- self._pending_heteroskedasticity: Optional[float] = None

self._pending_data: Optional[Dict[str, Any]] = None

self._pending_clusters: Dict[str, Dict] = {} # {grouping_var: {n_clusters, cluster_size, icc}}

+ self._effects_set: bool = False # True after set_effects() has been called

# Detect mixed model formula

if self._registry._random_effects_parsed:

@@ -134,8 +133,6 @@ def __init__(self, data_generation_formula: str):

# Applied state

self._applied = False

- self.heterogeneity = 0.0

- self.heteroskedasticity = 0.0

# Data storage

self.upload_normal_values: Optional[np.ndarray] = None

@@ -385,6 +382,7 @@ def set_effects(self, effects_string: str):

raise ValueError("effects_string cannot be empty")

self._pending_effects = effects_string

+ self._effects_set = True

self._applied = False

return self

@@ -432,13 +430,16 @@ def set_variable_type(self, variable_types_string: str):

- ``"normal"`` — standard normal (default).

- ``"binary"`` or ``"binary(p)"`` — Bernoulli with proportion *p*

(default 0.5).

- - ``"skewed"`` — heavy-tailed (t-distribution, df=3).

+ - ``"right_skewed"`` — positively skewed distribution.

+ - ``"left_skewed"`` — negatively skewed distribution.

+ - ``"high_kurtosis"`` — heavy-tailed (t-distribution, df=3).

+ - ``"uniform"`` — uniform distribution.

- ``"factor(k)"`` — categorical with *k* levels (creates *k-1*

dummy variables).

- ``"factor(k, p1, p2, ...)"`` — factor with custom level

proportions.

- Example: ``"x1=binary, x2=skewed, x3=factor(3)"``.

+ Example: ``"x1=binary, x2=right_skewed, x3=factor(3)"``.

Returns:

self: For method chaining.

@@ -479,62 +480,6 @@ def set_factor_levels(self, spec: str):

self._applied = False

return self

- def set_heterogeneity(self, heterogeneity: float):

- """Set heterogeneity (random variation) in effect sizes.

-

- When non-zero, each simulation draws a per-simulation effect-size

- multiplier from a normal distribution with mean 1 and the given

- standard deviation. This models uncertainty about the true effect

- size — for example, ``heterogeneity=0.1`` means effect sizes vary

- by roughly +/- 10% across simulations.

-

- This setting is deferred until ``apply()`` is called.

-

- Args:

- heterogeneity: Standard deviation of the random effect-size

- multiplier. Must be non-negative. Default is 0 (no variation).

-

- Returns:

- self: For method chaining.

-

- Raises:

- TypeError: If *heterogeneity* is not numeric.

- """

- if not isinstance(heterogeneity, (int, float)):

- raise TypeError("heterogeneity must be a number")

-

- self._pending_heterogeneity = float(heterogeneity)

- self._applied = False

- return self

-

- def set_heteroskedasticity(self, heteroskedasticity_correlation: float):

- """Set heteroskedasticity (non-constant error variance).

-

- Introduces a correlation between the first predictor's values and

- the error standard deviation, producing variance that increases (or

- decreases) with the predictor. This violates the homoskedasticity

- assumption and typically reduces power.

-

- This setting is deferred until ``apply()`` is called.

-

- Args:

- heteroskedasticity_correlation: Correlation between the first

- predictor and the error standard deviation, in the range

- [-1, 1]. Default is 0 (homoskedastic errors).

-

- Returns:

- self: For method chaining.

-

- Raises:

- TypeError: If the value is not numeric.

- """

- if not isinstance(heteroskedasticity_correlation, (int, float)):

- raise TypeError("heteroskedasticity_correlation must be a number")

-

- self._pending_heteroskedasticity = float(heteroskedasticity_correlation)

- self._applied = False

- return self

-

def set_cluster(

self,

grouping_var: str,

@@ -769,6 +714,7 @@ def upload_data(

"preserve_factor_level_names": preserve_factor_level_names,

}

self._applied = False

+ return self

def set_scenario_configs(self, configs_dict: Dict):

"""Set custom scenario configurations for robustness analysis.

@@ -786,7 +732,9 @@ def set_scenario_configs(self, configs_dict: Dict):

configs_dict: Mapping of scenario names to configuration dicts.

Each configuration may include keys such as

``"heterogeneity"``, ``"heteroskedasticity"``,

- ``"effect_size_jitter"``, and ``"distribution_jitter"``.

+ ``"correlation_noise_sd"``, and ``"distribution_change_prob"``.

+ See ``DEFAULT_SCENARIO_CONFIG`` in ``mcpower.core.scenarios``

+ for the full list of keys.

Returns:

self: For method chaining.

@@ -802,7 +750,8 @@ def set_scenario_configs(self, configs_dict: Dict):

if scenario in merged:

merged[scenario].update(config)

else:

- merged[scenario] = config

+ # New custom scenarios inherit all keys from optimistic baseline

+ merged[scenario] = {**DEFAULT_SCENARIO_CONFIG["optimistic"], **config}

self._scenario_configs = merged

print(f"Custom scenario configs set: {', '.join(configs_dict.keys())}")

@@ -812,7 +761,7 @@ def set_scenario_configs(self, configs_dict: Dict):

# Apply method (processes all pending settings)

# =========================================================================

- def apply(self):

+ def _apply(self):

"""

Apply all pending settings to the model.

@@ -857,16 +806,22 @@ def apply(self):

# 7. Apply correlations

self._apply_correlations(_parser)

- # 8. Apply heterogeneity/heteroskedasticity

- self._apply_heterogeneity()

-

- # 9. Validate model is ready

+ # 8. Validate model is ready

model_result = _validate_model_ready(self)

model_result.raise_if_invalid()

- # Invalidate effect plan cache when settings change (Phase 2 optimization)

+ # Invalidate the effect plan cache — apply() rebuilds the variable

+ # registry state, so any cached column mappings are now stale.

self._effect_plan_cache = None

+ # Clear pending state to prevent double-application

+ self._pending_variable_types = None

+ self._pending_factor_levels = None

+ self._pending_effects = None

+ self._pending_correlations = None

+ self._pending_data = None

+ self._pending_clusters = {}

+

self._applied = True

print("Model settings applied successfully")

return self

@@ -1024,6 +979,21 @@ def _apply_data(self):

# Extract matched data

matched_data = data[:, matched_indices]

+ # Reject NaN values early

+ try:

+ if np.isnan(matched_data.astype(np.float64)).any():

+ nan_cols = [

+ matched_columns[i] for i in range(matched_data.shape[1]) if np.isnan(matched_data[:, i].astype(np.float64)).any()

+ ]

+ raise ValueError(

+ f"Uploaded data contains NaN values in columns: {', '.join(nan_cols)}. "

+ f"Remove or impute missing values before uploading."

+ )

+ except (ValueError, TypeError):

+ # Object dtype columns (strings) can't be converted to float for NaN check.

+ # NaN check for numeric columns will happen after string encoding below.

+ pass

+

# Convert to float64 if object dtype (common with mixed-type DataFrames)

# String columns are encoded to integer indices; mapping is stored in string_col_indices

string_col_indices = {}

@@ -1178,11 +1148,7 @@ def _apply_data_normal_mode(self, data, columns, type_info, mode, data_types_ove

level_labels = info.get("level_labels")

# Determine reference from data_types tuple override

- reference_level = None

- if col in data_types_override:

- dt = data_types_override[col]

- if isinstance(dt, tuple) and len(dt) == 2:

- reference_level = str(dt[1])

+ reference_level = self._extract_reference_level(data_types_override, col)

# Calculate proportions for each level

proportions = []

@@ -1200,7 +1166,10 @@ def _apply_data_normal_mode(self, data, columns, type_info, mode, data_types_ove

else: # continuous

# Normalize: mean=0, sd=1

- normalized = (col_data - np.mean(col_data)) / np.std(col_data, ddof=1)

+ std = np.std(col_data, ddof=1)

+ if std < 1e-15:

+ raise ValueError(f"Column '{col}' has zero variance (constant value). Remove it from the model or check your data.")

+ normalized = (col_data - np.mean(col_data)) / std

# Create lookup tables (type 99)

normal_vals, uploaded_vals = create_uploaded_lookup_tables(normalized.reshape(-1, 1))

@@ -1324,11 +1293,7 @@ def _apply_data_strict_mode(self, data, columns, type_info, data_types_override=

level_labels = info.get("level_labels")

# Determine reference from data_types tuple override

- reference_level = None

- if col in data_types_override:

- dt = data_types_override[col]

- if isinstance(dt, tuple) and len(dt) == 2:

- reference_level = str(dt[1])

+ reference_level = self._extract_reference_level(data_types_override, col)

self._uploaded_var_metadata[col] = {

"type": "factor",

@@ -1355,7 +1320,10 @@ def _apply_data_strict_mode(self, data, columns, type_info, data_types_override=

continuous_cols.append(idx)

# Normalize

col_data = data[:, idx]

- normalized_data[:, idx] = (col_data - np.mean(col_data)) / np.std(col_data, ddof=1)

+ std = np.std(col_data, ddof=1)

+ if std < 1e-15:

+ raise ValueError(f"Column '{col}' has zero variance (constant value). Remove it from the model or check your data.")

+ normalized_data[:, idx] = (col_data - np.mean(col_data)) / std

self._uploaded_var_metadata[col] = {

"type": "continuous",

@@ -1481,22 +1449,6 @@ def _apply_correlations(self, _parser):

self._registry.set_correlation_matrix(correlations_input)

print("Correlation matrix set")

- def _apply_heterogeneity(self):

- """Validate and apply pending heterogeneity and heteroskedasticity settings."""

- if self._pending_heterogeneity is not None:

- if self._pending_heterogeneity < 0:

- raise ValueError("heterogeneity must be non-negative")

- self.heterogeneity = self._pending_heterogeneity

- if self.heterogeneity > 0:

- print(f"Heterogeneity: SD = {self.heterogeneity}")

-

- if self._pending_heteroskedasticity is not None:

- if not -1 <= self._pending_heteroskedasticity <= 1:

- raise ValueError("heteroskedasticity_correlation must be between -1 and 1")

- self.heteroskedasticity = self._pending_heteroskedasticity

- if abs(self.heteroskedasticity) > 1e-8:

- print(f"Heteroskedasticity: correlation = {self.heteroskedasticity}")

-

# =========================================================================