Conversation


I'm happy as long as @giulio-giunta is happy


Code review for the infra branch: br_cloud_switcher on the geek.zone/infra

Reviewer: Giulio Giunta (GG)

Start time: 8.30pm, 10th July

Items raised by GG:


I think the folder you created and named "Terraform" should have a more specific name to avoid confusion with the terraform folders inside the aws and azure folders.

I don't think there is a need to create an extra image for dependencies when you already have geekzone/infra... which, as you said, makes the CI pipeline faster.

Regarding the next code review, there is no need to have one reviewer + one observer + the owner: I had already reviewed your work when you raised the same PR in the web repo, but you never came back. Then you asked for my review again, so today I decided to have a meeting with you to show that there was no test backing your PR and to explain my points to you. However, if you don't like my suggestions, it doesn't necessarily have to be me reviewing your work!

@giulio-giunta:


Sorry for coming back after a while, but I've had a very busy week!

I think building our own executor in a Docker image, with all the dependencies needed for our CI/CD pipeline, is a great thing, as that makes the pipeline faster and gives us full control of what we run our code on. We can even scan that image with Snyk and make sure there are no vulnerabilities! Bala, it's true that using a Docker image was my suggestion in the first place, but you agreed it makes the pipeline faster. Why is having a faster pipeline not important to you anymore?

You said there was no public IP address assigned to the EC2 instance where the health checks for the k8s cluster are performed, but I see the opposite in line 139 in cloud-switcher/main.tf, and the SonarCloud check failed just because of that: https://sonarcloud.io/project/security_hotspots?id=GeekZoneHQ_infra&pullRequest=37&resolved=false&types=SECURITY_HOTSPOT

Finally, I don't take anything personally either, but I've been advised to reply to comments in a PR when it has been reviewed. I made 10 comments, but you replied to only 1. All the others were ignored: GeekZoneHQ/web#600

So, I think we don't need to have meetings with one reviewer + one observer + the owner if we can use the tools GitHub offers to collaborate in a simple and communicative way.


By the way, the pipeline succeeded, but I think to fully test the work we should see a new cluster being created when the health checks fail. Has it been created?


@giulio-giunta:

<<< Bala, it's true that using a docker image was my suggestion in the first place,

<<< You said there was no public IP address assigned to the ec2 instance where the health checks for the k8s

<<< Finally, I don't take anything personally either, but I've been advised to reply to comments in a PR when it

So, I think we don't need to have meetings with one reviewer + one observer + the owner if we can use the tools GitHub offers to collaborate in a simple and communicative way.

Yes. I need your help in checking whether the cluster has been spun up in Azure.


I haven't created a baked AMI, but if you want I can do that. Regarding the geekzone/infra image we need to clarify the following:

If you're still testing, maybe the PR should be marked as a "draft". For the other 9 unanswered comments you can check again here

I think this should show in the CircleCI UI. Btw, do you have access to Azure?


SonarCloud Quality Gate failed.


Description

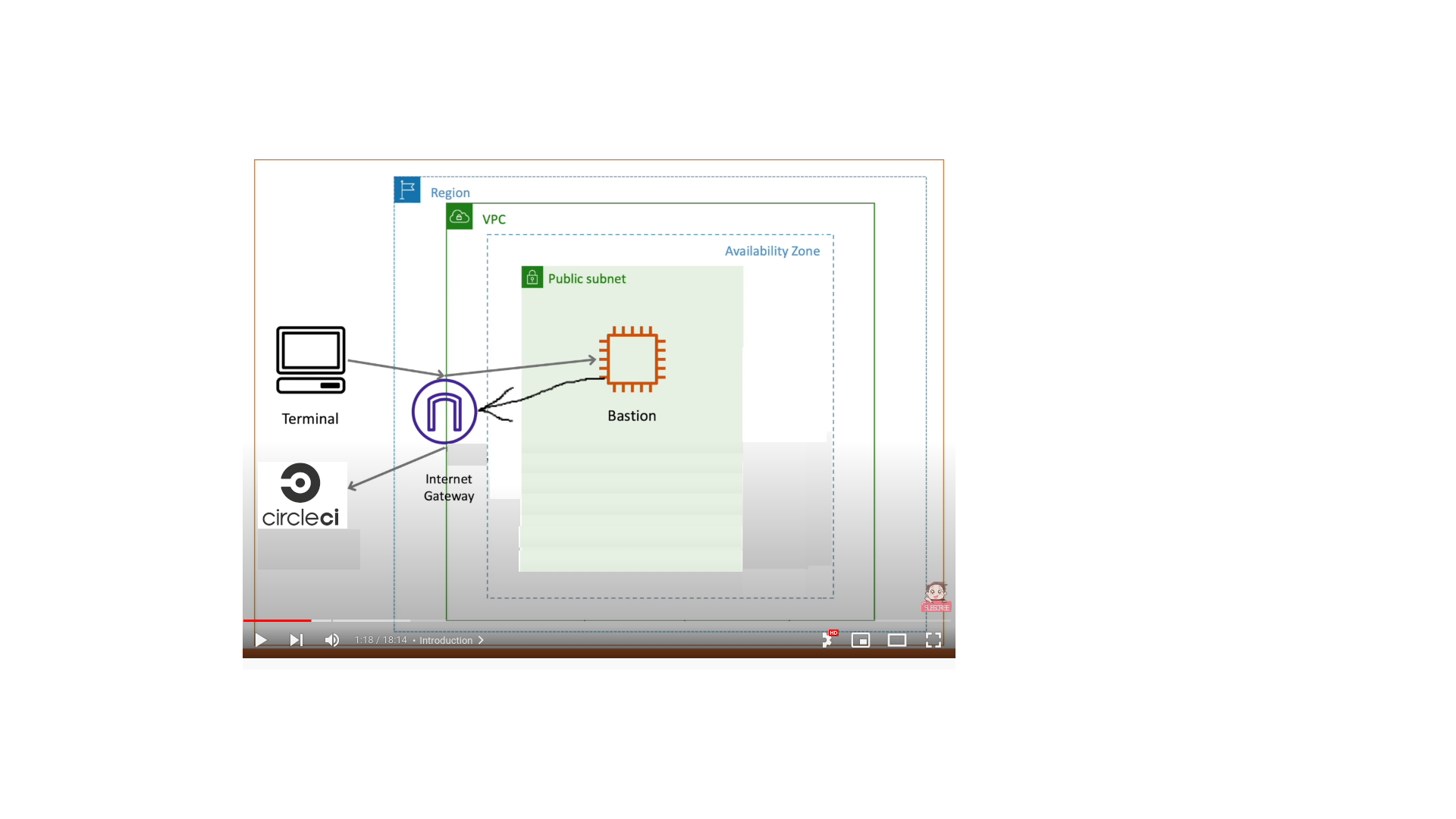

High availability of the GZ:

A health check instance is spun up in the AWS 'us-east-1' region and keeps an 'eye' on the GZ. When the site is down, it invokes the infra in Azure to keep the site running uninterrupted.
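To make the intended behaviour easier to review, here is a minimal sketch of the failover decision described above. It is not the code in this PR: the `watch` function, the `probe` and `trigger_failover` callbacks, and the threshold of three consecutive failures are all assumptions chosen for illustration. A real loop would also wait between probes.

```python
from typing import Callable


def watch(
    probe: Callable[[], bool],
    trigger_failover: Callable[[], None],
    max_checks: int,
    threshold: int = 3,  # assumed value, not taken from the PR
) -> bool:
    """Run up to `max_checks` health probes.

    Calls `trigger_failover` once `threshold` consecutive probes have
    failed and returns True; returns False if that never happens.
    """
    consecutive_failures = 0
    for _ in range(max_checks):
        if probe():
            # A healthy response resets the failure streak.
            consecutive_failures = 0
        else:
            consecutive_failures += 1
        if consecutive_failures >= threshold:
            # Hypothetical hook: in this setup it would start the Azure infra.
            trigger_failover()
            return True
    return False
```

Passing the probe and the failover action in as callbacks keeps the decision logic testable without touching AWS or Azure, which may help with the question of how to show that a new cluster is requested when the health checks fail.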

Related Issue

issue 594, in the web repo

issue 36, in the infra repo

Motivation and Context

Provide the much-needed high availability via orchestration of the multi-cloud setup.

How Has This Been Tested?

spun up the cluster and tested it

Types of changes

Checklist: