A minimal, end-to-end workflow for learning a continuous latent space of 2D tower shapes using an auto-decoder and Signed Distance Fields (SDFs). Train in a Jupyter notebook, then explore/interpolate interactively with PathSelect.py.

    LatentSDF/
    ├── AutoDecoder.ipynb       # Train & export the model
    ├── PathSelect.py           # Interactive latent-space explorer
    ├── models/                 # Exported artifacts (created after training)
    │   ├── decoder_model.h5
    │   ├── latent_codes.npy
    │   ├── coords_flat.npy
    │   └── data.json
    ├── output/                 # Generated SDF sequences (created at runtime)
    ├── environment.yaml        # Conda environment (provided)
    └── README.md

    conda env create -f environment.yaml
    conda activate tf-cpu

If your environment has a different name in environment.yaml, activate that instead.

Open AutoDecoder.ipynb and run all cells to:

- learn latent codes and the decoder jointly,

- visualize SDF reconstruction and interpolation,

- export the model files to models/.

After exporting, run:

    python PathSelect.py

Controls:

- Left Click: add path points in latent space

- Right Click: remove last point

- Hover: preview SDF at cursor

- Space: generate SDF sequence

- S: save sequence to output/

- C: clear path

- H: toggle help

Each training sample has its own latent vector that is optimized together with the decoder weights.
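To make the indexing concrete, here is a dependency-free sketch of how flat training samples could be laid out so that every grid point of shape i carries the index of latent vector i. It is an illustration under stated assumptions, not the notebook's data pipeline: a rectangle stands in for a tower, the SDF is taken to be negative inside the shape, and the names `box_sdf`, `build_samples`, and the 8×8 grid are hypothetical.

```python
import math

def box_sdf(x, y, half_w, half_h):
    """Signed distance from (x, y) to an axis-aligned box centred at the origin.

    Assumed convention: negative inside, zero on the boundary, positive outside.
    """
    dx = abs(x) - half_w
    dy = abs(y) - half_h
    outside = math.hypot(max(dx, 0.0), max(dy, 0.0))  # distance when outside the box
    inside = min(max(dx, dy), 0.0)                    # negative depth when inside
    return outside + inside

def build_samples(shapes, resolution=8):
    """Return (shape_index, x, y, sdf) tuples on a regular grid over [-1, 1]^2."""
    step = 2.0 / (resolution - 1)
    samples = []
    for shape_index, (half_w, half_h) in enumerate(shapes):
        for row in range(resolution):
            for col in range(resolution):
                x = -1.0 + col * step
                y = -1.0 + row * step
                samples.append((shape_index, x, y, box_sdf(x, y, half_w, half_h)))
    return samples

shapes = [(0.2, 0.8), (0.4, 0.5)]  # hypothetical (half_width, half_height) pairs
samples = build_samples(shapes)
```

Under this layout, the first element of each tuple is what selects the latent code for a sample, and the remaining three supply the coordinate and the regression target.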

    import tensorflow as tf

    # num_shapes must match your dataset
    LATENT_DIM = 2                      # 2D latent space for visualization
    COORD_DIM = 2                       # (x, y)
    INPUT_DIM = LATENT_DIM + COORD_DIM
    NUM_SHAPES = num_shapes

    # Example initialization (Keras/TF)
    latent_codes = tf.Variable(
        tf.random.normal([NUM_SHAPES, LATENT_DIM]), name="latent_codes"
    )

The MLP maps the concatenated input [latent_code(2), x, y] → SDF value.

    from tensorflow import keras
    from tensorflow.keras import layers

    # Auto-decoder hyperparameters
    LATENT_DIM = 2                      # 2D latent space for visualization
    COORD_DIM = 2                       # 2D spatial coordinates (x, y)
    INPUT_DIM = LATENT_DIM + COORD_DIM  # Combined input size
    NUM_SHAPES = num_shapes

    def create_decoder():
        """
        Decoder Network Architecture
        Input: [latent_code (2D) + coordinate (2D)] = 4D vector
        Output: SDF value at that coordinate
        The network learns to decode latent representations into geometry
        """
        decoder = keras.Sequential([
            layers.Input(shape=(INPUT_DIM,)),
            # Deep network to capture complex shape relationships
            layers.Dense(128, activation='relu'),
            layers.Dense(128, activation='relu'),
            layers.Dense(128, activation='relu'),
            layers.Dense(128, activation='relu'),
            layers.Dense(128, activation='relu'),
            layers.Dense(128, activation='relu'),
            # Output single SDF value
            layers.Dense(1, activation='linear')
        ])
        return decoder

    # Create decoder network
    decoder = create_decoder()
    print("Decoder Architecture:")
    decoder.summary()

You optimize both decoder weights and latent_codes to fit ground-truth SDF samples.

    optimizer = tf.keras.optimizers.Adam(1e-3)

    @tf.function
    def train_step(batch_coords, batch_sdf, batch_indices):
        # batch_sdf must have shape [B, 1] to match the decoder output
        with tf.GradientTape() as tape:
            # batch_indices selects the latent code for each sample
            z = tf.gather(latent_codes, batch_indices)          # [B, LATENT_DIM]
            x = tf.concat([z, batch_coords], axis=-1)           # [B, LATENT_DIM+2]
            pred = decoder(x)                                   # [B, 1]
            loss = tf.reduce_mean(tf.square(pred - batch_sdf))  # L2 SDF loss
            # Optional: regularize latent norms to keep space well-behaved
            loss += 1e-4 * tf.reduce_mean(tf.square(z))
        # Compute and apply gradients for both decoder and latent codes
        variables = decoder.trainable_variables + [latent_codes]
        grads = tape.gradient(loss, variables)
        optimizer.apply_gradients(zip(grads, variables))
        return loss

Implement your own batching in the notebook; the key idea is that the forward pass runs inside the gradient tape, so gradients flow to both the decoder weights and the selected latent codes.
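As a starting point for the batching, the following is a minimal sketch of a shuffled index generator. The function name `iterate_minibatches` and its parameters are hypothetical, and the notebook may batch its data differently (for example with `tf.data`).

```python
import random

def iterate_minibatches(num_samples, batch_size, seed=0):
    """Yield lists of sample indices that cover one epoch in shuffled order."""
    order = list(range(num_samples))
    random.Random(seed).shuffle(order)  # fixed seed keeps the epoch reproducible
    for start in range(0, num_samples, batch_size):
        # The final batch may be smaller than batch_size
        yield order[start:start + batch_size]

batches = list(iterate_minibatches(num_samples=10, batch_size=4))
```

Each index list would then be used to look up the coordinates, SDF targets, and shape indices that a step such as train_step expects; changing the seed per epoch gives a different shuffle each time.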

Figure 2: Training Epoch 1/500.

Figure 3: Training Epoch 500/500.

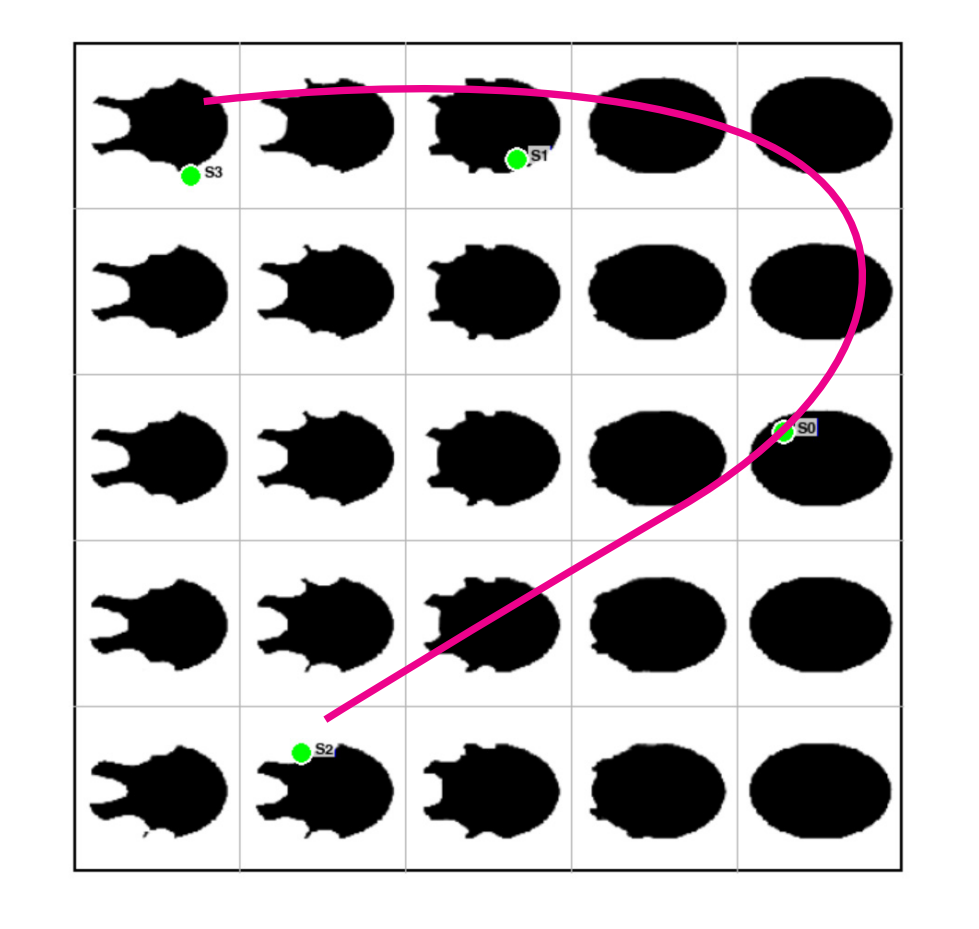

Figure 4: Training Result. Tower shape variations across latent space

Linearly mix latent codes to morph shapes; PathSelect.py lets you draw a path and export frames.

    def lerp(z0, z1, t):
        return (1.0 - t) * z0 + t * z1

Experiment with depth/width or activations to trade off smoothness vs. detail.

    # Change widths
    W = 256
    decoder = keras.Sequential([
        layers.Input(shape=(INPUT_DIM,)),
        layers.Dense(W, activation='relu'),
        layers.Dense(W, activation='relu'),
        layers.Dense(W, activation='relu'),
        layers.Dense(1)
    ])

    # Swap activations (e.g., 'tanh' for smoother fields)
    # layers.Dense(128, activation='tanh')

    # Add normalization / dropout (optional)
    # layers.LayerNormalization(), layers.Dropout(0.1)

By drawing a path in latent space, you morph between shapes and export sequences.
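One way a drawn path could be turned into a frame sequence is to resample the polyline at equal arc-length steps and interpolate linearly within each segment. The sketch below is an assumption about how this might be done, not the code in PathSelect.py; `sample_path` and the example points are hypothetical, and latent codes are plain 2D tuples to keep it dependency-free.

```python
import math

def lerp(z0, z1, t):
    """Linear interpolation between two latent points given as tuples."""
    return tuple((1.0 - t) * a + t * b for a, b in zip(z0, z1))

def sample_path(points, num_frames):
    """Return num_frames latent codes evenly spaced by arc length along a polyline."""
    if len(points) < 2 or num_frames < 2:
        raise ValueError("need at least two path points and two frames")
    seg_lengths = [math.dist(p, q) for p, q in zip(points, points[1:])]
    total = sum(seg_lengths)
    if total == 0.0:
        raise ValueError("path has zero length")
    frames = []
    for i in range(num_frames):
        target = total * i / (num_frames - 1)  # distance travelled along the path
        seg = 0
        # Walk forward to the segment that contains the target distance
        while seg < len(seg_lengths) - 1 and target > seg_lengths[seg]:
            target -= seg_lengths[seg]
            seg += 1
        t = target / seg_lengths[seg] if seg_lengths[seg] > 0 else 0.0
        frames.append(lerp(points[seg], points[seg + 1], min(t, 1.0)))
    return frames

path = [(0.0, 0.0), (1.0, 0.0), (1.0, 1.0)]  # hypothetical clicked points
frames = sample_path(path, num_frames=5)
```

Each resulting latent code would be concatenated with every grid coordinate and passed through the decoder to produce one SDF frame. Spacing by arc length keeps the morph speed roughly constant even when the clicked points are unevenly spread.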

Figure 5: Exporting .json by drawing a blend path.

Needed in models/: decoder_model.h5, latent_codes.npy, coords_flat.npy, data.json

- TensorFlow/Keras version mismatches may break h5 loads. Re-exporting usually fixes it.

- Ensure all four model files are present before launching PathSelect.py.
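A quick pre-flight check for the four files can be sketched with the standard library alone. The helper name `missing_model_files` is hypothetical, and the default `models` path assumes the script is run from the repository root.

```python
from pathlib import Path

REQUIRED_FILES = ("decoder_model.h5", "latent_codes.npy", "coords_flat.npy", "data.json")

def missing_model_files(models_dir="models"):
    """Return the required artifact names that are not present in models_dir."""
    root = Path(models_dir)
    return [name for name in REQUIRED_FILES if not (root / name).is_file()]

if __name__ == "__main__":
    missing = missing_model_files()
    if missing:
        print("Missing from models/:", ", ".join(missing))
    else:
        print("All model files found.")
```

If anything is reported missing, re-running the export cells in AutoDecoder.ipynb should recreate it.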

Open source for education and research.