This is my fork of PhysTwin, extended with a full data collection and processing pipeline for bimanual cloth manipulation using my custom SO-101 robot platform (the Sew Unit).

The goal: fit PhysTwin's particle-based cloth simulator to real fabric by running it on data I collected myself, rather than the original benchmark data.

PhysTwin was originally built around a different robot and data format. I wrote the full pipeline to get it working with my setup:

- `data_process/so101_to_phystwin.py`: converts raw RGB-D recordings from the Sew Unit's 4-camera setup into the particle track format PhysTwin trains on

- `data_process/data_process_sample.py` / `data_process_track.py`: updated to handle SO-101 gripper geometry and the ChArUco-calibrated multi-camera rig

- `regen_controller_masks.py`: regenerates controller masks (gripper segmentation); HSV thresholding failed under changing lab lighting, so it was replaced with CoTracker 3 for robustness

- `export_gaussian_data_so101.py`: exports Gaussian splatting training data in the format expected by the downstream PGND pipeline

- `export_ply.py`: exports point cloud PLY files for external visualization and validation

- `pgnd_to_phystwin.py`: converts PGND episode format back into PhysTwin format for cross-pipeline evaluation

- `train_phystwin_cloth.sh`: training script configured for cloth trajectories on the SO-101 setup
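To give a feel for the first and fifth steps above, here is a minimal sketch of turning one depth image into camera-frame 3D points and writing them as an ASCII PLY file. It is not the code in `so101_to_phystwin.py` or `export_ply.py`: the function names, the pinhole intrinsics (`fx`, `fy`, `cx`, `cy`), and the assumption that depth is stored in integer millimetres (`depth_scale=0.001`) are all illustrative.

```python
import io


def backproject(depth, fx, fy, cx, cy, depth_scale=0.001):
    """Back-project a depth image into camera-frame (x, y, z) points.

    `depth` is a list of rows of raw depth values. A value of 0 is treated
    as "no measurement" and skipped. `depth_scale` converts raw units to
    metres (assumed here: 1 unit = 1 mm).
    """
    points = []
    for v, row in enumerate(depth):
        for u, d in enumerate(row):
            if d == 0:
                continue
            z = d * depth_scale
            # Standard pinhole model: pixel offset from the principal
            # point, scaled by depth over focal length.
            x = (u - cx) * z / fx
            y = (v - cy) * z / fy
            points.append((x, y, z))
    return points


def write_ply(points, stream):
    """Write points to `stream` as an ASCII PLY point cloud."""
    stream.write("ply\nformat ascii 1.0\n")
    stream.write(f"element vertex {len(points)}\n")
    stream.write("property float x\nproperty float y\nproperty float z\n")
    stream.write("end_header\n")
    for x, y, z in points:
        stream.write(f"{x:.6f} {y:.6f} {z:.6f}\n")


if __name__ == "__main__":
    toy_depth = [[0, 1000], [2000, 0]]  # 2x2 image, two valid pixels
    pts = backproject(toy_depth, fx=500.0, fy=500.0, cx=0.5, cy=0.5)
    buf = io.StringIO()
    write_ply(pts, buf)
    print(buf.getvalue())
```

A real pipeline would also apply each camera's extrinsics to bring the four views into a common frame before merging; that step is omitted here.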

Scripts for generating and visualizing bimanual manipulation trajectories used during data collection and evaluation:

- `generate_dual_v5.py` / `v5b` / `v6` / `v7`: bimanual pull-apart, push-together, fold, and lift trajectories (iterated versions as the robot setup evolved)

- `generate_single_gripper.py` / `generate_single_gripper_right02.py`: single-arm baseline trajectories

- `generate_triptych.py`: side-by-side comparison visualization: real RGB | point cloud | PhysTwin prediction

- `test_lift.py` / `test_lift_4v.py` / `test_lifts.py` / `test_single.py`: live trajectory test scripts used during hardware debugging
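As a rough illustration of what a pull-apart or push-together trajectory looks like as data, the sketch below linearly interpolates two gripper positions away from each other along the x axis. It is not taken from the `generate_dual_*` scripts: the function name, the choice of axis, and the assumption that the left gripper sits at the smaller x value are all hypothetical.

```python
def pull_apart_waypoints(left_start, right_start, extra_separation, steps):
    """Return `steps` waypoints as (left_xyz, right_xyz) pairs.

    Each gripper moves half of `extra_separation` (metres) along x, in
    opposite directions, with y and z held fixed. A negative
    `extra_separation` gives a push-together motion instead.
    """
    if steps < 2:
        raise ValueError("need at least a start and an end waypoint")
    half = extra_separation / 2.0
    waypoints = []
    for i in range(steps):
        t = i / (steps - 1)  # runs from 0.0 at the start to 1.0 at the end
        left = (left_start[0] - t * half, left_start[1], left_start[2])
        right = (right_start[0] + t * half, right_start[1], right_start[2])
        waypoints.append((left, right))
    return waypoints


if __name__ == "__main__":
    for left, right in pull_apart_waypoints(
        (-0.05, 0.0, 0.10), (0.05, 0.0, 0.10), extra_separation=0.04, steps=5
    ):
        print(left, right)
```

Fold and lift motions need curved or vertical paths, so they would not fit this straight-line form.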

The data was collected on the Sew Unit — a bimanual cloth manipulation platform I built from scratch:

- Two SO-101 arms (LeRobot platform) mounted inverted on a custom aluminum extrusion frame

- 4x Intel RealSense cameras (D435i + D405) with ChArUco calibration (best stereo pair: 0.68 RMS reprojection error)

- Ender 3 printer bed as workspace

- STS/SCS servo motors, unified serial controller
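For readers unfamiliar with the calibration metric quoted above, RMS reprojection error is commonly computed as the root of the mean squared distance between detected board corners and the same corners re-projected through the calibrated camera model. The sketch below shows that definition only; the function name is hypothetical, pixel coordinates are assumed, and it is not the calibration code used for the Sew Unit.

```python
import math


def rms_reprojection_error(observed, projected):
    """RMS distance between matching 2D points.

    `observed` and `projected` are equal-length sequences of (u, v)
    coordinates in the same order (assumed here to be pixels).
    """
    if len(observed) != len(projected) or len(observed) == 0:
        raise ValueError("need two non-empty point lists of equal length")
    squared = sum(
        (uo - up) ** 2 + (vo - vp) ** 2
        for (uo, vo), (up, vp) in zip(observed, projected)
    )
    return math.sqrt(squared / len(observed))


if __name__ == "__main__":
    detected = [(0.0, 0.0), (10.0, 10.0)]
    reprojected = [(3.0, 4.0), (10.0, 10.0)]
    print(rms_reprojection_error(detected, reprojected))
```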

Full hardware writeup: tabithako.github.io/projects/sew-unit

The dataset contains 11 annotated bimanual cloth manipulation trajectories collected on the Sew Unit. Each trajectory includes:

- Synchronized RGB-D from 4 cameras at 30 Hz

- Robot joint states and gripper poses

- Controller masks (gripper segmentation) — generated via CoTracker 3

- Particle tracks exported for PhysTwin training
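How the four camera streams are synchronized is not described here. One simple software approach, shown purely as an assumption, is to match each frame of a reference camera to the nearest-in-time frame of every other camera and drop the match if the gap exceeds half a frame period (1/60 s at 30 Hz). The function names and the threshold are hypothetical.

```python
from bisect import bisect_left


def nearest_frame(timestamps, t):
    """Index of the entry in sorted, non-empty `timestamps` closest to `t`."""
    i = bisect_left(timestamps, t)
    if i == 0:
        return 0
    if i == len(timestamps):
        return len(timestamps) - 1
    # Pick whichever neighbour is closer; ties go to the earlier frame.
    return i if timestamps[i] - t < t - timestamps[i - 1] else i - 1


def synchronize(reference, others, max_skew=1.0 / 60.0):
    """Match each reference timestamp to one frame index per other stream.

    Returns a list with one entry per reference frame: a tuple of indices
    into each stream in `others`, or None if any stream has no frame
    within `max_skew` seconds.
    """
    matches = []
    for t in reference:
        idx = [nearest_frame(ts, t) for ts in others]
        ok = all(abs(ts[j] - t) <= max_skew for ts, j in zip(others, idx))
        matches.append(tuple(idx) if ok else None)
    return matches


if __name__ == "__main__":
    cam0 = [0.0, 1 / 30, 2 / 30]
    cam1 = [0.002, 0.035, 0.2]  # third frame arrives far too late
    print(synchronize(cam0, [cam1]))
```

Hardware-triggered capture would make this matching step unnecessary.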

When fit to a single training trajectory, PhysTwin learns the cloth's physical parameters (stiffness, damping, mass) and generalises to bimanual actions not seen during training.
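The idea of fitting physical parameters to an observed trajectory can be shown on a much smaller problem. The sketch below simulates a single 1D mass on a damped spring, then recovers stiffness and damping by grid search against a synthetic "observed" trajectory. This is a toy under stated assumptions, not PhysTwin's model or optimizer: the function names, the semi-implicit Euler integrator, the candidate grids, and the fixed mass are all illustrative.

```python
def simulate(k, c, m=1.0, x0=1.0, dt=0.01, steps=200):
    """Positions of a damped spring released from rest at `x0`.

    k: stiffness, c: damping, m: mass. Uses semi-implicit Euler.
    """
    x, v, xs = x0, 0.0, []
    for _ in range(steps):
        a = (-k * x - c * v) / m
        v += a * dt  # update velocity first ...
        x += v * dt  # ... then position with the new velocity
        xs.append(x)
    return xs


def fit(observed, ks, cs):
    """Return the (k, c) pair from the grids that best matches `observed`."""
    best = None
    for k in ks:
        for c in cs:
            predicted = simulate(k, c)
            loss = sum((p - o) ** 2 for p, o in zip(predicted, observed))
            if best is None or loss < best[0]:
                best = (loss, k, c)
    return best[1], best[2]


if __name__ == "__main__":
    observed = simulate(k=20.0, c=0.5)  # stands in for tracked real motion
    print(fit(observed, ks=[10.0, 20.0, 30.0], cs=[0.1, 0.5, 1.0]))
```

Mass is held fixed in this toy because free motion alone only determines the ratios k/m and c/m, not each value separately.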

Training trajectory — particle simulation fit to a real cloth episode:

sew-unit-dual-pull-apart.mp4

Robot executing a recorded bimanual trajectory: video · Leader-follower teleoperation: video

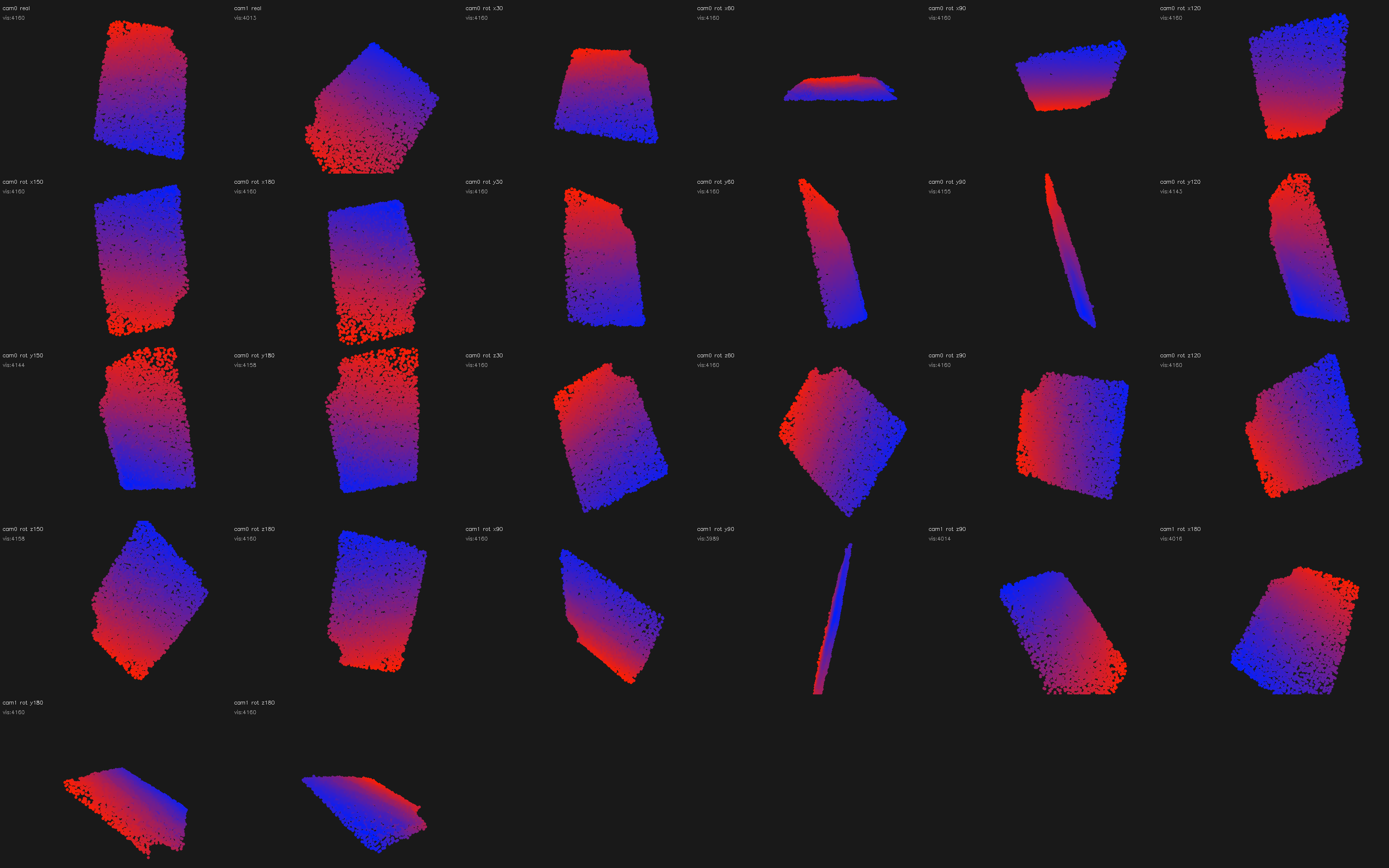

Triptych validation (real RGB | point cloud | PhysTwin prediction):

cloth-dynamics-triptych-cam1.mp4

cloth-dynamics-triptych-cam0.mp4

Novel actions generated with fitted cloth parameters:

sew-unit-dual-push-together.mp4

Fold left over right — bimanual pull-apart video · push-together video

Full writeup: tabithako.github.io/projects/cloth-dynamics

PhysTwin: Physics-Informed Reconstruction and Simulation of Deformable Objects from Videos. Hanxiao Jiang et al. (project page)