fix(spike): Replace posh with reactive DS#665

fix(spike): Replace posh with reactive DS#665lambduhh wants to merge 3 commits intoathensresearch:masterfrom

Conversation

|

|

||

|

|

||

| (d/listen! dsdb :history | ||

| #_(d/listen! dsdb :history |

There was a problem hiding this comment.

causing app to crash, probably due to difference in spec between posh/ds. commented out as short fix just to get app running to test

| (dissoc :merge-prompt)) | ||

| :timeout {:action :clear | ||

| :id :merge-prompt}}))) | ||

| (try |

There was a problem hiding this comment.

same issue as above, short fix just to test

|

|

||

| ;; TODO: move all these DOM utilities to a .cljs file instead of cljc | ||

| (defn scroll-top! [element pos] | ||

| (defn scroll-top! |

There was a problem hiding this comment.

cljsstyle did this?

|

|

||

|

|

||

| (d/listen! dsdb :devtool/open listener) | ||

| #_(d/listen! dsdb :devtool/open listener) |

There was a problem hiding this comment.

short fix, regressions

| (ns athens.views.graph-page | ||

| (:require | ||

| ["react-force-graph" :as rfg] | ||

| #_["react-force-graph" :as rfg] |

There was a problem hiding this comment.

couldn't get this working locally, short fix

|

I've marked the somewhat irrelevant changes that I made to fix regressions in the short term (just to get working so we can test), may even need help putting everything back together but it didn't make sense to put in any extra time before bringing to the team for review and thoughts. Let me know what you think! |

|

It doesn't look like |

There was a problem hiding this comment.

https://www.loom.com/share/189603c0e90947359700061563960a60

Main points from the loom

- The initial prototype could've been 95% fewer LoC changed

- Could've thought through places that might be good to test performance before starting this out! (or asking)

Next steps: start a new branch with only 1 place where a posh query is replaced with the make-reaction. A/B test the performance with a transaction that should lead to a reaction.

|

Thanks for the code review! I appreciate your analysis of thinking like a scientist. Inspires me to tell the story of this PR and structure my response in the same way? Observation Question Additional Information

Hypothesis Testing Trivial

Could use the time or simple-benchmark macros to measure individual runtimes of functions when running athens with small amounts of data vs large amounts of data. (Note: We should get some sort a standard file that represents the working definition of “large amount of data” that can be used as a benchmark for upgrades) The value proposition of Posh is that it performs a series of calculations to attempt to determine if a query/pull that has been executed previously and needs to be recalculated due to new transactions or, if a cached version of the query can be returned instead. With no intermediate transactions, all supported Posh pulls and queries will be memoized.

Therefore, Posh is most suitable for long sessions of analytical workloads where

There is an inflection point of expected data complexity and churn beyond which the speed of Datascript (which is currently the fastest known in-memory datalog processing engine (even faster that Datomic-mem), outweighs the possible values from cached posh queries. Determining if an application is above that threshold cannot be determined with trivial testing, it must be tested based on the behavior of the actual application. RealisticComparing Posh v DS in a realistic scenario involves comparing the behavior of the application with a large volume of data under normal and abnormal operating conditions (using methods listed above) Since Athens is a heavy user input application, there is a significant amount of data churn. Therefore, there is a strong reason to suspect that reactive datascript (since it skips the extra indexing and cache check calculations) would be more performant than posh.Why is it not possible to replace only a subset of the functionality with Reactive Datascript? (as suggested in the PR as an minimum viable prototype)

Note that

Therefore, the only way to test the change in realistic circumstances is to isolate all the Posh functions with equivalent reactive Datascript calls, as the |

|

Really great write-up! I'm stuck on two assumptions:

Does it have to be true that heavy user input ~> data churn? What if the user input was all on the same page? In that case, it seems that a lot of the caching would be useful.

My understand is that the way Reagent/React works is that only the queries on the Virtual DOM are relevant. Changes to data ~> re-compute necessary available subscriptions/reactions ~> necessary UI changes. For instance, And

If I'm understanding this correctly, you're saying we wouldn't be able test the performance of replacing one of these posh queries with a different query? Regarding "large data", there are probably two main contexts. One would be if the current page had a lot of data. One would be if the db was large, but the current page was negligibly small. For the former, we could use Jake's note, which he volunteered in Discord: https://discord.com/channels/708122962422792194/709246147549593601/809965655302340621. I copied and pasted this into this branch, but it seemed to crash Athens. I'm guessing it's a parser issue. For the latter, we could use upload a large public Roam, or just have large enough db. My current I think the biggest missing piece is how to actually benchmark performance. Would love to hear your thoughts on all the above! |

user-input → data churnI agree completely with the point that having a cache is useful. I A few points to consider on this:

When do components update?For an authoritative answer on this question, here is the official The important thing to note is that Why can't we test one of the queries in isolation?We can test non-reactive queries in isolation, but we can't testreactive queries in isolation without introducing a data access layer of abstraction. This is because the reactive datascript reagent/atom and the posh tree are fundamentallyincompatible. Data access layer of abstractionWhile not the point of this PR, it's not a bad practice do adopt a Example table Specific testAlso note that in this case, it is not possible to test reactive not The Testing proposalsI like the idea of testing using a standard DB. Ultimately I think that An important note here is that using This comes back to the undesirable two-layer cache with Therefore if we are encountering behavioral bottlenecks, ("clicking X The important thing, I think, is not the load time, but the user So I think we should go with your suggestion of testing using a DB that Then we can open a parallel reactive Datascript branch and work to bring Once we are convinced there are no regressions and the user experience |

|

This is really interesting @lambduhh. Thank you for your deep dive on all this!! For tiered subscriptions, are you suggesting tiers of datascript queries? That's interesting.

I agree here, I'm not 100% certain we need a cache yet.

I don't understand this one. Why wouldn't it be able to swap just this out? Either way, I don't think all-pages is actually a good query to test. You make the point later on that UX matters more than benchmarks. In this case, UX around writing is more important than all-pages.

Great point. 100% agree

This implementation would definitely explain why That being said, I wasn't able to test this on my personal DB without it crashing (https://www.loom.com/share/98dcfc61c84344dea3b22ccc1dafa232). I don't know the best way to get you a large db, other than downloading a large public one and then importing it off of this branch #561. I don't totally understand how |

|

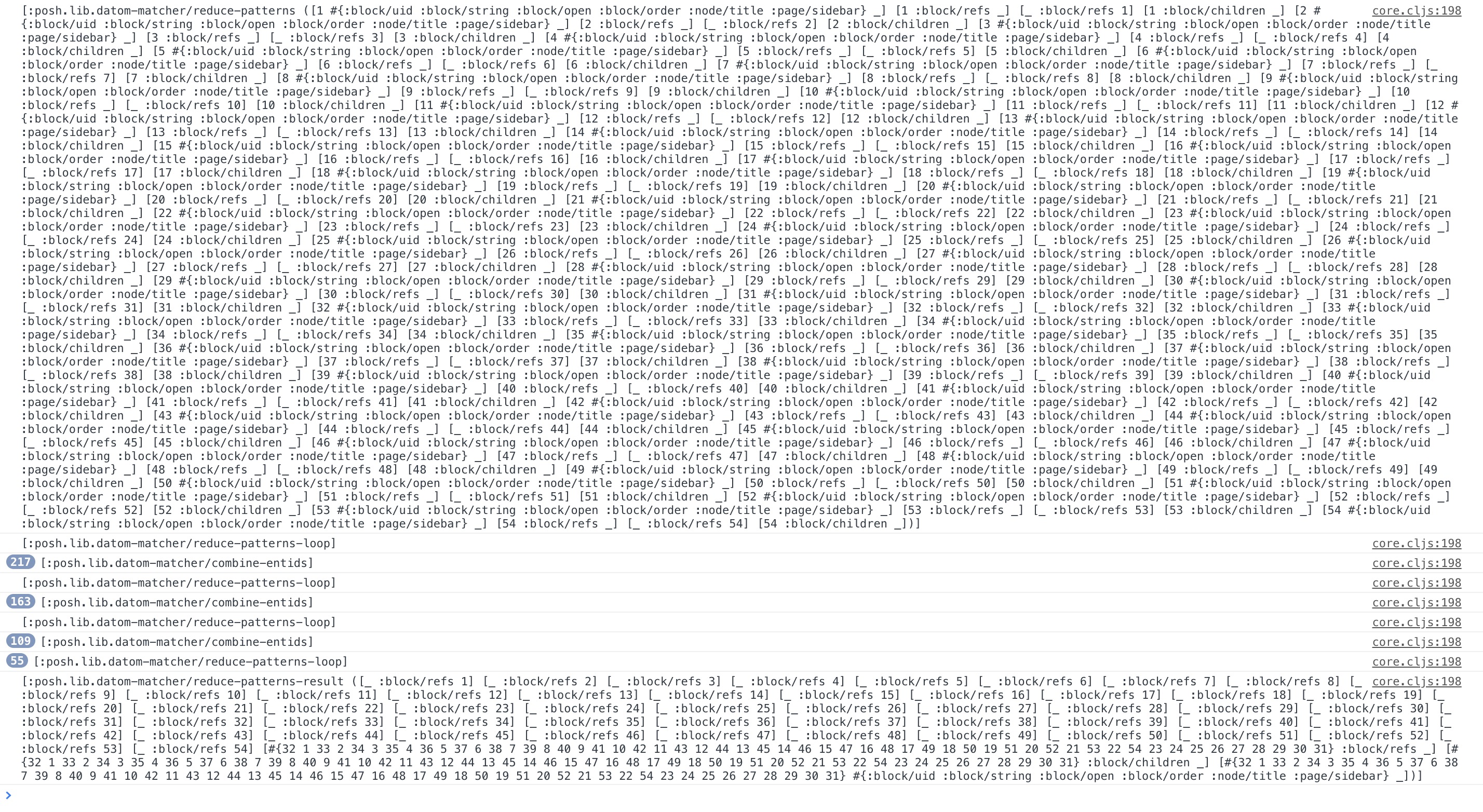

I did a little snooping and found there is a significant constant overhead with

[[:db/add [:block/uid "6aecd4172"] :block/open true]]

(let [more-tx-data (parse-for-links with-tx)

final-tx-data (vec (concat tx-data more-tx-data))]

(pprint final-tx-data)

(let [fake-db (-> (d/datoms @db/dsdb :eavt)

(d/init-db db/schema)

(d/conn-from-db))]

(prn "first datascript transact!")

(time (d/transact! fake-db final-tx-data))

(prn "second datascript transact!")

(time (d/transact! fake-db final-tx-data)))

(prn "first posh transact!")

(time (transact! db/dsdb final-tx-data))

(prn "second posh transact!")

(time (transact! db/dsdb final-tx-data))

(let [outputs (:tx-data (transact! db/dsdb final-tx-data))]

(ph-link-created! outputs)))

Looks to me like something is adding quite a lot of overhead for a seemingly simple update (the second runs measure noop overhead). This is time spent toggling a flag, before we even start parsing and rendering the blocks; seems to be a potentially janky experience even without lots of data. If you've got a bigger database lying around, I wonder if the overhead is constant/linear/exponential with more data (irrespective of how small is the transaction change). And perhaps the problem is not with posh itself, but with a subscription? No idea, have not explored this further. But maybe this will be useful anecdote as a jumping off point for further digging. :) |

|

From @pithyless on discord pasting so all info is in one place: PSS. I forgot to mention it in the PR, but to pushing for a "Data access layer of abstraction"; this level of indirection would really help with identifying performance bottlenecks, testing alternative strategies, but also documenting where the app is changing state." totally agree with both points 💯 |

|

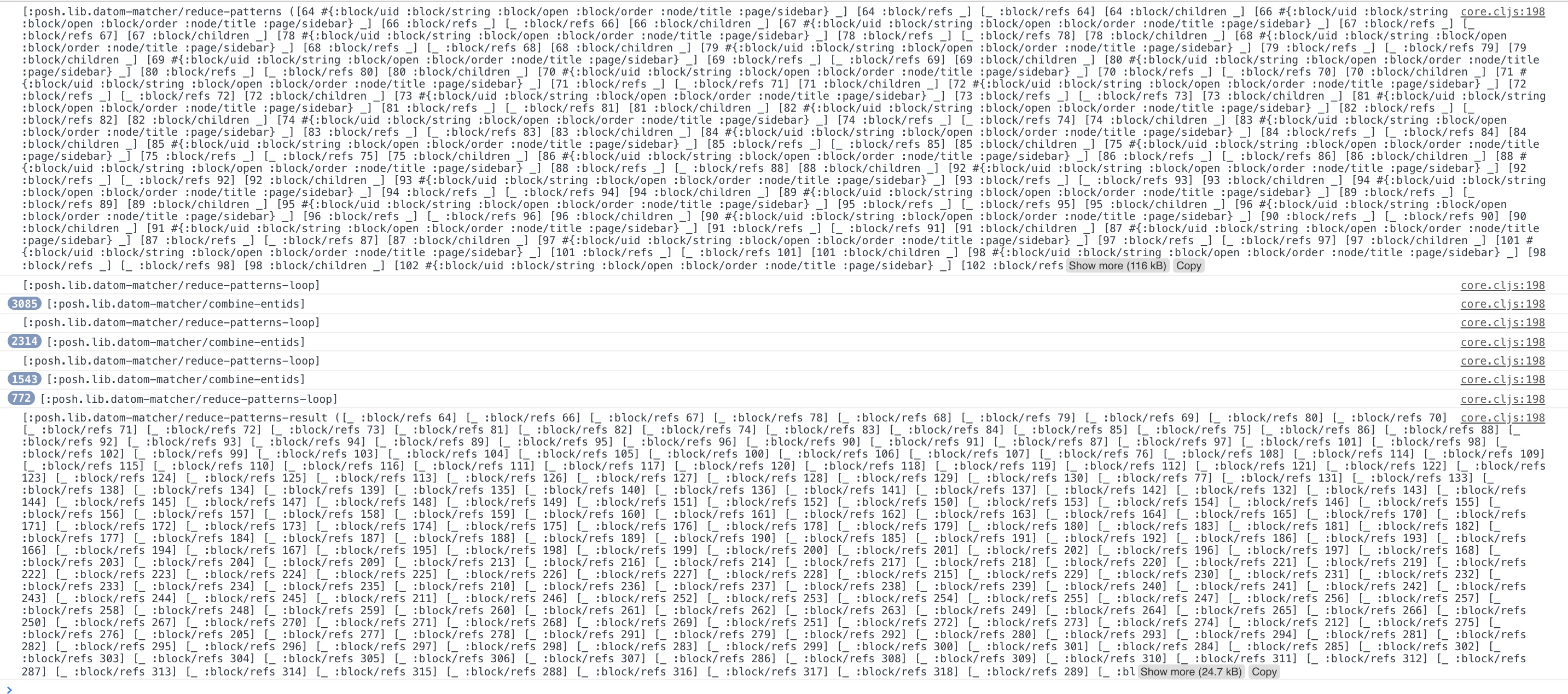

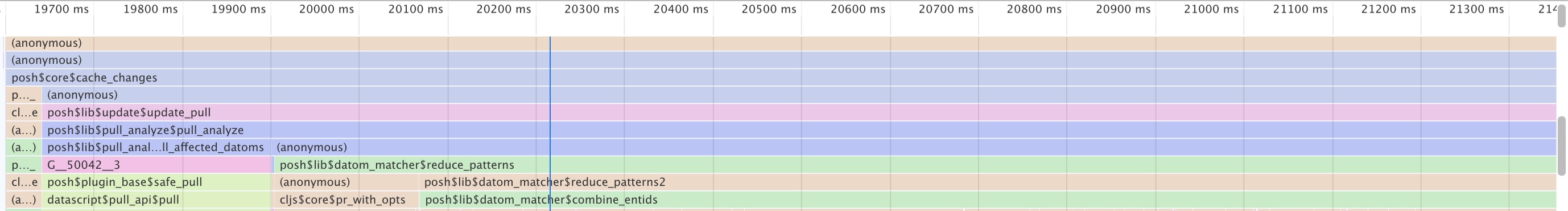

I dug a little further into why things are taking so long. There exists a function This is how often a single show/hide block action will trigger the recursion on the Welcome page: And this is the same action, but on a much larger markdown file: Essentially, This kind of detailed caching strategy is appropriate when querying the database is prohibitively expensive, but that's not the case for Athens. It's also in stark contrast to the approach e.g. Fulcro uses, where it only tracks which ids may have changed and then queries the DB directly. All the recursions, conses, seqs, mapcats, and concats made me also wonder about how much GC pressure is being generated by this kind of operation. And sure enough, it's clearly visible (screenshot includes some debugging vars and prn; feel free to ignore those): Turns out calculating which attributes may have changed in this specific example was 8x more expensive than the actual datascript query to pull all that data back out. This is definitely something that could be potentially refactored and improved upstream in |

|

Is this still being worked on @lambduhh? If no, I'm sure someone would be happy to build off of it. My DB is getting really slow 😿 |

|

Yeah since time is a concern may be quicker to let somebody else take the reins (I've been preoccupied w death in my family). Perhaps @pithyless ? |

|

Sorry to hear that. Let us know if you need anything ❤️ |

|

@lambduhh First and foremost, take care of yourself and your family. ❤️ I think I can find the time to take this PR off your hands and to run with it. |

Solution was originally proposed by @lambduhh in athensresearch#665. See PR discussion for details why posh is considered slow and a mismatch for Athens UI performance right now. This version of the solution introduces a new namespace `athens.posh` as a form of indirection. This way the rest of the codebase does not need any further changes (aside from the namespace require) and also allows easy switching between the two different databases to compare performance and correctness (via `athens.posh/version`). The assumption is that later this hybrid duality can be elided and `athens.posh` refactored into a more robust data fetching layer.

Solution was originally proposed by @lambduhh in athensresearch#665. See PR discussion for details why posh is considered slow and a mismatch for Athens UI performance right now. This version of the solution introduces a new namespace `athens.posh` as a form of indirection. This way the rest of the codebase does not need any further changes (aside from the namespace require) and also allows easy switching between the two different databases to compare performance and correctness (via `athens.posh/version`). The assumption is that later this hybrid duality can be elided and `athens.posh` refactored into a more robust data fetching layer.

Proposed changes to eliminate posh from the code base since it has known performance issues. This replaces with reactive datascript.

athens.rxdbis meant to be a drop in replacement for posh with the exception thatdb/dsdbdoesn't need provided as argument.There seems to be a lot of regression errors that result from posh functions returning different spec than datascript, these can be fixed if it is determined that the performance upgrade is worth it. Seemed like a good point to stop and check before going any further.

@tangjeff0 Please pull down branch and run performance tests or tell me a method of reproducing a performance test so I can try it out myself.