Yuelin Xin¹ · Yuheng Liu¹ · Xiaohui Xie¹ · Xinke Li²

¹UC Irvine · ²City University of Hong Kong

International Conference on Learning Representations 2026

- 2026-01-27: Initial code release.

Install dependencies:

```bash
pip install -r requirements.txt
```

In this codebase, all 3DGS data is handled through the `Gaussian` class implemented in `dataset/gaussiangen.py`. To run the rest of the codebase directly, represent your 3DGS data with this class.
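When adapting your own data to the `Gaussian` class, it may help to recall how raw parameters are usually stored in PLY files produced by the original 3DGS codebase: opacity as a logit, scale in log space, rotation as an unnormalized quaternion (w, x, y, z), and color as the degree-0 SH coefficient `f_dc` rather than RGB. The minimal NumPy sketch below converts those raw values to their activated form. It assumes that standard PLY convention and does not use or reproduce the interface of `dataset/gaussiangen.py`; the function name `activate_raw_gaussians` is hypothetical.

```python
import numpy as np

# Zeroth-order real spherical-harmonics constant, 1 / (2 * sqrt(pi)).
SH_C0 = 0.28209479177387814


def activate_raw_gaussians(opacity_logit, log_scale, quat, f_dc):
    """Convert raw 3DGS PLY attributes to activated values (hypothetical helper).

    Assumes the storage convention of the original 3DGS codebase:
      opacity_logit: (N,)   pre-sigmoid opacity
      log_scale:     (N, 3) per-axis scale in log space
      quat:          (N, 4) rotation quaternion (w, x, y, z), not necessarily unit length
      f_dc:          (N, 3) degree-0 SH coefficient per color channel
    """
    opacity = 1.0 / (1.0 + np.exp(-opacity_logit))              # sigmoid
    scale = np.exp(log_scale)                                     # undo log parameterization
    quat = quat / np.linalg.norm(quat, axis=-1, keepdims=True)    # unit quaternion
    rgb = np.clip(0.5 + SH_C0 * f_dc, 0.0, 1.0)                   # view-independent base color
    return opacity, scale, quat, rgb
```

Keeping the raw and activated forms separate mirrors how 3DGS optimizes unconstrained values and applies activations only when rendering.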

```mermaid
flowchart TB
    subgraph row1[" "]
        direction LR
        A[3D Gaussian Splatting<br/>PLY or Gaussian objects]
        B[Sample each Gaussian as<br/>a small colored point set]
        A --> B
    end

    subgraph row2[" "]
        direction LR
        C[SF-VAE Encoder]
        D[Compact embedding<br/>NPZ tokens]
        E[SF-VAE Decoder]
        C --> D --> E
    end

    subgraph row3[" "]
        direction LR
        F[Reconstructed colored<br/>point set]
        G[Fit Gaussian parameters<br/>scale, rotation, SH, opacity]
        H[3D Gaussian Splatting<br/>for rendering or downstream use]
        F --> G --> H
    end

    row1 --> row2
    row2 --> row3

    style row1 fill:none,stroke:none
    style row2 fill:none,stroke:none
    style row3 fill:none,stroke:none

    classDef input fill:#eef4ff,stroke:#7aa2f7,stroke-width:1.5px,color:#1f2937,rx:10px,ry:10px
    classDef latent fill:#f5efff,stroke:#a78bfa,stroke-width:1.5px,color:#1f2937,rx:10px,ry:10px
    classDef output fill:#eefbf3,stroke:#34d399,stroke-width:1.5px,color:#1f2937,rx:10px,ry:10px

    class A,B input
    class C,D,E latent
    class F,G,H output

    linkStyle default stroke:#94a3b8,stroke-width:1.5px
```
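The first and last stages of this pipeline can be illustrated with a simple stand-in: drawing points from each Gaussian's density N(μ, Σ) with Σ = R S Sᵀ Rᵀ, and recovering mean, scales, and rotation from a point set by moment matching (eigen-decomposition of the sample covariance). This is only a sketch of the general idea under our own assumptions; the sampling scheme and parameter fitting actually used with SF-VAE may differ, color and opacity are omitted, and all function names here are hypothetical.

```python
import numpy as np


def quat_to_rotmat(q):
    """Rotation matrix from a quaternion (w, x, y, z); normalized internally."""
    w, x, y, z = q / np.linalg.norm(q)
    return np.array([
        [1 - 2 * (y * y + z * z), 2 * (x * y - w * z), 2 * (x * z + w * y)],
        [2 * (x * y + w * z), 1 - 2 * (x * x + z * z), 2 * (y * z - w * x)],
        [2 * (x * z - w * y), 2 * (y * z + w * x), 1 - 2 * (x * x + y * y)],
    ])


def sample_gaussian_points(mean, scale, quat, num_points, rng):
    """Draw points x = mean + R @ diag(scale) @ z with z ~ N(0, I) (hypothetical helper)."""
    R = quat_to_rotmat(quat)
    z = rng.standard_normal((num_points, 3))
    return mean + (z * scale) @ R.T


def fit_gaussian_to_points(points):
    """Moment-matching fit: mean, per-axis scales, and rotation matrix from a point set."""
    mean = points.mean(axis=0)
    cov = np.cov(points, rowvar=False)
    eigvals, eigvecs = np.linalg.eigh(cov)        # ascending eigenvalues, orthonormal columns
    scale = np.sqrt(np.clip(eigvals, 0.0, None))  # standard deviation along each principal axis
    if np.linalg.det(eigvecs) < 0:                # flip one axis so R is a proper rotation
        eigvecs[:, 0] *= -1
    return mean, scale, eigvecs
```

Note that the fitted scales come back sorted in ascending order with matching rotation columns, so the recovered (scale, rotation) pair describes the same covariance as the original even though the axis order may differ.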

`converter.py` contains demo code for converting embeddings to 3D Gaussian Splatting and saving the result to a PLY file. You can reuse this logic in your own code, for example to take embeddings predicted by another model and convert them into Gaussian representations, by importing the `Converter` class from `converter.py`. Initializing `Converter` requires loading a pre-trained embedding model checkpoint. We provide two torch-compiled checkpoints for zeroth-order spherical harmonics (SH degree 0):

- `checkpoint_sfvae_sh0.pth`: baseline checkpoint.
- `checkpoint_sfvae_sh0_144.pth`: 4x faster inference, with very slightly lower reconstruction quality.
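If you want to write Gaussians to disk yourself, here is a minimal NumPy sketch of a binary little-endian PLY writer for degree-0 SH Gaussians. It assumes the attribute names and raw (pre-activation) parameterization used by the original 3DGS codebase (x, y, z, nx, ny, nz, f_dc_0..2, opacity, scale_0..2, rot_0..3), which many 3DGS viewers also read. The function `write_sh0_ply` is hypothetical and is not the saving code in `converter.py`.

```python
import numpy as np

# Property names follow the original 3DGS PLY layout for SH degree 0.
PLY_FIELDS = (
    ["x", "y", "z", "nx", "ny", "nz", "f_dc_0", "f_dc_1", "f_dc_2", "opacity"]
    + [f"scale_{i}" for i in range(3)]
    + [f"rot_{i}" for i in range(4)]
)


def write_sh0_ply(path, means, f_dc, opacity_logit, log_scale, quat):
    """Write raw (pre-activation) SH0 Gaussian parameters to a binary PLY (hypothetical helper)."""
    n = means.shape[0]
    normals = np.zeros((n, 3), dtype=np.float32)  # unused by 3DGS rendering; kept for layout compatibility
    columns = np.concatenate(
        [means, normals, f_dc, opacity_logit.reshape(n, 1), log_scale, quat], axis=1
    ).astype("<f4")  # little-endian float32, matching the header below
    assert columns.shape[1] == len(PLY_FIELDS)

    header = "ply\nformat binary_little_endian 1.0\n"
    header += f"element vertex {n}\n"
    header += "".join(f"property float {name}\n" for name in PLY_FIELDS)
    header += "end_header\n"

    with open(path, "wb") as f:
        f.write(header.encode("ascii"))
        f.write(columns.tobytes())  # row-major: one vertex record per row
```

Because every property is a float32, the body is just the row-major bytes of an (N, 17) array, which keeps the writer dependency-free.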

You can also run `converter.py` directly to convert between embeddings and 3DGS:

```bash
# Use --gaussian2emb to encode 3DGS into embeddings, or --emb2gaussian to decode embeddings into 3DGS.
python converter.py \
    --gaussian2emb \
    --src_path <INPUT_PATH> \
    --dist_path <OUTPUT_PATH>
```

`dataset/ply_data.py` implements several dataset classes for loading 3DGS data from PLY files, and the same script also contains a dataset that loads embeddings from NPZ files. You can use these classes to load your own data in PLY (3DGS) or NPZ (embedding) format.
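For a quick look at how a directory of NPZ embeddings can be exposed as a dataset, here is a minimal sketch. It is not the class in `dataset/ply_data.py`: the name `NpzEmbeddingDataset` is hypothetical, and because we do not assume which array keys the repository's NPZ files contain, each item is returned as a dict of all arrays in one file. It follows PyTorch's map-style dataset protocol (`__len__` and `__getitem__`) while depending only on NumPy.

```python
from pathlib import Path

import numpy as np


class NpzEmbeddingDataset:
    """Map-style dataset over *.npz files in a directory (hypothetical sketch)."""

    def __init__(self, root):
        self.files = sorted(Path(root).glob("*.npz"))
        if not self.files:
            raise FileNotFoundError(f"no .npz files found in {root}")

    def __len__(self):
        return len(self.files)

    def __getitem__(self, idx):
        # np.load on an .npz returns a lazy NpzFile; read every array, then close the file.
        with np.load(self.files[idx]) as data:
            return {key: data[key] for key in data.files}
```

If all files share array shapes, an instance can be passed to `torch.utils.data.DataLoader`, whose default collate function batches dicts of NumPy arrays into dicts of tensors.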

`utils/visualization.py` also provides `visualize_gaussian`, a 3DGS rendering function implemented with gsplat that renders a given list of Gaussians. You can also substitute your own rasterization pipeline, such as the original diff-gaussian-rasterization codebase.
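Whichever rasterizer you use, you will need camera parameters. gsplat's `rasterization` function, for instance, takes world-to-camera matrices (`viewmats`, shape [C, 4, 4]) and pinhole intrinsics (`Ks`, shape [C, 3, 3]). The sketch below builds both for a camera looking at a target point, assuming the OpenCV camera convention (x right, y down, looking along +z) used by 3DGS-style rasterizers. It does not reflect the camera setup inside `visualize_gaussian`, and `look_at_viewmat` and `pinhole_intrinsics` are hypothetical helper names.

```python
import numpy as np


def look_at_viewmat(eye, target, world_up=(0.0, 0.0, 1.0)):
    """World-to-camera 4x4 matrix, OpenCV convention (x right, y down, z forward)."""
    eye = np.asarray(eye, dtype=np.float64)
    forward = np.asarray(target, dtype=np.float64) - eye
    forward /= np.linalg.norm(forward)
    right = np.cross(forward, world_up)  # undefined if forward is parallel to world_up
    right /= np.linalg.norm(right)
    down = np.cross(forward, right)      # completes a right-handed (right, down, forward) frame
    R = np.stack([right, down, forward]) # rows: camera axes expressed in world coordinates
    viewmat = np.eye(4)
    viewmat[:3, :3] = R
    viewmat[:3, 3] = -R @ eye            # moves the camera center to the origin
    return viewmat


def pinhole_intrinsics(width, height, fov_x_deg):
    """3x3 intrinsics with square pixels and the principal point at the image center."""
    fx = 0.5 * width / np.tan(0.5 * np.radians(fov_x_deg))
    return np.array([[fx, 0.0, width / 2], [0.0, fx, height / 2], [0.0, 0.0, 1.0]])
```

To use these with gsplat, stack the per-camera matrices into arrays of shape [C, 4, 4] and [C, 3, 3] and convert them to float32 torch tensors on the same device as the Gaussians.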

Training defaults are stored in config/train.yaml. You can launch training directly from that config:

```bash
python train_embedding.py --config config/train.yaml
```

You can still override any config value from the command line. For example:

```bash
python train_embedding.py \
    --config config/train.yaml \
    --num_samples 500000 \
    --norm_weight 0.001 \
    --grid_dim 12 \
    --weight_path <CHECKPOINT_PATH>
```

Optional: resume from a checkpoint.

python train_embedding.py \

--config config/train.yaml \

--resume True \

--weight_path <CHECKPOINT_PATH>@inproceedings{gsembedding2026,

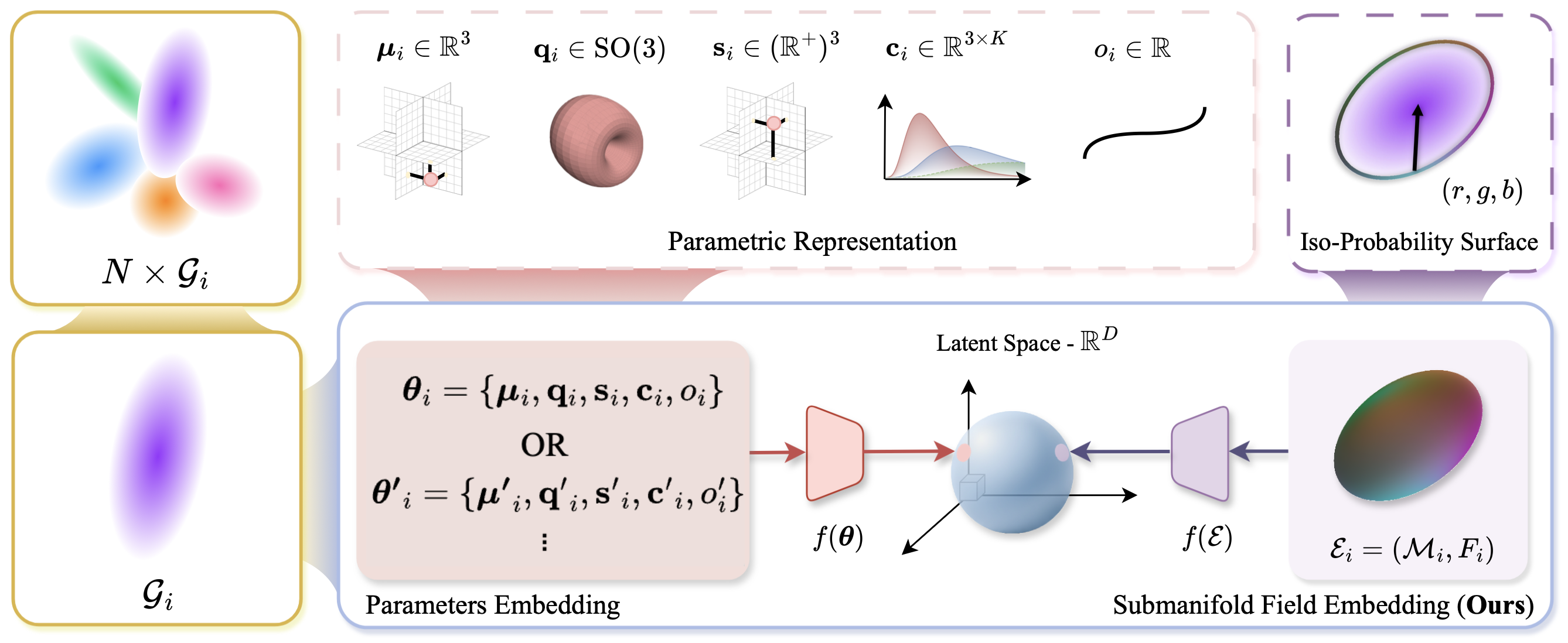

title={Learning Unified Representation of 3D Gaussian Splatting},

author={Yuelin Xin and Yuheng Liu and Xiaohui Xie and Xinke Li},

booktitle={International Conference on Learning Representations},

year={2026}

}

For questions or issues, please open a GitHub issue or contact yuelix1@uci.edu.

This project is released under the Apache 2.0 License. See LICENSE for details.