English | 简体中文

Turn AI from one person's tool into an enterprise operating capability.

Quick start · Highlights · How it works · Community · License · Trademark

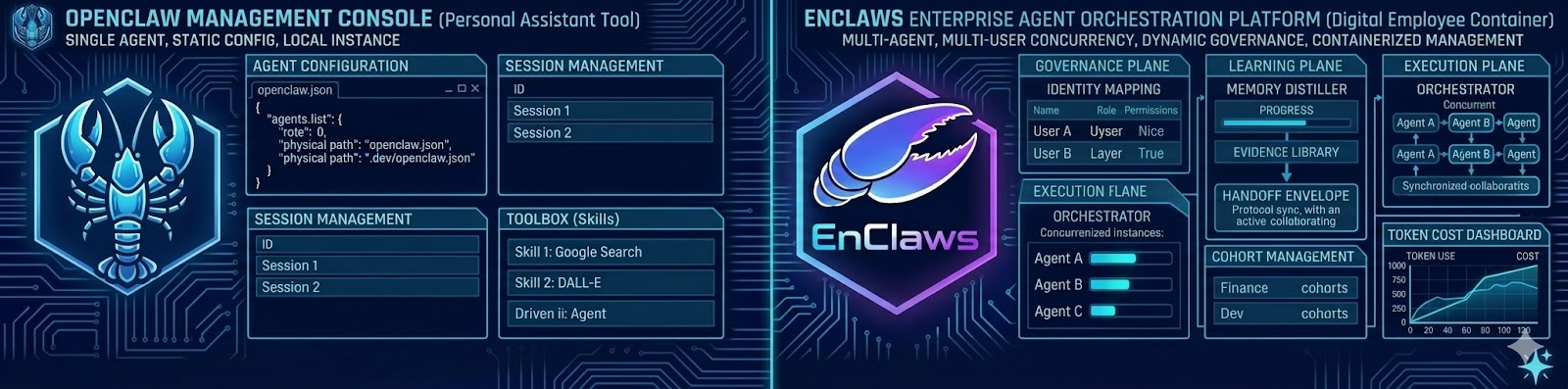

EnClaws is an enterprise AI assistant container platform. It is designed to create, schedule, isolate, upgrade, and audit large numbers of assistant instances across teams, workflows, and business systems.

Where OpenClaw focuses on the personal assistant experience, EnClaws focuses on the enterprise operating environment for digital assistants.

Important: This repository has just been opened. Additional deployment, configuration, and repository documentation will be published as the project expands.

A personal assistant can be powerful for one person. An enterprise has a very different shape.

Enterprises need:

- boundaries between teams, departments, and users

- strict isolation for sensitive context and data

- memory that can exist at industry, company, department, and personal levels

- reusable skills that can spread across many assistants

- management surfaces for status, risk, cost, replay, and auditability

- a platform that can manage large numbers of digital assistants, not a single chat window

In short, enterprises do not just need a smarter assistant. They need a system that can run and govern a digital workforce.

In the Claw world, the split is simple:

- OpenClaw is the personal claw. It is built around the experience of an individual assistant that belongs to one person.

- EnClaws is the enterprise claw. It is built to create, schedule, and manage large numbers of assistant instances so they can take on real work across an organization.

If OpenClaw is the personal operator, EnClaws is the enterprise operating environment.

    npm install -g enclaws
    enclaws gateway

On Windows, download EnClaws-Setup-x.x.x.exe from Releases and double-click to install. No admin rights are required, the Node.js runtime is included, and the installer works fully offline.

After installation, open the desktop shortcut "EnClaws" or run enclaws gateway in a new terminal.

    curl -fsSL --proto '=https' --tlsv1.2 https://raw.githubusercontent.com/hashSTACS-Global/EnClaws/main/install.sh | bash

To build from source, the prerequisites are Node.js >= 22.12.0 and pnpm.

    # 1. Clone the repository
    git clone https://github.com/hashSTACS-Global/EnClaws.git
    cd EnClaws

    # 2. Install dependencies and build
    pnpm install
    pnpm build
    pnpm ui:build # auto-installs UI deps on first run

    # 3. Register the enclaws command globally
    npm link

    # 4. Start the Gateway
    enclaws gateway

After startup, the Gateway is available at http://localhost:18789.

- One assistant, many concurrent tasks

  EnClaws is designed for concurrent execution. A finance assistant should be able to process reimbursement requests for many employees in parallel instead of becoming a single-file queue.

- Native multi-user isolation

  The platform is built for multi-user environments from the start, with isolated context, memory, and execution boundaries for each user.

- Hierarchical memory

  Enterprise assistants can reason across multiple layers of knowledge at once: industry memory, company memory, department memory, and personal memory.

- Memory distillation and upgrade

  Valuable experience is not meant to remain trapped inside raw logs. It can be captured, distilled into reusable capability artifacts, reviewed, and promoted upward when appropriate.

- Skill sharing and propagation

  A strong skill used by one assistant should not stay trapped in one assistant. EnClaws is designed to expose, share, and propagate skills across assistants.

- Audit and state monitoring

  Managers need visibility. EnClaws is intended to surface assistant status, task execution, token cost signals, risk signals, and replayable evidence.

- A2A Collaboration as a roadmap direction

  Lightweight assistant-to-assistant collaboration is part of the forward direction for EnClaws, with an emphasis on lower token overhead and more efficient data exchange.

Unlike a serial assistant that waits for one instruction to finish before the next begins, EnClaws is designed to support concurrent task execution.

This matters in enterprise workloads. A finance assistant should be able to handle many reimbursement requests at the same time, instead of making every employee stand in the same digital queue.

The design goal is not just speed. It is stable, responsive enterprise service behavior under sustained multi-user demand.
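As a rough illustration of the concurrency goal described above, the following TypeScript sketch runs several simulated reimbursement requests with a bounded number in flight. The names `runWithLimit` and `processReimbursement` are hypothetical and are not taken from the EnClaws codebase; the actual executor may be built quite differently.

```typescript
// Hypothetical sketch: none of these names come from the EnClaws codebase.
type Task<T> = () => Promise<T>;

// Run tasks with at most `limit` in flight, keeping results in input order.
async function runWithLimit<T>(tasks: Task<T>[], limit: number): Promise<T[]> {
  const results: T[] = new Array<T>(tasks.length);
  let next = 0;

  // Each worker repeatedly claims the next unclaimed task index.
  async function worker(): Promise<void> {
    while (next < tasks.length) {
      const index = next++;
      results[index] = await tasks[index]();
    }
  }

  const workerCount = Math.max(1, Math.min(limit, tasks.length));
  await Promise.all(Array.from({ length: workerCount }, () => worker()));
  return results;
}

// Simulated reimbursement request handled by a finance assistant.
function processReimbursement(employee: string, amount: number): Task<string> {
  return async () => {
    await new Promise<void>((resolve) => setTimeout(resolve, 10));
    return `${employee}: approved ${amount.toFixed(2)}`;
  };
}

const requests: Task<string>[] = [
  processReimbursement("alice", 120.5),
  processReimbursement("bob", 80),
  processReimbursement("carol", 42.25),
];

runWithLimit(requests, 2).then((lines) => lines.forEach((line) => console.log(line)));
```

Bounding the number of in-flight tasks is one simple way to stay responsive under sustained demand without letting a burst of requests exhaust downstream resources.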

EnClaws is built for multi-user operation from the start.

That means:

- the runtime can distinguish users and execution contexts

- each user can have isolated memory and personalized behavior

- sensitive information is prevented from bleeding across people, teams, or departments

The point is not only convenience. It is operational safety.
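One way to picture the isolation boundary is a context store keyed by both tenant and user, so a lookup for one user can never return another user's memory. This is a minimal sketch under that assumption; `IsolatedContextStore` and its methods are hypothetical names, not EnClaws APIs.

```typescript
// Hypothetical sketch: the class and key format are illustrative only.
interface UserContext {
  memory: Map<string, string>;
}

class IsolatedContextStore {
  private contexts = new Map<string, UserContext>();

  // Tenant and user together form the isolation boundary.
  private key(tenantId: string, userId: string): string {
    return JSON.stringify([tenantId, userId]);
  }

  private contextFor(tenantId: string, userId: string): UserContext {
    const k = this.key(tenantId, userId);
    let ctx = this.contexts.get(k);
    if (!ctx) {
      ctx = { memory: new Map<string, string>() };
      this.contexts.set(k, ctx);
    }
    return ctx;
  }

  remember(tenantId: string, userId: string, name: string, value: string): void {
    this.contextFor(tenantId, userId).memory.set(name, value);
  }

  recall(tenantId: string, userId: string, name: string): string | undefined {
    return this.contextFor(tenantId, userId).memory.get(name);
  }
}

const store = new IsolatedContextStore();
store.remember("acme", "alice", "reimbursement.account", "account-on-file");

// Alice can read her own entry; Bob, in the same tenant, gets nothing back.
console.log(store.recall("acme", "alice", "reimbursement.account"));
console.log(store.recall("acme", "bob", "reimbursement.account"));
```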

Enterprise work rarely belongs to one flat context window.

EnClaws is designed around a layered memory model so assistants can work with multiple kinds of knowledge at once:

- Industry memory for public rules, terms, and regulations

- Company memory for business model, policies, culture, and shared product knowledge

- Department memory for playbooks, workflows, and collaboration rules

- Personal memory for individual habits, preferences, and historical context

This is not one giant mixed brain. It is structured organizational memory.
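The layered model can be sketched as a lookup that walks the layers in a fixed order. The order used here (most specific layer first) is an assumption made for illustration, as are the `resolveMemory` function and the sample keys; the source does not specify how EnClaws resolves conflicts between layers.

```typescript
// Hypothetical sketch: layer names follow the list above; the lookup order is an assumption.
type Layer = "personal" | "department" | "company" | "industry";

// Most specific layer first, so a personal preference can override a company default.
const LOOKUP_ORDER: Layer[] = ["personal", "department", "company", "industry"];

type LayeredMemory = Record<Layer, Map<string, string>>;

interface Resolved {
  layer: Layer;
  value: string;
}

// Return the first layer that knows the key, together with its value.
function resolveMemory(memory: LayeredMemory, key: string): Resolved | undefined {
  for (const layer of LOOKUP_ORDER) {
    const value = memory[layer].get(key);
    if (value !== undefined) {
      return { layer, value };
    }
  }
  return undefined;
}

const memory: LayeredMemory = {
  industry: new Map([["expense.receipt_required", "yes"]]),
  company: new Map([["expense.currency", "USD"]]),
  department: new Map([["expense.approver", "finance-lead"]]),
  personal: new Map([["expense.currency", "EUR"]]),
};

console.log(resolveMemory(memory, "expense.currency"));
console.log(resolveMemory(memory, "expense.receipt_required"));
```

Keeping each layer in its own map, instead of merging everything into one store, is what lets the answer carry its provenance (which layer it came from).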

EnClaws is not meant to blindly synchronize raw memory everywhere.

Instead, the goal is to identify valuable experience, distill it into reusable capability artifacts, review it for desensitization and compliance, and then promote it upward from the personal or team level to department or company scope.

That turns learning into organizational evolution instead of duplicated rework.
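The distill, review, promote sequence can be sketched as a small pipeline in which promotion is refused until a review has run. Everything here is hypothetical: the `Artifact` shape, the scope ladder, and the email-redaction rule stand in for whatever desensitization and compliance checks a real deployment would use.

```typescript
// Hypothetical sketch: artifact shape, scope ladder, and redaction rule are illustrative.
type Scope = "personal" | "team" | "department" | "company";
const SCOPE_ORDER: Scope[] = ["personal", "team", "department", "company"];

interface Artifact {
  id: string;
  summary: string;
  scope: Scope;
  reviewed: boolean;
}

// Placeholder desensitization: redact anything that looks like an email address.
function desensitize(summary: string): string {
  return summary.replace(/[\w.+-]+@[\w-]+(\.[\w-]+)+/g, "[redacted]");
}

function review(artifact: Artifact): Artifact {
  return { ...artifact, summary: desensitize(artifact.summary), reviewed: true };
}

// Move an artifact one scope up; every promotion requires a fresh review.
function promote(artifact: Artifact): Artifact {
  if (!artifact.reviewed) {
    throw new Error("artifact must be reviewed before promotion");
  }
  const index = SCOPE_ORDER.indexOf(artifact.scope);
  const nextScope = SCOPE_ORDER[Math.min(index + 1, SCOPE_ORDER.length - 1)];
  return { ...artifact, scope: nextScope, reviewed: false };
}

const draft: Artifact = {
  id: "a-1",
  summary: "Ask bob@example.com for the approval template",
  scope: "personal",
  reviewed: false,
};

const promoted = promote(review(draft));
console.log(promoted.scope, "-", promoted.summary);
```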

A good enterprise platform should let capability travel.

EnClaws is designed around a standardized skill-sharing model so that a skill proven useful in one assistant can be exposed, reused, and propagated to others.

One assistant learning something useful should make the whole system better.
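A skill-sharing model of this kind might look like a registry where a skill is published once and then granted to other assistants. The sketch below assumes that shape; `SkillRegistry`, `publish`, `grant`, and `invoke` are hypothetical names and do not describe the actual EnClaws Skill Spec.

```typescript
// Hypothetical sketch: registry API and skill shape are illustrative only.
interface Skill {
  name: string;
  version: string;
  run: (input: string) => string;
}

class SkillRegistry {
  private skills = new Map<string, Skill>();
  private grants = new Map<string, Set<string>>(); // assistant id -> skill names

  // Expose a skill so other assistants can be granted access to it.
  publish(skill: Skill): void {
    this.skills.set(skill.name, skill);
  }

  grant(assistantId: string, skillName: string): void {
    if (!this.skills.has(skillName)) {
      throw new Error(`unknown skill: ${skillName}`);
    }
    const granted = this.grants.get(assistantId) ?? new Set<string>();
    granted.add(skillName);
    this.grants.set(assistantId, granted);
  }

  invoke(assistantId: string, skillName: string, input: string): string {
    const skill = this.skills.get(skillName);
    if (!skill || !this.grants.get(assistantId)?.has(skillName)) {
      throw new Error(`${assistantId} cannot use ${skillName}`);
    }
    return skill.run(input);
  }
}

const registry = new SkillRegistry();
registry.publish({
  name: "summarize-invoice",
  version: "1.0.0",
  run: (input) => `summary(${input.length} chars)`,
});

// The same published skill is propagated to two different assistants.
registry.grant("finance-assistant", "summarize-invoice");
registry.grant("procurement-assistant", "summarize-invoice");
console.log(registry.invoke("procurement-assistant", "summarize-invoice", "invoice #42"));
```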

The more capable digital assistants become, the more important observability becomes.

EnClaws is intended to provide a management-facing view of:

- assistant state

- executed instructions

- risk signals

- token consumption and cost visibility

- replayable process, evidence, and responsibility chains

This is how a digital workforce becomes governable instead of mysterious.
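To make the management view more concrete, here is a minimal sketch of an audit record and a per-assistant usage rollup. The `AuditEvent` fields, the `summarizeUsage` function, and the flat price per 1,000 tokens are assumptions for illustration; they are not the EnClaws schema or pricing model.

```typescript
// Hypothetical sketch: field names and the flat token price are assumptions.
interface AuditEvent {
  timestamp: string; // ISO 8601
  assistantId: string;
  userId: string;
  instruction: string;
  promptTokens: number;
  completionTokens: number;
}

interface UsageSummary {
  events: number;
  tokens: number;
  cost: number;
}

// Roll up event count, token usage, and estimated cost per assistant.
function summarizeUsage(events: AuditEvent[], pricePer1kTokens: number): Map<string, UsageSummary> {
  const byAssistant = new Map<string, UsageSummary>();
  for (const event of events) {
    const summary = byAssistant.get(event.assistantId) ?? { events: 0, tokens: 0, cost: 0 };
    summary.events += 1;
    summary.tokens += event.promptTokens + event.completionTokens;
    summary.cost = (summary.tokens / 1000) * pricePer1kTokens;
    byAssistant.set(event.assistantId, summary);
  }
  return byAssistant;
}

const auditLog: AuditEvent[] = [
  { timestamp: "2025-01-01T09:00:00Z", assistantId: "finance", userId: "alice", instruction: "approve reimbursement", promptTokens: 1200, completionTokens: 300 },
  { timestamp: "2025-01-01T09:05:00Z", assistantId: "finance", userId: "bob", instruction: "approve reimbursement", promptTokens: 400, completionTokens: 100 },
  { timestamp: "2025-01-01T09:10:00Z", assistantId: "hr", userId: "carol", instruction: "draft offer letter", promptTokens: 500, completionTokens: 500 },
];

const usage = summarizeUsage(auditLog, 0.5);
usage.forEach((summary, assistantId) => console.log(assistantId, summary));
```

Because each record keeps who, what, and when alongside the token counts, the same log can serve both cost reporting and replay.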

A2A Collaboration is part of the forward direction for EnClaws.

The aim is a lightweight inter-container collaboration model where many coordination instructions can be completed through direct protocol exchange rather than repeated full-model interpretation.

That means:

- lower token consumption

- more efficient shared data flow

- multi-assistant cooperation that behaves more like a coordinated team

A2A Collaboration is listed as a roadmap direction rather than a shipped capability.
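Purely to illustrate the idea of direct protocol exchange, the sketch below routes structured messages to registered handlers and only falls back to model interpretation for intents it does not recognize. The message shape, the `A2ARouter` class, and the intent names are invented for this example; no A2A protocol has been published in this document.

```typescript
// Hypothetical sketch: message shape and router are illustrative, not an EnClaws protocol.
interface A2AMessage {
  from: string;
  to: string;
  intent: string;
  payload: Record<string, unknown>;
}

type Handler = (payload: Record<string, unknown>) => Record<string, unknown>;

class A2ARouter {
  private handlers = new Map<string, Handler>();
  // Counts how often a message had to fall back to model interpretation.
  modelFallbacks = 0;

  register(intent: string, handler: Handler): void {
    this.handlers.set(intent, handler);
  }

  handle(message: A2AMessage): Record<string, unknown> {
    const handler = this.handlers.get(message.intent);
    if (handler) {
      // Known intent: answered by direct exchange, with no model call.
      return handler(message.payload);
    }
    this.modelFallbacks += 1;
    return { status: "needs_model_interpretation", intent: message.intent };
  }
}

const router = new A2ARouter();
router.register("budget.remaining", (payload) => ({
  department: payload["department"],
  remaining: 12000,
}));

const direct = router.handle({
  from: "procurement-assistant",
  to: "finance-assistant",
  intent: "budget.remaining",
  payload: { department: "R&D" },
});
const fallback = router.handle({
  from: "procurement-assistant",
  to: "finance-assistant",
  intent: "negotiate.contract",
  payload: {},
});
console.log(direct, fallback, router.modelFallbacks);
```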

    Users / Teams / Enterprise Systems
                    │
                    ▼
    Assistant Runtime + Control Plane
                    │
         ┌──────────┼──────────┬──────────┐
         ▼          ▼          ▼          ▼
    Concurrency  Memory     Skills      Audit
                    │
                    ▼
    Web management panel and enterprise surfaces

A slightly more detailed mental model:

    Enterprise users + business systems + work events
                    │
                    ▼
    containerized assistant runtime and scheduler
                    │
         ┌──────────┼──────────┬──────────┐
         ▼          ▼          ▼          ▼
     isolation   memory     skills   monitoring
                    │
                    ▼
    evidence, replay, operations, action

    ┌─────────────────────────────────────────────────────────────────────────┐
    │                              Client Layer                               │
    │           Web Control UI · CLI / TUI · macOS / iOS / Android            │
    └────────────────────────────────────┬────────────────────────────────────┘
                                         │
    ┌────────────────────────────────────▼────────────────────────────────────┐
    │                     Channel Layer: 41+ Integrations                     │
    │                                                                         │
    │   Feishu     DingTalk   WeCom      Telegram   Discord    Slack          │
    │   WhatsApp   Teams      Matrix     Signal     LINE       Mattermost ... │
    └────────────────────────────────────┬────────────────────────────────────┘
                                         │
    ┌────────────────────────────────────▼────────────────────────────────────┐
    │                              Gateway Layer                              │
    │                                                                         │
    │  ┌─────────────┐  ┌─────────────┐  ┌────────────────────────────────┐   │
    │  │ WebSocket   │  │ HTTP        │  │ Authentication & Authorization │   │
    │  │ Server      │  │ Server      │  │ JWT + 5-Level RBAC             │   │
    │  └──────┬──────┘  └──────┬──────┘  │ Method-scoped permissions      │   │
    │         │                │         └───────────────┬────────────────┘   │
    │         └───────┬────────┘                         │                    │
    │                 │                                  │                    │
    │  ┌──────────────▼──────────────────────────────────▼─────────────────┐  │
    │  │ Tenant Router ──→ Session Resolver ──→ Channel Manager            │  │
    │  │ Plugin Manager    Cron Service                                    │  │
    │  └─────────────────────────────────┬─────────────────────────────────┘  │
    └────────────────────────────────────┼────────────────────────────────────┘
                                         │
    ┌────────────────────────────────────▼────────────────────────────────────┐
    │                               Core Engine                               │
    │                                                                         │
    │  ┌──────────────┐  ┌──────────────┐  ┌──────────────────────────────┐   │
    │  │ Message      │  │ Reply        │  │ Agent Runner                 │   │
    │  │ Dispatch     │  │ Engine       │  │ (pi-embedded-runner)         │   │
    │  └──────┬───────┘  └──────┬───────┘  └──────────────┬───────────────┘   │
    │         │                 │                         │                   │
    │         └────────┬────────┘                         │                   │
    │                  │                                  │                   │
    │  ┌───────────────▼──────────────────────────────────▼────────────────┐  │
    │  │                    StreamFn Execution Pipeline                    │  │
    │  │      pre-process → LLM call → tool execution → post-process       │  │
    │  └─────────────────────────────────┬─────────────────────────────────┘  │
    │                                    │                                    │
    │  ┌────────────┐  ┌─────────────────▼─┐  ┌───────────────────────────┐   │
    │  │ 60+ Tools  │  │ 55 Skills         │  │ ACP: Concurrent Executor  │   │
    │  │            │  │ (overridable)     │  │ 100+ parallel tasks       │   │
    │  └────────────┘  └───────────────────┘  └───────────────────────────┘   │
    └────────────────────────────────────┬────────────────────────────────────┘
                                         │
               ┌─────────────────────────┼───────────────────────┐
               │                         │                       │
    ┌──────────▼─────────┐ ┌─────────────▼───────┐ ┌─────────────▼────────────┐
    │  LLM Providers     │ │  Storage Layer      │ │  Observability           │
    │                    │ │                     │ │                          │
    │  Anthropic Claude  │ │  PostgreSQL         │ │  Interaction Traces      │
    │  OpenAI GPT-4      │ │   (multi-tenant)    │ │   prompt/completion/cost │
    │  Google Gemini     │ │  SQLite             │ │  Audit Logs              │
    │  DeepSeek          │ │   (lightweight)     │ │   who/what/when          │
    │  Qwen              │ │  LanceDB            │ │  Token Usage Analytics   │
    │  Moonshot          │ │   (vector memory)   │ │   7d/30d trends          │
    │  Ollama (local)    │ │  File System        │ │   user/agent/model ranks │
    │                    │ │   (tenant-isolated) │ │                          │
    └────────────────────┘ └─────────────────────┘ └──────────────────────────┘

    User (Feishu / Discord / ...)
      │
      │  ① Send message
      ▼
    Channel Adapter ──→ normalize to internal format
      │
      │  ② Authenticate
      ▼
    Gateway ──→ JWT verification + RBAC check
      │
      │  ③ Route
      ▼
    Tenant Router ──→ extract tenant from channel metadata
      │               load tenant config from PostgreSQL
      │
      │  ④ Dispatch
      ▼
    Agent Runtime ──→ load SOUL.md + TOOLS.md + MEMORY.md + Skills
      │
      │  ⑤ Reason
      ▼
    LLM Provider ──→ prompt + context → stream response
      │              ↕ tool calls (execute → feed back → re-call)
      │
      │  ⑥ Reply
      ▼
    Channel Adapter ──→ format reply (text / card / file / image)
      │
      │  ⑦ Observe
      ▼
    Interaction Tracer ──→ record prompt, completion, tokens, cost
    Audit Logger       ──→ log event for compliance
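The seven steps in the message flow can be read as a simple pipeline. The sketch below walks one message through stubbed versions of each stage; `handleMessage`, the `Deps` interface, and the stub implementations are hypothetical and say nothing about how the real Gateway, Tenant Router, or Agent Runtime are implemented.

```typescript
// Hypothetical sketch of the seven-step flow; every name here is illustrative.
interface RawMessage {
  channel: string;
  tenantId: string;
  token: string;
  text: string;
}

interface Deps {
  verifyToken: (token: string) => string | undefined; // user id when the token is valid
  loadTenantConfig: (tenantId: string) => { model: string } | undefined;
  callModel: (model: string, prompt: string) => string;
}

interface FlowResult {
  reply: string;
  steps: string[];
}

function handleMessage(raw: RawMessage, deps: Deps): FlowResult {
  const steps: string[] = [];

  const text = raw.text.trim(); // ① normalize to an internal format
  steps.push("normalize");

  const userId = deps.verifyToken(raw.token); // ② authenticate
  if (userId === undefined) throw new Error("authentication failed");
  steps.push("authenticate");

  const config = deps.loadTenantConfig(raw.tenantId); // ③ route to the tenant
  if (config === undefined) throw new Error(`unknown tenant: ${raw.tenantId}`);
  steps.push("route");

  const prompt = `[user:${userId}] ${text}`; // ④ dispatch: assemble the agent context
  steps.push("dispatch");

  const completion = deps.callModel(config.model, prompt); // ⑤ reason
  steps.push("reason");

  const reply = `(${raw.channel}) ${completion}`; // ⑥ format the reply for the channel
  steps.push("reply");

  steps.push("observe"); // ⑦ a real system would record traces and audit logs here
  return { reply, steps };
}

const deps: Deps = {
  verifyToken: (token) => (token === "valid-token" ? "alice" : undefined),
  loadTenantConfig: (tenantId) => (tenantId === "acme" ? { model: "stub-model" } : undefined),
  callModel: (model, prompt) => `${model} echo: ${prompt}`,
};

const result = handleMessage(
  { channel: "feishu", tenantId: "acme", token: "valid-token", text: "  hello  " },
  deps,
);
console.log(result.reply);
```

Passing the external dependencies in as functions keeps the flow testable without a live channel, database, or model provider.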

EnClaws is not trying to be just a fancier AI toy.

Nor is it trying to be an abstract substrate that only a small circle of architects can understand.

Its north star is to gradually turn how enterprises operate into an open, collaborative, and evolvable AI system.

EnClaws aims to help define the foundation layer for AI in real enterprise workflows.

If you want AI to move from demos into business operations:

- star the repository

- open issues with concrete operator needs

- participate in Skill Spec and runtime discussions

- help make enterprise AI more reproducible, governable, and shareable

EnClaws stands on the shoulders of open-source giants. We gratefully acknowledge:

- openclaw/openclaw

  The personal assistant foundation that helped define a strong digital assistant paradigm. EnClaws extends that line of thinking toward enterprise-scale containerized operation.

- luolin-ai/openclawWeComzh

  Valuable reference work for Enterprise WeCom adaptation and the multi-tenant enterprise IM integration layer.

We remain committed to an open-contract spirit and to improving enterprise AI runtime standards together with the open-source community.

- See CONTRIBUTING.md for contribution guidelines.

- See GOVERNANCE.md for project decision-making and maintainer expectations.

- See CODE_OF_CONDUCT.md for community standards.

- See SECURITY.md for vulnerability reporting.

- See TRADEMARK.md for brand usage rules.

Stay close to releases, operator feedback, and product discussion:

- Feishu group: Join via link

- Discord server: Join via invite


Licensed under Apache License 2.0. See LICENSE.

The source code is open under Apache License 2.0, but the project names, logos, and brand identifiers are reserved.

Apache License 2.0 does not grant trademark rights. For permitted and prohibited brand usage, see TRADEMARK.md.