GenCP is a project funded by ESA (European Space Agency) based on CycleGAN and pix2pix in PyTorch. Please refer to the original README for more information.

The original code is licensed under the BSD 3-Clause License, and all copyright notices have been retained.

Minor changes have been made:

- LPIPS loss option has been added for training, see training section

- Demonstration notebooks for high resolution (HR) and very high resolution (VHR) GenCP images have been added

The scope of this project is to develop a proof-of-concept prototype that provides Generated Control Point (GenCP) image chips computed with generative AI techniques. Ground Control Points (GCPs) are reference measurements used in geometric Calibration / Validation (Cal/Val) activities for remote sensing images.

The following picture illustrates the GenCP concept: image translation from map to synthetic satellite image

Currently, there are two common approaches to obtain a GCP set:

- Ground-based surveys, mostly using GNSS receivers with mm/cm level accuracy, that are suitable for manual labelling in images

- Extraction from reference raster datasets, often Sentinel-2 mosaics (S2 GRI) or any other VHR images, for which uncertainties are known, and that is suitable for automatic image matching procedures.

In both cases, the reference points are provided to the user along with GCP image chips from an EO sensor, including vector data and GNSS measurements (if available), or, more straightforwardly, with full raster reference data that sufficiently describes the point location. ESA also aims to develop and share a comprehensive GCP database (DB), which requires clearly explained point locations together with accurate coordinates that need to be identified in the target image.

However, although providing GCP image chips is an efficient way for users to interpret a GCP in a target image (labelling), sharing and distributing original VHR data within the community remains a critical issue because of copyright law and licensing policies.

Furthermore, even if some free ground photos or screenshots are available and can be used as part of a GCP description report, these photos and views are not georeferenced, so the GCP must be identified manually. As a consequence, the usefulness of GCP data for geometric Cal/Val purposes is considerably limited.

Last but not least, radiometric and geometric differences still exist between the reference image chip and the target image, which results in a loss of accuracy in the matching process.

To overcome these issues, an appropriate solution is to generate synthetic GCP images, so-called GenCP, whose main purpose is to serve as a geometric raster reference.

In the scope of this project, two AI models have been developed to support two different resolutions:

- VHR with 50 cm images

- HR with 10 m images

The following diagram illustrates the GenCP workflow:

Note: the image patches used for training and the generated images are 8-bit images.

Below are some examples of HR generated images along with the corresponding reference S2 patches and OSM rasters.

[HR example images: OSM raster, reference image (S2 patch) and generated image for each sample]

VHR examples are illustrated below, from UAV images.

[VHR example images: OSM raster, reference image and generated image for each sample]

Two notebooks are available to demonstrate how to generate synthetic HR and VHR images from OSM rasters.

Demonstration data is available for HR and VHR. The notebooks can also be used on users' OSM rasters. Please refer to the data section to see guidelines on how to generate OSM rasters compatible with the models' weights provided.

For HR images, we provide a training dataset of image pairs (S2 patches and corresponding OSM rasters), available on Zenodo.

To generate your own OSM rasters, here are some guidelines:

- Use the osmnx library to download OSM vectors over your area of interest

- Define each OSM feature's color for the rasterization based on the colors used in this project. HR colors and VHR colors and width describe the OSM features used in the GenCP project and their assigned colors (and widths) for creating OSM rasters. Note: for the HR case, the CLC 10 m raster was used in addition to OSM to fill missing values in the OSM rasters; the colors used for it are also defined in HR colors.

- Use GDAL to rasterize OSM vectors
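As a rough illustration of the last guideline, the sketch below builds a `gdal_rasterize` command that burns one OSM feature class into an 8-bit raster, with one `-burn` value per band. The file names, bounding box, pixel size, attribute filter and RGB triple are placeholders invented for this example, not the project's values; the actual colors and widths are the ones listed in HR colors and VHR colors and width. Downloading the vectors with osmnx is not shown because it needs network access.

```python
import shlex


def build_rasterize_command(vector_path, raster_path, rgb, bounds, pixel_size, where=None):
    """Return a gdal_rasterize argument list that burns `rgb` into an 8-bit raster."""
    xmin, ymin, xmax, ymax = bounds
    cmd = ["gdal_rasterize"]
    for value in rgb:
        if not 0 <= value <= 255:
            raise ValueError("8-bit rasters need burn values in 0..255")
        cmd += ["-burn", str(value)]                      # one burn value per band
    cmd += ["-tr", str(pixel_size), str(pixel_size)]      # target resolution
    cmd += ["-te", str(xmin), str(ymin), str(xmax), str(ymax)]  # target extent
    cmd += ["-ot", "Byte"]                                # 8-bit output
    if where is not None:
        cmd += ["-where", where]                          # optional attribute filter
    cmd += [vector_path, raster_path]
    return cmd


# Placeholder inputs: a hypothetical vector file, extent and color.
example = build_rasterize_command(
    "osm_features.gpkg",
    "osm_raster.tif",
    rgb=(255, 255, 0),
    bounds=(500000, 4800000, 502560, 4802560),
    pixel_size=10,
    where="highway IS NOT NULL",
)
print(shlex.join(example))
```

The printed command can be run in a shell where GDAL is installed, or passed to `subprocess.run(example, check=True)`.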

Please refer to the original README for guidelines on training.

For training on an aligned dataset, such as the HR dataset available in Zenodo, the following options and command can be used:

- Use --dataroot to indicate the path to the training dataset

- Use --name to name the experiment

- Use --checkpoints_dir to indicate the path to the checkpoints folder where the model's weights are saved

- Use --direction BtoA to change the input and output domains from B to A (A to B by default)

- Use --LPIPS to replace the L1 loss with the LPIPS loss

- Use --lambda_L1 to change the lambda value (with L1 or LPIPS loss)

Set --model option to "pix2pix" to indicate that a Pix2Pix architecture is used.

python train.py --dataroot path/to/dataset --name experiment_name --model pix2pix --LPIPS --checkpoints_dir path/to/checkpoint_dir --direction BtoA
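When comparing the L1 and LPIPS variants, it can help to generate the command from a small script. The sketch below only assembles the options listed above into argument lists; the helper name and the experiment names are placeholders chosen for this example.

```python
import shlex


def build_train_command(dataroot, name, checkpoints_dir, use_lpips=False, lambda_l1=None):
    """Assemble the pix2pix training command from the options described above."""
    cmd = [
        "python", "train.py",
        "--dataroot", dataroot,
        "--name", name,
        "--model", "pix2pix",
        "--checkpoints_dir", checkpoints_dir,
        "--direction", "BtoA",
    ]
    if use_lpips:
        cmd.append("--LPIPS")                    # LPIPS loss instead of L1
    if lambda_l1 is not None:
        cmd += ["--lambda_L1", str(lambda_l1)]   # weight of the L1 / LPIPS term
    return cmd


# Two runs that differ only in the reconstruction loss (placeholder names).
runs = {
    "gencp_hr_l1": build_train_command(
        "path/to/dataset", "gencp_hr_l1", "path/to/checkpoint_dir"),
    "gencp_hr_lpips": build_train_command(
        "path/to/dataset", "gencp_hr_lpips", "path/to/checkpoint_dir", use_lpips=True),
}
for run_name, cmd in runs.items():
    print(shlex.join(cmd))
```

Each list can be passed to `subprocess.run(cmd, check=True)` from the repository root to start the corresponding training run.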

All options for selecting parameter and hyperparameter values are described in the options folder.
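For context on the `--lambda_L1` and `--LPIPS` options, the pix2pix paper cited in the original README trains the generator $G$ against the discriminator $D$ with an adversarial term plus a weighted reconstruction term. In the notation below (noise input omitted for brevity), $x$ is the input map raster, $y$ the reference image, and $\lambda$ the weight set by `--lambda_L1`:

$$
G^* = \arg\min_G \max_D \; \mathcal{L}_{cGAN}(G, D) + \lambda \, \mathcal{L}_{rec}(G),
\qquad
\mathcal{L}_{rec}(G) = \mathbb{E}_{x,y}\big[\lVert y - G(x) \rVert_1\big]
$$

With `--LPIPS`, the L1 reconstruction term is replaced by an LPIPS perceptual distance between $y$ and $G(x)$, i.e. $\mathcal{L}_{rec}(G) = \mathbb{E}_{x,y}\big[d_{LPIPS}(y, G(x))\big]$. The symbols $\mathcal{L}_{rec}$ and $d_{LPIPS}$ are notation introduced here for illustration; the exact implementation is the one in this repository's code.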

Please refer to the original README for guidelines on testing.

For testing on source images only (map rasters) with the trained GenCP HR model, run the following command:

- Set --model to "test" to indicate testing mode

- Set --dataset_mode to "single" to indicate that only OSM rasters will be provided as inputs

- Set --norm to "batch" to indicate that batch normalization has been used during training

- Set --netG to "unet_256" to indicate that U-Net 256 was used as backbone for the generator during training

python test.py --dataroot path/to/dataset --name experiment_name --model test --results_dir /path/to/result_dir --checkpoints_dir path/to/checkpoint_dir --dataset_mode single --norm batch --netG unet_256
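Before launching the command, a quick check of the expected files can save a failed run. The sketch below assumes the generator weights are stored as `<checkpoints_dir>/<name>/latest_net_G.pth`, following the naming used for the pretrained models in the original README; both helper functions are hypothetical and not part of this repository.

```python
from pathlib import Path
import tempfile


def generator_checkpoint(checkpoints_dir, name, epoch="latest"):
    """Assumed location of the generator weights for an experiment."""
    return Path(checkpoints_dir) / name / f"{epoch}_net_G.pth"


def preflight(dataroot, checkpoints_dir, name):
    """Return a list of problems that would prevent testing (empty if none)."""
    problems = []
    if not generator_checkpoint(checkpoints_dir, name).is_file():
        problems.append("generator weights not found")
    root = Path(dataroot)
    if not root.is_dir() or not any(p.is_file() for p in root.iterdir()):
        problems.append("no input rasters found")
    return problems


# Demonstration on a throwaway directory layout.
with tempfile.TemporaryDirectory() as tmp:
    tmp = Path(tmp)
    (tmp / "ckpt" / "experiment_name").mkdir(parents=True)
    (tmp / "ckpt" / "experiment_name" / "latest_net_G.pth").touch()
    (tmp / "osm").mkdir()
    before = preflight(tmp / "osm", tmp / "ckpt", "experiment_name")
    (tmp / "osm" / "tile_0001.png").touch()
    after = preflight(tmp / "osm", tmp / "ckpt", "experiment_name")

print(before)
print(after)
```

An empty list means both the weights and at least one input file were found at the assumed locations.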

Images generated by the AI model are not georeferenced. Use the following command to georeference generated images based on the corresponding OSM rasters and create a GenCP database:

python GenCP_HR_demo/gencp_georeferencing.py -t "path/to/generated/images" -i "path/to/input/OSM/rasters" -o "path/to/output/genCP_DB"
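The script's internals are not reproduced here. Conceptually, if a generated image shares the pixel grid of the OSM raster it was translated from (an assumption of this sketch), georeferencing amounts to giving it the same affine geotransform and coordinate reference system. The example below illustrates the six-coefficient geotransform convention used by GDAL; the origin, pixel size and image size are made-up values.

```python
def pixel_to_map(geotransform, col, row):
    """Map a pixel corner (col, row) to map coordinates with a GDAL-style geotransform."""
    x_origin, pixel_width, row_rotation, y_origin, col_rotation, pixel_height = geotransform
    x = x_origin + col * pixel_width + row * row_rotation
    y = y_origin + col * col_rotation + row * pixel_height
    return x, y


# Hypothetical north-up OSM raster: 10 m pixels, 256 x 256 pixels, coordinates in metres.
osm_geotransform = (500000.0, 10.0, 0.0, 4802560.0, 0.0, -10.0)
width, height = 256, 256

# Assumption: the generated image has the same pixel grid, so it reuses the geotransform.
generated_geotransform = osm_geotransform

upper_left = pixel_to_map(generated_geotransform, 0, 0)
lower_right = pixel_to_map(generated_geotransform, width, height)
print(upper_left, lower_right)
```

With GDAL's Python bindings, the same idea is usually expressed by reading the geotransform and projection from the source dataset and setting them on the target dataset.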

Quality control is done with KARIOS to evaluate the geometric accuracy of the geo-referenced generated images.

The following figure illustrates the geometric error distribution obtained with KARIOS on a test site (not used for training) for RGB HR generated images. Results show a mean error of around 0.7 pixel (7 m) and an RMSE of around 2.5 pixels (24 m) due to outliers, mostly in rural areas.

The following configuration was used:

Confidence value: 0.8

KLT Matching:

- minDistance: 1

- blocksize: 5

- maxCorners: 0

- matching_winsize: 15

- quality_level: 0.1

- xStart: 0

- tile_size: 4000

- laplacian_kernel_size: 7

- outliers_filtering: true
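The gap between the mean error and the RMSE reported for the HR results is the typical signature of a few large outliers, since the RMSE squares each error before averaging. The sketch below shows the effect on synthetic error magnitudes; the values are invented for illustration and are not KARIOS output.

```python
import math


def mean_and_rmse(errors):
    """Return the mean and root-mean-square of a list of error magnitudes."""
    n = len(errors)
    mean = sum(errors) / n
    rmse = math.sqrt(sum(e * e for e in errors) / n)
    return mean, rmse


PIXEL_SIZE_M = 10.0           # HR pixel size

inliers = [0.4] * 95          # synthetic: most matches are sub-pixel
outliers = [10.0] * 5         # synthetic: a few gross mismatches

clean_mean, clean_rmse = mean_and_rmse(inliers)
mean_px, rmse_px = mean_and_rmse(inliers + outliers)

print(f"without outliers: mean {clean_mean:.2f} px, RMSE {clean_rmse:.2f} px")
print(f"with outliers:    mean {mean_px:.2f} px, RMSE {rmse_px:.2f} px")
print(f"in metres:        mean {mean_px * PIXEL_SIZE_M:.1f} m, RMSE {rmse_px * PIXEL_SIZE_M:.1f} m")
```

In this toy case, five percent of outliers roughly doubles the mean but multiplies the RMSE several times over.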

The following figure illustrates the geometric error distribution obtained with KARIOS on a test site (not used for training) for RGB VHR generated images. Results show a mean error of around 0.6 pixel and an RMSE of around 3 pixels.

The following configuration was used:

KLT Matching:

- minDistance: 1

- blocksize: 25

- maxCorners: 2000000

- matching_winsize: 55

- quality_level: 0.1

- xStart: 0

- tile_size: 5000

- grid_step: 3

- laplacian_kernel_size: 7

- outliers_filtering: true
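To see at a glance how the HR and VHR KLT settings differ, the two lists above can be compared programmatically. The dictionaries below simply restate the values given in this README; their layout is chosen for the comparison and is not meant to reproduce the KARIOS configuration file format.

```python
HR_KLT = {
    "minDistance": 1, "blocksize": 5, "maxCorners": 0, "matching_winsize": 15,
    "quality_level": 0.1, "xStart": 0, "tile_size": 4000,
    "laplacian_kernel_size": 7, "outliers_filtering": True,
}
VHR_KLT = {
    "minDistance": 1, "blocksize": 25, "maxCorners": 2000000, "matching_winsize": 55,
    "quality_level": 0.1, "xStart": 0, "tile_size": 5000, "grid_step": 3,
    "laplacian_kernel_size": 7, "outliers_filtering": True,
}


def diff_settings(a, b):
    """Return {key: (value_in_a, value_in_b)} for keys whose values differ."""
    keys = sorted(set(a) | set(b))
    return {k: (a.get(k), b.get(k)) for k in keys if a.get(k) != b.get(k)}


differences = diff_settings(HR_KLT, VHR_KLT)
for key, (hr_value, vhr_value) in differences.items():
    print(f"{key}: HR={hr_value} VHR={vhr_value}")
```

A value of `None` in the output means the parameter is not listed for that resolution.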

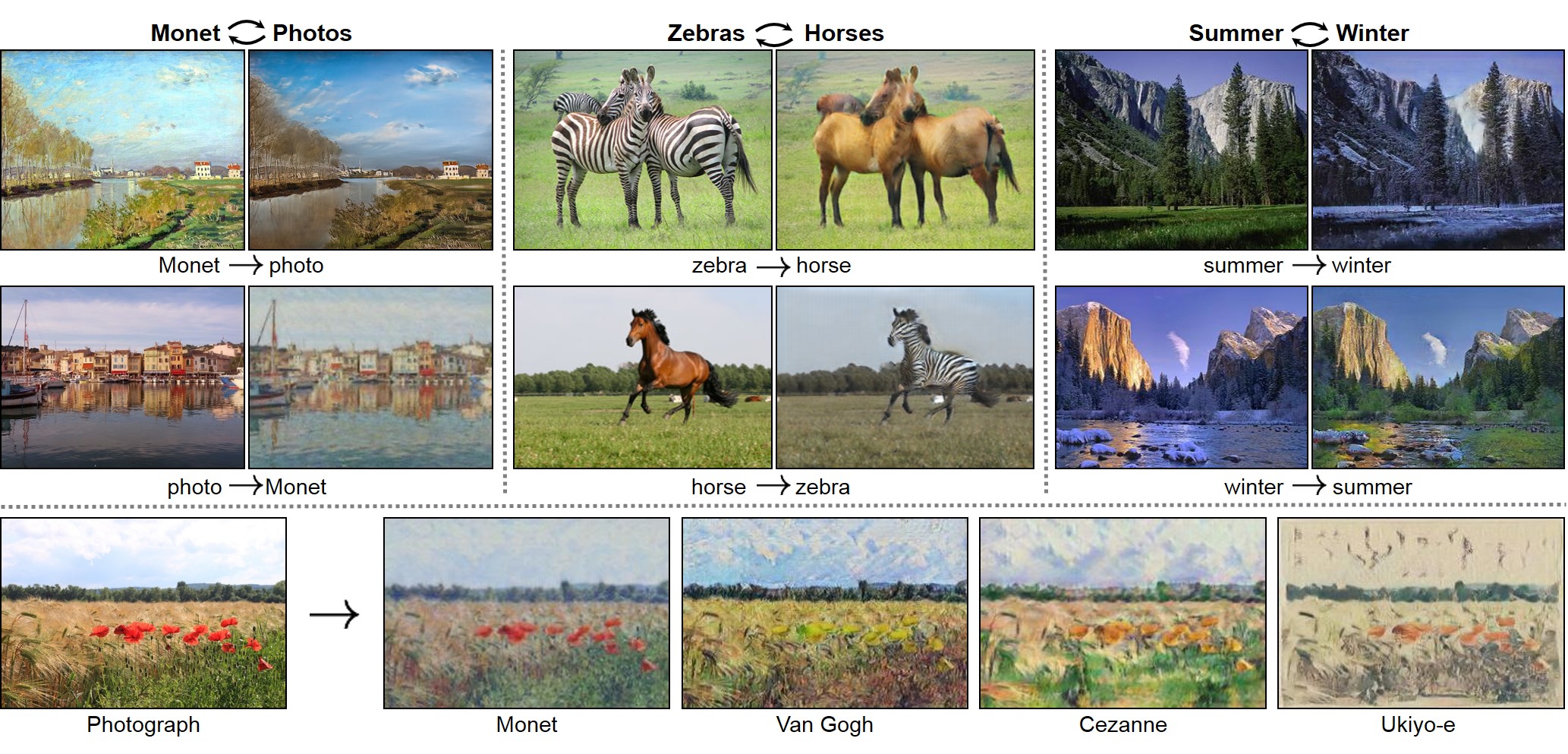

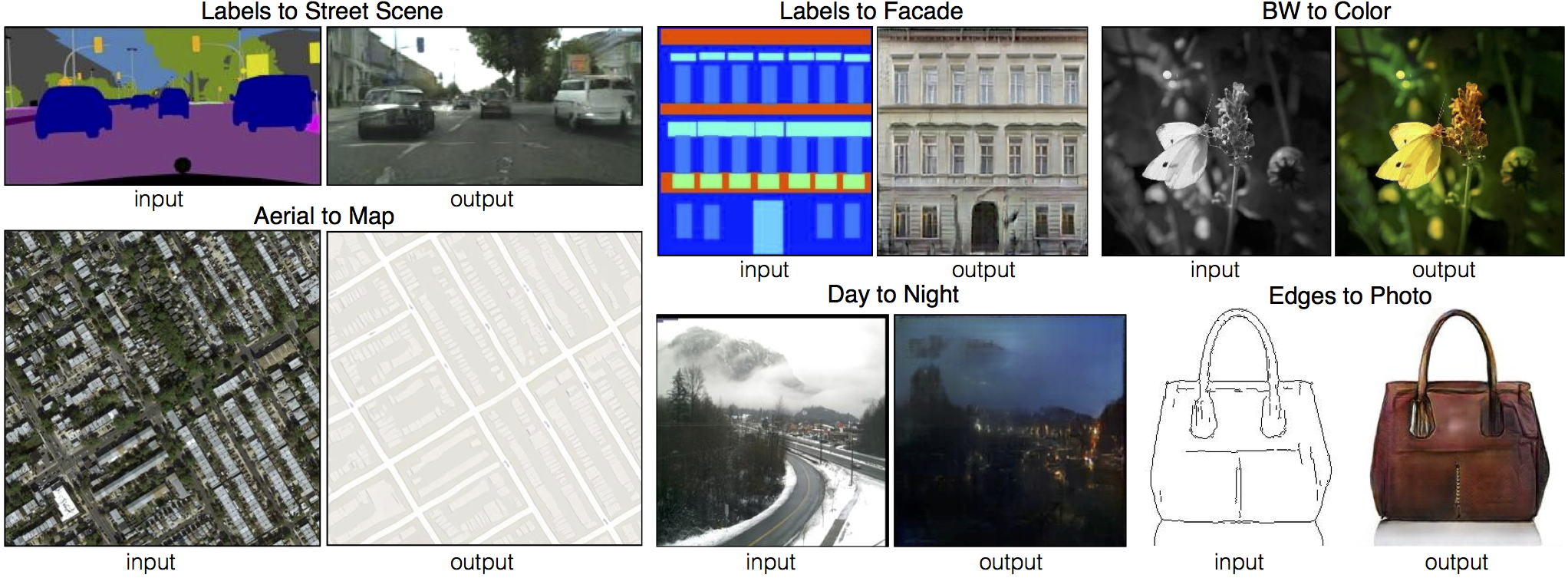

New: Please check out img2img-turbo repo that includes both pix2pix-turbo and CycleGAN-Turbo. Our new one-step image-to-image translation methods can support both paired and unpaired training and produce better results by leveraging the pre-trained StableDiffusion-Turbo model. The inference time for 512x512 image is 0.29 sec on A6000 and 0.11 sec on A100.

Please check out contrastive-unpaired-translation (CUT), our new unpaired image-to-image translation model that enables fast and memory-efficient training.

We provide PyTorch implementations for both unpaired and paired image-to-image translation.

The code was written by Jun-Yan Zhu and Taesung Park, and supported by Tongzhou Wang.

This PyTorch implementation produces results comparable to or better than our original Torch software. If you would like to reproduce the same results as in the papers, check out the original CycleGAN Torch and pix2pix Torch code in Lua/Torch.

Note: The current software works well with PyTorch 1.4. Check out the older branch that supports PyTorch 0.1-0.3.

You may find useful information in training/test tips and frequently asked questions. To implement custom models and datasets, check out our templates. To help users better understand and adapt our codebase, we provide an overview of the code structure of this repository.

CycleGAN: Project | Paper | Torch | Tensorflow Core Tutorial | PyTorch Colab

Pix2pix: Project | Paper | Torch | Tensorflow Core Tutorial | PyTorch Colab

EdgesCats Demo | pix2pix-tensorflow | by Christopher Hesse

If you use this code for your research, please cite:

Unpaired Image-to-Image Translation using Cycle-Consistent Adversarial Networks.

Jun-Yan Zhu*, Taesung Park*, Phillip Isola, Alexei A. Efros. In ICCV 2017. (* equal contributions) [Bibtex]

Image-to-Image Translation with Conditional Adversarial Networks.

Phillip Isola, Jun-Yan Zhu, Tinghui Zhou, Alexei A. Efros. In CVPR 2017. [Bibtex]

pix2pix slides: keynote | pdf, CycleGAN slides: pptx | pdf

CycleGAN course assignment code and handout designed by Prof. Roger Grosse for CSC321 "Intro to Neural Networks and Machine Learning" at University of Toronto. Please contact the instructor if you would like to adopt it in your course.

TensorFlow Core CycleGAN Tutorial: Google Colab | Code

TensorFlow Core pix2pix Tutorial: Google Colab | Code

PyTorch Colab notebook: CycleGAN and pix2pix

ZeroCostDL4Mic Colab notebook: CycleGAN and pix2pix

[Tensorflow] (by Harry Yang), [Tensorflow] (by Archit Rathore), [Tensorflow] (by Van Huy), [Tensorflow] (by Xiaowei Hu), [Tensorflow2] (by Zhenliang He), [TensorLayer1.0] (by luoxier), [TensorLayer2.0] (by zsdonghao), [Chainer] (by Yanghua Jin), [Minimal PyTorch] (by yunjey), [Mxnet] (by Ldpe2G), [lasagne/Keras] (by tjwei), [Keras] (by Simon Karlsson), [OneFlow] (by Ldpe2G)

[Tensorflow] (by Christopher Hesse), [Tensorflow] (by Eyyüb Sariu), [Tensorflow (face2face)] (by Dat Tran), [Tensorflow (film)] (by Arthur Juliani), [Tensorflow (zi2zi)] (by Yuchen Tian), [Chainer] (by mattya), [tf/torch/keras/lasagne] (by tjwei), [Pytorch] (by taey16)

- Linux or macOS

- Python 3

- CPU or NVIDIA GPU + CUDA CuDNN

- Clone this repo:

git clone https://github.com/junyanz/pytorch-CycleGAN-and-pix2pix

cd pytorch-CycleGAN-and-pix2pix

- Install PyTorch 0.4+ and other dependencies (e.g., torchvision, visdom and dominate).

  - For pip users, please type the command pip install -r requirements.txt.

  - For Conda users, you can create a new Conda environment using conda env create -f environment.yml.

  - For Docker users, we provide the pre-built Docker image and Dockerfile. Please refer to our Docker page.

- Download a CycleGAN dataset (e.g. maps):

bash ./datasets/download_cyclegan_dataset.sh maps

- To view training results and loss plots, run python -m visdom.server and click the URL http://localhost:8097.

- To log training progress and test images to W&B dashboard, set the --use_wandb flag with train and test script

- Train a model:

#!./scripts/train_cyclegan.sh

python train.py --dataroot ./datasets/maps --name maps_cyclegan --model cycle_gan

To see more intermediate results, check out ./checkpoints/maps_cyclegan/web/index.html.

- Test the model:

#!./scripts/test_cyclegan.sh

python test.py --dataroot ./datasets/maps --name maps_cyclegan --model cycle_gan

- The test results will be saved to a html file here: ./results/maps_cyclegan/latest_test/index.html.

- Download a pix2pix dataset (e.g. facades):

bash ./datasets/download_pix2pix_dataset.sh facades

- To view training results and loss plots, run python -m visdom.server and click the URL http://localhost:8097.

- To log training progress and test images to W&B dashboard, set the --use_wandb flag with train and test script

- Train a model:

#!./scripts/train_pix2pix.sh

python train.py --dataroot ./datasets/facades --name facades_pix2pix --model pix2pix --direction BtoA

To see more intermediate results, check out ./checkpoints/facades_pix2pix/web/index.html.

- Test the model (bash ./scripts/test_pix2pix.sh):

#!./scripts/test_pix2pix.sh

python test.py --dataroot ./datasets/facades --name facades_pix2pix --model pix2pix --direction BtoA

- The test results will be saved to a html file here: ./results/facades_pix2pix/test_latest/index.html. You can find more scripts in the scripts directory.

- To train and test pix2pix-based colorization models, please add --model colorization and --dataset_mode colorization. See our training tips for more details.

- You can download a pretrained model (e.g. horse2zebra) with the following script:

bash ./scripts/download_cyclegan_model.sh horse2zebra

- The pretrained model is saved at ./checkpoints/{name}_pretrained/latest_net_G.pth. Check here for all the available CycleGAN models.

- To test the model, you also need to download the horse2zebra dataset:

bash ./datasets/download_cyclegan_dataset.sh horse2zebra

- Then generate the results using

python test.py --dataroot datasets/horse2zebra/testA --name horse2zebra_pretrained --model test --no_dropout

- The option --model test is used for generating results of CycleGAN only for one side. This option will automatically set --dataset_mode single, which only loads the images from one set. On the contrary, using --model cycle_gan requires loading and generating results in both directions, which is sometimes unnecessary. The results will be saved at ./results/. Use --results_dir {directory_path_to_save_result} to specify the results directory.

- For pix2pix and your own models, you need to explicitly specify --netG, --norm, --no_dropout to match the generator architecture of the trained model. See this FAQ for more details.

Download a pre-trained model with ./scripts/download_pix2pix_model.sh.

- Check here for all the available pix2pix models. For example, if you would like to download label2photo model on the Facades dataset,

bash ./scripts/download_pix2pix_model.sh facades_label2photo

- Download the pix2pix facades datasets:

bash ./datasets/download_pix2pix_dataset.sh facades

- Then generate the results using

python test.py --dataroot ./datasets/facades/ --direction BtoA --model pix2pix --name facades_label2photo_pretrained

- Note that we specified --direction BtoA as Facades dataset's A to B direction is photos to labels.

- If you would like to apply a pre-trained model to a collection of input images (rather than image pairs), please use --model test option. See ./scripts/test_single.sh for how to apply a model to Facade label maps (stored in the directory facades/testB).

- See a list of currently available models at ./scripts/download_pix2pix_model.sh

We provide the pre-built Docker image and Dockerfile that can run this code repo. See docker.

Download pix2pix/CycleGAN datasets and create your own datasets.

Best practice for training and testing your models.

Before you post a new question, please first look at the above Q & A and existing GitHub issues.

If you plan to implement custom models and dataset for your new applications, we provide a dataset template and a model template as a starting point.

To help users better understand and use our code, we briefly overview the functionality and implementation of each package and each module.

You are always welcome to contribute to this repository by sending a pull request.

Please run flake8 --ignore E501 . and python ./scripts/test_before_push.py before you commit the code. Please also update the code structure overview accordingly if you add or remove files.

If you use this code for your research, please cite our papers.

@inproceedings{CycleGAN2017,

title={Unpaired Image-to-Image Translation using Cycle-Consistent Adversarial Networks},

author={Zhu, Jun-Yan and Park, Taesung and Isola, Phillip and Efros, Alexei A},

booktitle={Computer Vision (ICCV), 2017 IEEE International Conference on},

year={2017}

}

@inproceedings{isola2017image,

title={Image-to-Image Translation with Conditional Adversarial Networks},

author={Isola, Phillip and Zhu, Jun-Yan and Zhou, Tinghui and Efros, Alexei A},

booktitle={Computer Vision and Pattern Recognition (CVPR), 2017 IEEE Conference on},

year={2017}

}

contrastive-unpaired-translation (CUT)

CycleGAN-Torch | pix2pix-Torch | pix2pixHD | BicycleGAN | vid2vid | SPADE/GauGAN

iGAN | GAN Dissection | GAN Paint

If you love cats, and love reading cool graphics, vision, and learning papers, please check out the Cat Paper Collection.

Our code is inspired by pytorch-DCGAN.